Machine Generated Data

Tags

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 29-39 |
| Gender | Male, 95.6% |
| Sad | 71% |
| Calm | 28.5% |
| Fear | 0.2% |
| Angry | 0.1% |
| Disgusted | 0.1% |
| Surprised | 0.1% |
| Happy | 0% |
| Confused | 0% |

Feature analysis

Amazon

AWS Rekognition

| Feature | Confidence |
| --- | --- |
| Person | 98.6% |

Categories

Imagga

Created on 2022-01-09

| Category | Confidence |
| --- | --- |
| paintings art | 66.9% |
| interior objects | 13.3% |
| food drinks | 8.5% |
| events parties | 3.6% |
| cars vehicles | 3.1% |
| text visuals | 2.8% |
| people portraits | 1% |

Captions

Microsoft

Created by unknown on 2022-01-09

| Caption | Confidence |
| --- | --- |
| a group of people sitting in a dark room | 87.8% |
| a group of people in a dark room | 87.7% |
| a group of people sitting around a baseball field | 51% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-13

a nun with her children.

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

a photograph of a man in a black and white photo of a man in a black and white

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-15

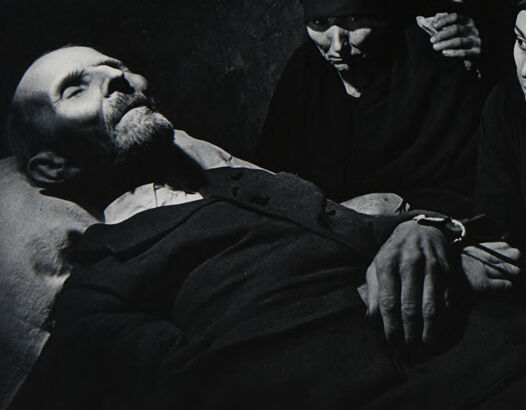

The image depicts a group of individuals gathered in a dimly lit room, with solemn and somber body language and attire suggestive of mourning or a funeral scene. The atmosphere is heavy, characterized by the use of dark clothing and shadowy lighting. One person, presumably deceased, is lying down while others are seated or standing close, their hands clasped or resting on the figure, conveying themes of grief and loss.

Created by gpt-4o-2024-08-06 on 2025-06-15

This black-and-white image depicts a somber scene where several individuals are dressed in dark, heavy clothing. The central focus of the image is a figure lying down, with an outstretched hand resting calmly, which is being gently held by a person seated nearby. The atmosphere is one of quiet reflection and mourning, with the group gathered closely together in a dimly lit setting, suggesting an intimate and possibly solemn occasion. The composition features a strong contrast between light and shadow, emphasizing the contours and textures of fabrics and gestures, contributing to the emotional depth of the scene.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-19

The image appears to be a black and white photograph depicting a group of people gathered around a bedridden individual. The individuals are dressed in traditional Middle Eastern or Central Asian attire, with the women wearing dark robes and headscarves. The man on the bed appears to be elderly or ill, with a long beard and a solemn expression. The overall atmosphere of the image is somber and contemplative, suggesting a scene of mourning or a significant event.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-19

This is a powerful black and white photograph that appears to be a mourning scene. The image shows several women in dark head coverings gathered around what appears to be a deceased man lying on his back. The man has a beard and appears to be dressed in dark clothing. The composition is dramatic, with strong contrast between light and shadow, typical of classic documentary photography. The women's expressions and postures convey grief and solemnity, creating an emotionally charged atmosphere. The stark lighting and dark background add to the somber mood of this scene, which appears to capture a traditional mourning ritual or wake.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-27

The image is a black-and-white photograph of a group of people gathered around a man who appears to be deceased. The man is lying on his back, with his head tilted to the left and his eyes closed. He is wearing a dark-colored shirt and pants, and his right arm is bent at the elbow, with his hand resting on his stomach. His left arm is straight, with his hand hanging off the side of the bed.

Surrounding the man are six individuals, all dressed in dark clothing. They are positioned in a semi-circle around him, with their faces filled with concern and sadness. The woman in the center of the group has her hand on the man's chest, while the others stand behind her, their heads bowed in mourning.

The background of the image is a plain wall, which adds to the somber and intimate atmosphere of the scene. The overall mood of the image is one of grief and loss, as the group of people gather around the deceased man, paying their respects and mourning his passing.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-27

This image is a black-and-white photograph of a group of people gathered around a man lying on a bed. The man, positioned on the left side of the image, is dressed in dark attire and appears to be deceased or unconscious. He wears a watch on his left wrist.

Surrounding the man are seven individuals, all clad in dark clothing and head coverings. They seem to be mourning or paying their respects to the man. The background of the image features a wall with a textured, rough appearance, which adds to the somber atmosphere of the scene. Overall, the image conveys a sense of solemnity and reverence, capturing a poignant moment of grief and remembrance.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-05-26

A black-and-white photo of a man lying on a bed, probably in a hospital room, with several women standing around him. The man is wearing a watch and has a bandage on his hand. The women are wearing hijabs and are looking at something. One of them is holding a piece of paper. The woman on the right is holding her chin with her hand. The wall behind them is painted white.

Created by amazon.nova-lite-v1:0 on 2025-05-26

The image is a black-and-white photograph depicting a somber scene. In the foreground, a man lies on a bed, appearing to be in a state of rest or possibly deceased. His face is turned to the side, and he has a beard and mustache. Around him, several women are gathered, all dressed in dark clothing, including head coverings. They are positioned in a semi-circle around the man, with some looking down at him and others gazing into the distance. Their expressions are solemn, and the overall atmosphere is one of mourning or contemplation. The background is a plain, textured wall, adding to the somber tone of the image.

Google Gemini

Created by gemini-2.0-flash on 2025-05-17

This is a black and white photograph depicting what appears to be a wake or a memorial scene. An elderly man lies in repose on a bed or bier, dressed in a dark suit and white shirt. His hands are clasped together, and his face is serene with his eyes closed, suggesting he is deceased.

Surrounding the man are several women, all dressed in dark clothing and head coverings, which is a traditional sign of mourning in many cultures. They gaze down at the man with expressions of sorrow and solemnity. The lighting is dramatic, with strong contrasts that highlight the figures and deepen the shadows, adding to the somber mood of the scene.

The composition is reminiscent of historical mourning scenes, and the photo's high contrast and grainy texture contribute to a sense of realism and gravitas. The backdrop is a simple wall, providing a stark contrast to the human drama unfolding in the foreground.

Created by gemini-2.0-flash-lite on 2025-05-17

The image is a black and white photograph capturing a somber scene, likely depicting a funeral or a moment of mourning. A deceased elderly man lies in a bed, his face and upper body illuminated by a soft light. He is surrounded by a group of people, predominantly women, who appear to be grieving.

The individuals surrounding the deceased are dressed in dark clothing and head coverings, which further emphasizes the somber mood of the scene. Their facial expressions suggest grief, concern, and possibly disbelief. Some are looking directly at the deceased, while others gaze off into the distance, lost in their thoughts. The lighting and composition of the image draw attention to the man's body and the expressions of those around him, creating a sense of intimacy and shared sorrow. The overall tone of the photograph is poignant and reflective.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-05

The image appears to be a black-and-white photograph depicting a somber and dramatic scene. It features a group of people gathered around a man who is lying down, possibly deceased or injured. The individuals surrounding him are dressed in dark clothing, suggesting a mournful or serious context.

Here are some detailed observations:

Central Figure: The man lying down is the focal point of the image. He appears to be in a state of rest or possibly unconscious. His head is tilted back, and his facial expression is not clearly visible.

Surrounding Individuals:

- There are several people surrounding the central figure, all dressed in dark, possibly traditional or religious attire.

- Their expressions and postures convey emotions of grief, concern, or solemnity.

- One person in the center is holding a small object, possibly a rosary or prayer beads, indicating a religious or spiritual context.

- The individuals are positioned closely around the central figure, suggesting a sense of community or shared experience.

Setting: The background is dark and indistinct, which adds to the somber mood of the image. The setting could be indoors, possibly in a dimly lit room or a place of worship.

Composition: The photograph is composed in a way that draws the viewer's attention to the central figure and the expressions of the surrounding individuals. The use of light and shadow emphasizes the emotional intensity of the scene.

Overall, the image conveys a sense of loss, grief, or a significant emotional event, possibly related to a funeral, wake, or a similar solemn gathering.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-10

This is a black-and-white photograph showing a group of people gathered around a person lying on a hospital bed. The person on the bed appears to be in a state of distress or pain, with their hand resting on their chest. Surrounding the person on the bed are several individuals, including women and men, who seem to be attending to them. The women are wearing traditional head coverings, and the atmosphere of the photograph suggests a solemn or somber occasion, possibly a medical emergency, a death, or a religious event. The lighting is dim, which could indicate the setting is indoors or at night, and the overall mood of the image is one of gravity and concern.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-10

This image portrays a somber and intimate moment. It is a black-and-white photograph depicting a group of people gathered around a man who is lying down, appearing lifeless. The individuals surrounding him are dressed in dark clothing, with some wearing veils or head coverings, which suggests a sense of mourning or grief. Their expressions and body language convey deep emotional distress, with some looking down at the man, while others seem to be in contemplation or sorrow. The lighting is dramatic, casting shadows and highlighting the man's face and the hands of the people near him, adding to the intensity and emotional weight of the scene. The overall atmosphere is one of loss and lamentation.