Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Age | 25-35 |
| Gender | Male, 99.9% |
| Calm | 93.6% |
| Confused | 4.9% |
| Angry | 0.7% |
| Disgusted | 0.3% |
| Sad | 0.2% |
| Fear | 0.1% |
| Surprised | 0.1% |
| Happy | 0.1% |

Feature analysis

Amazon

AWS Rekognition

| Person | 99.2% |

Categories

Imagga

created on 2022-01-08

| paintings art | 97.6% |
| streetview architecture | 1.2% |

Captions

Microsoft

created by unknown on 2022-01-08

| a group of people standing in front of a store | 92.1% |
| a group of people standing outside of a store | 92% |
| a group of people standing in a store | 90.5% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-14

a group of demonstrators march through filming location.

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

a photograph of a man holding a sign that says,'we are not going to the same

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-16

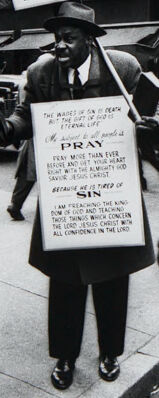

The image portrays a busy urban street scene, likely from a mid-20th-century era, given the style of the cars, clothing, and signage. A man wearing a suit and hat stands prominently in the foreground, holding two signs with religious messages. One sign includes text encouraging prayer and discussing faith. He is standing near a corner, surrounded by pedestrians dressed in various attire, including jackets, suits, and hats. In the background, buildings display advertisements and signs, including "Broner" and "Webster." A boy wearing a leather jacket appears to be observing the scene. Vintage cars are parked or moving along the street, contributing to the overall ambiance of a bustling city environment from a past era.

Created by gpt-4o-2024-08-06 on 2025-06-16

The black-and-white image depicts a busy urban street corner scene from the mid-20th century. Prominent in the image is a man standing and holding an American flag, wearing a hat and overcoat, with a large sign that has religious text and messages urging people to "pray" and to be "saved." The details on the sign include scripture as well as warnings against sin and encouragement for spiritual salvation. Several other pedestrians, dressed in typical mid-century attire, such as hats and overcoats, traverse the sidewalk. In the background, vintage automobiles are parked along the street. The storefronts feature signage with businesses named "Broner," "Weber and Heilbroner," and "Rothstein." The bustling atmosphere is typical of a city setting, capturing a moment in everyday life.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-18

The image depicts a street scene in what appears to be an urban setting, likely New York City. It shows a group of people, some of whom are holding signs or placards, standing on the sidewalk. The signs seem to be related to religious or political messages. The buildings and storefronts in the background suggest a busy commercial area. The overall atmosphere of the scene conveys a sense of activity and engagement, with the people on the street seemingly participating in some form of public demonstration or expression.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-18

This is a black and white photograph that appears to be from an urban street scene, likely from the mid-20th century. Several people are shown wearing typical period clothing including hats and overcoats. The most notable element is what appears to be a person wearing signs with text, possibly a protester or street preacher. The setting looks like a city street with storefronts and buildings visible in the background, along with some parked cars typical of the era. The image has the documentary-style quality common to street photography of that period.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-09

The image depicts a black-and-white photograph of a man standing on a city street, holding a sign that reads "PRAY" in large letters. The man is dressed in a suit and tie, and is surrounded by other people walking on the sidewalk. In the background, there are several buildings and cars visible.

- Man with Sign

  - The man holding the sign is wearing a suit and tie.

  - He has a serious expression on his face.

  - The sign he is holding reads "PRAY" in large letters.

  - The sign also contains smaller text that is not legible.

- Background

  - There are several buildings visible in the background.

  - Some of the buildings appear to be shops or stores.

  - There are also several cars parked on the street.

  - People are walking on the sidewalk, some of whom are looking at the man with the sign.

- Overall Atmosphere

  - The atmosphere of the image is one of seriousness and solemnity.

  - The man with the sign appears to be trying to convey a message or make a statement.

  - The people in the background seem to be going about their daily business, but some of them are paying attention to the man with the sign.

The image suggests that the man with the sign is trying to bring attention to an important issue or cause, and is using his presence on the street to make a statement. The people in the background seem to be reacting to his presence, but it is not clear whether they are supportive or critical of his message.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-09

The image is a black-and-white photograph of a city street scene, featuring a man holding a sign with religious text and a flag. The man, dressed in a suit and hat, stands on the sidewalk, surrounded by other pedestrians. He holds a sign that reads "PRAY" and features additional text, although it is not fully legible. A small American flag is attached to the top of the sign.

In the background, several buildings are visible, including one with a sign that reads "bronner." The overall atmosphere of the image suggests a busy urban environment, with people going about their daily activities. The presence of the man with the sign adds a sense of religious or political activism to the scene.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-08

A black-and-white photograph shows a man standing on a busy street corner, holding a sign that reads "PRAY FOR SIN." The man is wearing a hat and a coat. Another man stands nearby, holding a cigarette in his hand, and a third man is standing on the sidewalk, smoking. Behind them, a car is driving down the street, and a building with a sign that reads "Bronner" is visible. In the distance, there are more buildings and a flag.

Created by amazon.nova-pro-v1:0 on 2025-06-08

The black-and-white photo shows a street scene with people, including two men holding a sign. One of the men is wearing a hat and leather shoes, while the other is wearing a hat and leather shoes. Another man is standing in front of a trash bin and holding a cigarette. A man is standing behind him. A man is walking in front of a car. A building with an American flag is on the side.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-17

Here's a description of the image:

The photograph, taken in black and white, captures a bustling street scene, likely from the mid-20th century, given the style of the cars and clothing.

Central Figures:

- Religious Protester: A man stands on the sidewalk holding a sign. He wears a hat, a suit, and carries signs and American Flag, suggesting he is protesting.

- Young Men: A few young men appear as subjects in the frame with different looks. They are all dressed in the clothing of the time with their own individual styles.

- Other People: Several other pedestrians are visible, some walking, others looking towards the camera, creating a sense of everyday life.

Surrounding Environment:

- Buildings: Buildings line the street, some with signage and awnings. The architectural style suggests a commercial area or city center.

- Vehicles: Cars of the era are parked or moving on the street.

- Sidewalks: The image is clearly taken on a sidewalk.

Overall Impression:

The photograph is evocative of a specific time and place, capturing a moment of urban life with a particular emphasis on religious expression and interaction with the community.

Created by gemini-2.0-flash on 2025-05-17

Here's a description of the image:

This is a black and white street photograph capturing a moment in what appears to be an urban setting, likely an American city during the mid-20th century. The photograph features several people and elements that contribute to the overall narrative.

In the foreground, on the left, a man with glasses is smoking a cigarette. He's wearing a suit and tie, with a patterned shirt peeking through. Next to him is a young man with a hat. He's wearing a jacket over a shirt and jeans, and he also holds a cigarette. He is looking directly at the camera.

A man stands in the middle ground holding two signs with religious messages. The sign he's wearing on his chest reads "The wages of sin is death.. PRAY Pray more than ever..Because he is tired of Sin..I am preaching the Kingdom of God". Behind him flies an American flag.

To the right, there's a young person with their back turned to the camera, looking in the direction of the preacher. Further back, there are people walking on the sidewalk, creating a sense of a busy city street.

Buildings with signs and store names are visible in the background, adding to the urban environment. Old cars are also parked on the street. The contrast between light and shadow is quite strong, creating a sense of depth and adding to the dramatic quality of the image.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-02

The image depicts a street scene in what appears to be an urban area, likely from the mid-20th century. The setting is a busy sidewalk with several people walking or standing around.

Key details include:

Foreground Figures:

- A man in the center of the image is wearing a cowboy hat and holding an American flag. He appears to be looking directly at the camera.

- Next to him is an African American man wearing a suit and hat, holding a large sign. The sign contains a religious message, emphasizing the importance of prayer and referencing biblical scriptures.

Background:

- The background shows a bustling city street with various buildings, including storefronts and signs. One prominent sign reads "Woolworth" and "Walden's," indicating the presence of retail stores.

- Several cars from the mid-20th century are visible on the street, adding to the vintage feel of the image.

- Pedestrians are walking in different directions, some looking at the man with the sign, while others are engaged in their own activities.

Atmosphere:

- The overall atmosphere suggests a lively urban environment with a mix of everyday activities and a religious or protest element introduced by the two central figures.

- The clothing and vehicles hint at a time period around the 1950s or 1960s.

This image captures a moment of public expression in a historical urban context, blending elements of daily life with a more deliberate message being conveyed by the individuals in the foreground.

Qwen

No captions written