Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 19-27 |
| Gender | Male, 99.7% |
| Calm | 99.4% |
| Angry | 0.3% |
| Sad | 0.1% |
| Surprised | 0% |
| Confused | 0% |
| Happy | 0% |
| Fear | 0% |
| Disgusted | 0% |

Feature analysis

Amazon

AWS Rekognition

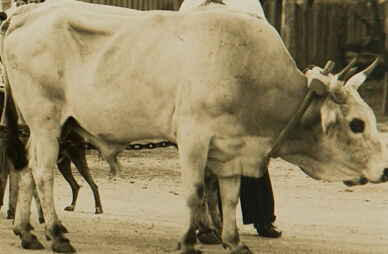

| Label | Confidence |
| --- | --- |
| Cow | 99.9% |

Categories

Imagga

Created on 2022-01-09

| Category | Confidence |
| --- | --- |
| nature landscape | 90.7% |
| cars vehicles | 7.6% |

Captions

Microsoft

Created by unknown on 2022-01-09

| Caption | Confidence |
| --- | --- |
| an old photo of a horse drawn carriage | 98.6% |
| old photo of a horse drawn carriage | 98.1% |
| a close up of a horse drawn carriage | 98% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-12

a horse and cart with a man and woman.

Salesforce

Created by general-english-image-caption-blip on 2025-05-20

a photograph of a man standing next to a cow in a wagon

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-09

The image depicts a vintage rural scene featuring a large ox-drawn wagon with wooden wheels and a covered top, resembling an old-fashioned transport vehicle. Two individuals stand near the ox and wagon, dressed in period-appropriate clothing, including hats. Behind them, wooden structures and a rustic setting, including trees, suggest a farm or countryside location. The photograph has a sepia tone, adding to its historic atmosphere. The word "Annas" appears in the lower-right corner.

Created by gpt-4o-2024-08-06 on 2025-06-09

The image depicts a vintage rural scene featuring a large wooden wagon with a canopy, reminiscent of an old-fashioned covered wagon. The wagon is hitched to a pair of oxen, demonstrating traditional means of transportation and farming. Surrounding the wagon are two men, one standing beside the oxen, and the other beside the wagon. In the background, there are rustic wooden buildings with trees beyond them, illustrating a pastoral setting. The image is black and white, emphasizing the historical nature of the scene. It captures a moment in time when oxen were used for pulling heavy loads, indicative of an earlier era.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-15

The image appears to be a historical photograph depicting a horse-drawn wagon or carriage in a rural setting. The wagon has a canvas covering and appears to be loaded with various items. There are several people standing next to the wagon, including a man wearing a cowboy hat. A white horse is also visible, harnessed to the wagon. The background shows trees and a rural landscape. The image has a sepia tone, indicating it is an older photograph.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-15

This is a historical black and white photograph showing a covered wagon being pulled by an ox or cow. The wagon has a canvas top and wooden wheels, and appears to be a type of transport wagon typical of the early 20th century. Two people are standing with the wagon and animal. The wagon has wooden sides and what appears to be a barrel visible inside. In the background, there are some wooden buildings or barns and trees. The image has a rural, agricultural setting and captures a moment of early American transportation history. The photograph has "Annas" written at the bottom, which might be a photographer's signature or notation.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-30

The image depicts a sepia-toned photograph of a covered wagon being pulled by an ox. The wagon is positioned on the left side of the image, with its front facing to the right. It features a white canvas cover and is equipped with two large wheels at the back and one smaller wheel at the front. A man stands beside the wagon, holding onto it as he guides the ox.

In the background, there are trees and buildings visible, suggesting that the scene is set in a rural or countryside area. The overall atmosphere of the image appears to be one of simplicity and rustic charm, evoking a sense of a bygone era.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-30

The image is a sepia-toned photograph of a covered wagon being pulled by an ox, with two men standing beside it.

The wagon is large and has a canvas cover, with a barrel visible on the side. The ox is light-colored and has a harness around its neck. The two men are wearing white shirts and hats, and one of them is holding the reins of the ox. In the background, there are trees and a building, suggesting a rural or countryside setting.

The overall atmosphere of the image is one of rustic simplicity and hard work, with the men and ox working together to pull the wagon through the countryside. The sepia tone of the photograph adds a sense of nostalgia and timelessness to the scene, evoking a sense of a bygone era.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-05-29

This image depicts a vintage photograph of a covered wagon, possibly from the early 20th century. The wagon is a traditional wooden wagon with a large, canvas-covered canopy. It has four wheels, with two large wheels at the front and two smaller wheels at the back. The wagon is being pulled by a cow, and two men are standing beside it. The man on the left is wearing a hat and a long-sleeved shirt, while the man on the right is wearing a hat and a scarf.

Created by amazon.nova-pro-v1:0 on 2025-05-29

The image is a black-and-white photograph of a wagon and a cow. The wagon is a wooden cart with four wheels and a white cover. There are two barrels attached to the side of the wagon. Two men are standing next to the wagon. One of them is wearing a hat and a long-sleeve shirt. The other man is wearing a hat and a long-sleeve shirt with a scarf around his neck. There is a dog standing behind the men.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-02

Certainly! Here's a description of the image:

Overall Impression:

The image is a vintage, sepia-toned photograph. It evokes a sense of the past, possibly depicting a scene from the early to mid-20th century.

Key Elements:

- Wagon: A covered wagon takes center stage. It's a wooden structure with large wheels and a canvas top, likely used for travel or transport. A barrel and other items are mounted on the side.

- Cattle: A large, light-colored bull or ox is present. It's harnessed, presumably for pulling the wagon or other farm work.

- People: Several men are depicted. Two men appear to be supervising the wagon and the animal, and a few others are in the background. They all seem to be wearing hats, common in the time period.

- Setting: The scene is outdoors, on what appears to be a dirt road or farmyard. There are some simple wooden structures, possibly sheds or outbuildings, in the background, along with trees.

- Mood: The overall mood is quiet and rustic. It gives a sense of rural life, perhaps farming, and the use of animals for labor and transport.

Additional Details:

- The presence of the wagon, the bull, and the attire of the people point to a time when animal power and horse-drawn transportation were the norm.

- The photograph's tone suggests that it has been taken many years ago, adding to its historical interest.

Created by gemini-2.0-flash on 2025-05-02

The sepia-toned image captures a scene from what appears to be a bygone era. A large, light-colored ox is harnessed and ready to pull a wagon. The ox's hide shows its form and its horns curve slightly inwards.

The wagon is a substantial wooden structure with a covered top. A barrel is affixed to the side, and there is a washboard hanging on it.

Two men, both wearing hats, stand near the wagon. One is on the ox's side, dressed in a light-colored shirt and dark pants, looking towards the camera. The other man, also in a light-colored shirt and dark pants, stands near the wagon.

Behind the men, a group of children can be seen. Buildings with wooden walls and gabled roofs form the backdrop, and trees are visible on the horizon. The ground is a mixture of dirt and sparse vegetation. In the bottom right corner, a handwritten word "Annos" is visible.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-02

The image depicts a historical or vintage scene featuring a covered wagon, likely from the 19th or early 20th century. The wagon is a traditional wooden structure with a canvas cover, designed to protect the contents and passengers from the elements. It is equipped with large wooden wheels and appears to be well-stocked with various items, including barrels and other supplies.

In front of the wagon, two oxen are harnessed to pull it. One man is standing next to the oxen, holding the reins, while another man is positioned near the head of the oxen, possibly guiding or controlling them. Both men are dressed in period clothing, with one wearing a hat and the other a bandana around his neck.

The background shows a rural setting with trees and a wooden structure, possibly a barn or shed. The overall scene suggests a time of pioneering or early settlement, evoking images of frontier life and travel by covered wagon. The sepia tone of the photograph adds to the vintage feel of the image.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-24

The image is a sepia-toned photograph depicting a rural scene. It features an old-style covered wagon, likely used for transportation or hauling goods, with a canvas covering and wooden construction. The wagon has large wooden wheels and appears to be hitched to a pair of oxen. There are two men standing beside the wagon. One man is dressed in a white shirt and dark trousers, while the other is wearing a hat and lighter-colored clothing. In the background, there are wooden sheds and trees, suggesting a farm setting. The ground is unpaved and appears to be dirt. The overall atmosphere of the image evokes a historical or pastoral feel, likely from a bygone era.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-24

This is a sepia-toned historical photograph depicting a rural scene. The central focus is a large, covered wooden wagon with large wheels, reminiscent of those used in the 19th century. The wagon has a canvas hood that is partially rolled up at the front, and various items are visible both inside and outside the wagon. A cylindrical object resembling a barrel is attached to the side of the wagon.

In the foreground, several people are standing next to the wagon. Two men are prominently visible, dressed in hats and attire typical of the era. One of the men is holding the reins of a large, light-colored ox, which is yoked and appears to be pulling the wagon. The background includes a wooden structure that looks like a shed or barn, and there are trees further back, indicating a rural or farm-like setting. The ground is dirt, and there is some grass in the lower part of the image. The overall scene suggests a moment of daily life in a rural or frontier environment.