Machine Generated Data

Tags

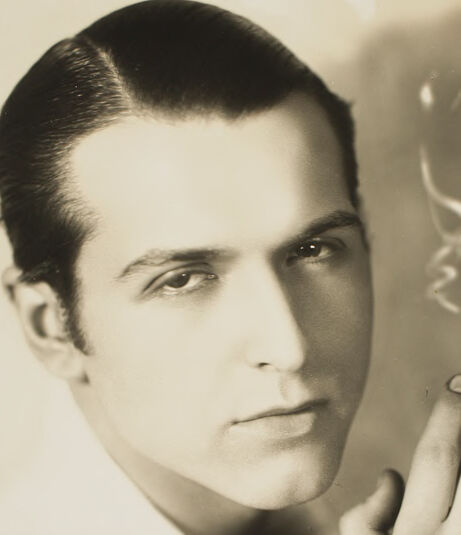

Clarifai

created on 2023-10-15

Imagga

created on 2021-12-15

Google

created on 2021-12-15

| Tag | Confidence (%) |
| --- | --- |
| Forehead | 98.5 |
| Face | 98.4 |
| Nose | 98.4 |
| Lip | 97 |
| Chin | 96.7 |
| Eyebrow | 94.6 |
| Eyelash | 92.1 |
| Jaw | 88.2 |
| Neck | 87.5 |
| Flash photography | 87.1 |
| Gesture | 85.3 |
| Style | 83.8 |
| Tie | 77.9 |
| Monochrome photography | 74.9 |
| Monochrome | 72.1 |
| Formal wear | 71.4 |
| Vintage clothing | 69.6 |
| Room | 64.9 |
| White-collar worker | 64.5 |
| Eyewear | 63.5 |

Microsoft

created on 2021-12-15

| Tag | Confidence (%) |
| --- | --- |
| wall | 98.9 |
| person | 97.3 |
| human face | 94.8 |
| indoor | 93.9 |
| portrait | 86.8 |
| text | 86.7 |
| man | 70.9 |
| posing | 47.5 |
| picture frame | 14.1 |

Color Analysis

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 18-30 |
| Gender | Male, 99.1% |
| Calm | 98.8% |
| Angry | 0.5% |
| Sad | 0.3% |
| Confused | 0.1% |
| Surprised | 0.1% |
| Happy | 0% |
| Fear | 0% |
| Disgusted | 0% |

Microsoft Cognitive Services

| Attribute | Value |
| --- | --- |
| Age | 31 |
| Gender | Male |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Amazon

Person

| Feature | Confidence |
| --- | --- |
| Person | 97.3% |

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 96% |
| people portraits | 4% |

Captions

Microsoft

created on 2021-12-15

| Caption | Confidence |
| --- | --- |
| a person standing in front of a mirror posing for the camera | 74.6% |
| a person in front of a mirror posing for the camera | 74.5% |
| a person taking a selfie in front of a mirror posing for the camera | 64.8% |

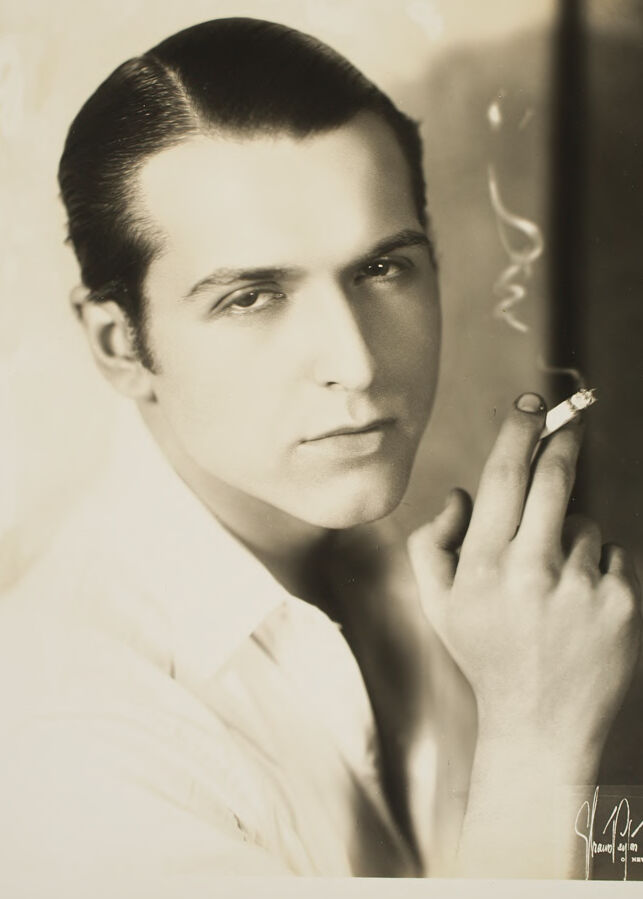

Text analysis

Amazon

YORK

SR YORK

SR

hauol ain

NEW TOBE

hauol

ain

NEW

TOBE