Machine Generated Data

Tags

Amazon

created on 2022-01-08

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-08

| statue | 44.5 |
| sculpture | 41.7 |
| religion | 35.9 |
| art | 33.5 |
| ancient | 32 |
| culture | 29.9 |
| travel | 28.9 |
| history | 27.7 |
| temple | 27.6 |
| earthenware | 26.2 |
| stone | 25.4 |
| architecture | 24.2 |
| sand | 22.7 |
| religious | 22.5 |
| monument | 22.4 |
| old | 20.9 |
| soil | 20.3 |
| god | 20.1 |
| tourism | 19.8 |
| ceramic ware | 19.7 |
| figure | 16.6 |
| decoration | 15.6 |
| man | 15.5 |
| famous | 14.9 |
| earth | 14.3 |
| traditional | 14.1 |
| china | 14.1 |
| carving | 13.4 |
| antique | 13 |
| utensil | 12.9 |
| historic | 12.8 |
| holy | 12.5 |
| spirituality | 12.5 |
| oriental | 12.3 |
| historical | 12.2 |
| people | 11.7 |
| person | 11.7 |
| body | 11.2 |
| tourist | 10.9 |
| carved | 10.8 |
| face | 10.7 |
| sport | 10.6 |
| spiritual | 10.6 |
| faith | 10.5 |
| city | 10 |
| landmark | 9.9 |
| southeast | 9.9 |
| worship | 9.7 |
| strong | 9.4 |
| east | 9.3 |
| cadaver | 8.9 |
| soldier | 8.8 |
| museum | 8.7 |
| building | 8.7 |
| meditation | 8.6 |
| male | 8.5 |
| world | 8.3 |
| bronze | 8.3 |
| detail | 8 |
| relief | 7.9 |
| marble | 7.9 |
| warrior | 7.8 |
| belief | 7.8 |
| adult | 7.8 |
| heritage | 7.7 |
| prayer | 7.7 |
| wall | 7.7 |
| muscular | 7.6 |
| head | 7.6 |
| human | 7.5 |
| peace | 7.3 |
| women | 7.1 |

Google

created on 2022-01-08

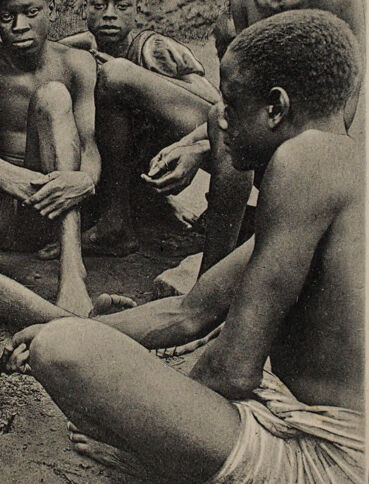

| Human body | 88.4 |
| Headgear | 81.3 |
| Chest | 81.3 |
| Adaptation | 79.2 |
| Art | 78.7 |
| Thigh | 78 |
| Crew | 77.1 |
| Abdomen | 74.1 |
| Vintage clothing | 72.2 |
| Knee | 71.2 |
| Barechested | 70 |
| History | 67.8 |
| Shorts | 67.4 |
| Illustration | 66.9 |
| Team | 66.5 |
| Sitting | 65.6 |
| Visual arts | 64.1 |
| Monochrome | 63.6 |
| Stock photography | 62.5 |
| Human leg | 59.5 |

Color Analysis

Face analysis

Amazon

Microsoft

AWS Rekognition

| Age | 23-33 |
| Gender | Male, 100% |
| Sad | 29.9% |
| Calm | 22.6% |
| Confused | 21.6% |
| Angry | 11.3% |
| Disgusted | 7.2% |
| Fear | 2.9% |
| Surprised | 2.5% |
| Happy | 1.9% |

AWS Rekognition

| Age | 23-33 |
| Gender | Male, 99.7% |
| Sad | 55.1% |
| Calm | 39.1% |
| Confused | 2.3% |
| Fear | 1% |
| Disgusted | 0.7% |
| Surprised | 0.6% |
| Angry | 0.6% |
| Happy | 0.5% |

AWS Rekognition

| Age | 23-31 |
| Gender | Male, 100% |
| Calm | 45% |
| Sad | 19.5% |
| Confused | 18.4% |
| Angry | 7.4% |
| Fear | 3.4% |
| Disgusted | 3.2% |
| Happy | 1.7% |
| Surprised | 1.4% |

AWS Rekognition

| Age | 22-30 |
| Gender | Male, 99.8% |
| Sad | 98.6% |
| Fear | 0.6% |
| Angry | 0.3% |
| Calm | 0.2% |
| Surprised | 0.1% |
| Confused | 0.1% |
| Disgusted | 0.1% |
| Happy | 0% |

AWS Rekognition

| Age | 35-43 |
| Gender | Male, 99.7% |
| Calm | 66.8% |
| Sad | 31.2% |
| Angry | 0.6% |
| Surprised | 0.4% |
| Fear | 0.4% |
| Disgusted | 0.3% |
| Confused | 0.2% |
| Happy | 0.1% |

AWS Rekognition

| Age | 22-30 |
| Gender | Male, 99.8% |
| Sad | 98.5% |
| Fear | 0.8% |
| Confused | 0.3% |
| Calm | 0.2% |
| Angry | 0.1% |
| Disgusted | 0.1% |
| Surprised | 0% |
| Happy | 0% |

AWS Rekognition

| Age | 21-29 |
| Gender | Male, 96.9% |
| Calm | 55.2% |
| Sad | 35.3% |
| Fear | 4.1% |
| Angry | 2.1% |
| Confused | 1.8% |
| Disgusted | 0.6% |
| Happy | 0.5% |
| Surprised | 0.4% |

AWS Rekognition

| Age | 19-27 |
| Gender | Male, 98.2% |
| Sad | 96.1% |
| Calm | 3% |
| Angry | 0.4% |
| Disgusted | 0.2% |
| Fear | 0.1% |
| Confused | 0.1% |
| Happy | 0.1% |
| Surprised | 0% |

Microsoft Cognitive Services

| Age | 36 |
| Gender | Male |

Microsoft Cognitive Services

| Age | 30 |
| Gender | Male |

Microsoft Cognitive Services

| Age | 28 |
| Gender | Male |

Microsoft Cognitive Services

| Age | 13 |
| Gender | Male |

Microsoft Cognitive Services

| Age | 48 |
| Gender | Male |

Microsoft Cognitive Services

| Age | 9 |
| Gender | Male |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Likely |
| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Possible |
| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| paintings art | 31.8% |
| people portraits | 23.7% |
| beaches seaside | 20.2% |
| streetview architecture | 10.8% |
| events parties | 5.2% |
| nature landscape | 2.8% |
| pets animals | 1.9% |
| food drinks | 1.8% |
| interior objects | 1.1% |

Captions

Microsoft

created on 2022-01-08

| Rod Taylor et al. sitting posing for the camera | 84.2% |
| Rod Taylor et al. sitting on the ground | 84.1% |
| Rod Taylor et al. sitting on a bench | 67.6% |

Text analysis

Amazon

Natives at play.