Machine Generated Data

Tags

Amazon

created on 2021-12-15

Clarifai

created on 2023-10-15

Imagga

created on 2021-12-15

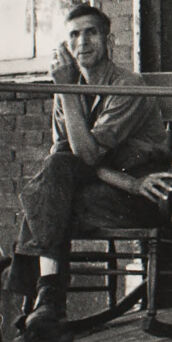

| Tag | Confidence |
| --- | --- |
| man | 26.2 |
| male | 23.9 |
| people | 21.7 |
| room | 20.9 |
| family | 20.4 |
| person | 19.8 |
| happy | 18.8 |
| classroom | 17.9 |
| portrait | 17.5 |
| chair | 17.5 |
| barbershop | 17.1 |
| adult | 15.8 |
| love | 15.8 |
| black | 15.6 |
| shop | 15.2 |
| child | 15.2 |
| shopping cart | 14.7 |
| wheeled vehicle | 14.3 |
| couple | 13.9 |
| lifestyle | 13.7 |
| handcart | 13.6 |
| old | 12.5 |
| seat | 12.5 |
| mother | 12.2 |
| sitting | 12 |
| happiness | 11.7 |
| smiling | 11.6 |
| outdoors | 11.2 |
| window | 11 |
| parent | 10.7 |
| mercantile establishment | 10.6 |
| home | 10.4 |
| senior | 10.3 |
| women | 10.3 |
| youth | 10.2 |
| building | 9.8 |
| kid | 9.7 |
| fun | 9.7 |
| together | 9.6 |
| urban | 9.6 |
| married | 9.6 |
| husband | 9.5 |
| men | 9.4 |
| father | 9.3 |
| smile | 9.3 |
| city | 9.1 |
| kin | 9.1 |
| one | 9 |
| world | 8.9 |
| boy | 8.7 |
| day | 8.6 |
| wife | 8.5 |
| joy | 8.3 |
| care | 8.2 |
| indoor | 8.2 |
| cheerful | 8.1 |
| bench | 8.1 |
| indoors | 7.9 |
| retired | 7.8 |
| daughter | 7.7 |
| outside | 7.7 |
| casual | 7.6 |
| relax | 7.6 |
| conveyance | 7.5 |
| relationship | 7.5 |
| leisure | 7.5 |
| wheelchair | 7.5 |
| floor | 7.4 |
| alone | 7.3 |
| playing | 7.3 |
| dirty | 7.2 |
| place of business | 7.1 |

Google

created on 2021-12-15

| Tag | Confidence |
| --- | --- |
| Chair | 89.7 |
| Musician | 87.6 |
| Dress | 84.3 |
| Musical instrument | 79.6 |
| Vintage clothing | 74.4 |
| Classic | 73.7 |
| Art | 72.3 |
| Sitting | 67.8 |
| Suit | 66.9 |
| Monochrome | 65.2 |
| Table | 64.4 |
| Stock photography | 63.4 |
| Plant | 61.2 |
| Room | 60.2 |
| Folk instrument | 59.4 |
| Painting | 58 |
| Monochrome photography | 56.8 |
| Retro style | 54.5 |
| Photo caption | 53.1 |
| History | 52.4 |

Color Analysis

Face analysis

Amazon

Microsoft

AWS Rekognition

| Age | 22-34 |

| Gender | Female, 92.8% |

| Calm | 56.5% |

| Sad | 27.4% |

| Angry | 12.8% |

| Confused | 1.4% |

| Fear | 1% |

| Disgusted | 0.4% |

| Surprised | 0.3% |

| Happy | 0.2% |

AWS Rekognition

| Age | 20-32 |

| Gender | Male, 62.7% |

| Calm | 86.6% |

| Sad | 7.9% |

| Angry | 3.8% |

| Disgusted | 0.6% |

| Confused | 0.5% |

| Surprised | 0.2% |

| Fear | 0.2% |

| Happy | 0.1% |

AWS Rekognition

| Age | 36-54 |

| Gender | Male, 99.2% |

| Confused | 56.2% |

| Calm | 22.3% |

| Sad | 16.2% |

| Disgusted | 1.8% |

| Surprised | 1.3% |

| Angry | 1% |

| Fear | 0.7% |

| Happy | 0.5% |

AWS Rekognition

| Age | 0-3 |

| Gender | Male, 80.1% |

| Fear | 82.3% |

| Surprised | 8.4% |

| Calm | 6.8% |

| Happy | 0.8% |

| Confused | 0.6% |

| Angry | 0.4% |

| Disgusted | 0.4% |

| Sad | 0.3% |

AWS Rekognition

| Age | 49-67 |

| Gender | Female, 50% |

| Calm | 60.9% |

| Happy | 30.3% |

| Angry | 4.9% |

| Fear | 1.3% |

| Sad | 1.3% |

| Surprised | 0.9% |

| Disgusted | 0.3% |

| Confused | 0.1% |

Microsoft Cognitive Services

| Age | 49 |

| Gender | Male |

Microsoft Cognitive Services

| Age | 5 |

| Gender | Female |

Microsoft Cognitive Services

| Age | 0 |

| Gender | Female |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 98.7% |

Captions

Microsoft

created on 2021-12-15

| Caption | Confidence |
| --- | --- |
| a vintage photo of a person in front of a window | 89.9% |
| a vintage photo of a person standing in front of a window | 89.4% |
| a vintage photo of a person | 89.3% |

Text analysis

Amazon

PM

E.C

E.C