Machine Generated Data

Tags

Color Analysis

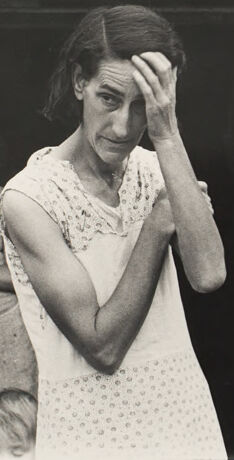

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 23-37 |
| Gender | Male, 95.5% |
| Sad | 72.4% |
| Calm | 12% |
| Confused | 6.4% |
| Fear | 5% |
| Surprised | 2.1% |
| Angry | 1.6% |
| Disgusted | 0.3% |
| Happy | 0.1% |

Feature analysis

AWS Rekognition

| Label | Confidence |
| --- | --- |
| Person | 99.5% |

Categories

Imagga

Created on 2021-12-15

| Category | Confidence |
| --- | --- |
| paintings art | 70.7% |
| streetview architecture | 23.6% |
| people portraits | 3.3% |

Captions

Microsoft

Created by unknown on 2021-12-15

| Caption | Confidence |
| --- | --- |
| a woman standing in front of a building | 86.5% |
| a woman sitting on a bench in front of a building | 68.9% |
| a woman sitting in front of a building | 68.8% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-12

photograph of a bride and groom.

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

a photograph of a woman in a white dress standing in front of a house

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-12

The image features a rustic scene with a log cabin that has a weathered wooden roof and walls made of logs and mud. Outside the cabin, there are objects such as a broom and kitchen items, suggesting humble living conditions. A woman is standing in front wearing a light-colored patterned dress, while part of an additional figure and a small child can be seen near the doorway. The overall atmosphere suggests a rural lifestyle, potentially in the early 20th century.

Created by gpt-4o-2024-08-06 on 2025-06-12

The image depicts a woman standing in front of a rustic log cabin. She is wearing a sleeveless, patterned dress and has her arms raised in front of her. The cabin behind her is made of logs with a wooden shingle roof. To the left of the door, there is a small shelf or ledge that holds various household items, including a kettle and a broom. In the doorway, there are two children visible. The exterior of the cabin has a rough and simplistic appearance, suggesting an older or rural setting. To the right of the doorway, a piece of fabric or towel is draped over the logs. The overall composition suggests a scene of everyday life in a rural or historic context.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-18

The image depicts two women standing in the doorway of a rustic, wooden structure. The woman in the foreground appears distressed, with her hand covering her face. The woman in the background has a more neutral expression. The setting suggests a rural, impoverished environment, with the wooden structure and its dilapidated roof visible. The image has a somber, melancholic tone, capturing a moment of emotional distress or hardship experienced by the women.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-18

This is a black and white photograph that appears to be from the Depression era or early 20th century. It shows a rustic log cabin or wooden structure with a shingled roof. In the doorway, there are two people - one in a white sleeveless dress in the foreground who has her hand to her forehead in what appears to be a gesture of distress or weariness. Behind her, partially visible in the doorway, is another person in what looks like a patterned dress. The image captures a sense of rural poverty and hardship typical of documentary photography from this period. The composition and lighting create a powerful emotional impact, highlighting the difficult living conditions and human struggle of the time.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-04

The image depicts a black-and-white photograph of a woman and a child standing in the doorway of a rustic log cabin or small house. The woman, positioned centrally, has her right hand raised to her forehead, with her elbow bent and her arm angled across her body. She wears a sleeveless white dress adorned with lace at the top and a patterned fabric at the bottom. Behind her, a young girl stands in the doorway, partially obscured by the woman's arm. The girl is dressed in a long-sleeved dress and appears to be holding a broom.

The building's exterior is constructed from rough-hewn logs, and the roof is composed of wooden shingles. A shelf on the left side of the image holds a few items, including a metal bucket and a bottle. The overall atmosphere of the image suggests a rural or impoverished setting, with the woman and child appearing to be engaged in some form of domestic activity.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-04

The image is a black-and-white photograph of a woman standing in front of a log cabin, with another woman and a child visible inside. The woman in the foreground has dark hair pulled back and is wearing a white dress with lace details on the top. She is holding her right hand to her forehead, possibly due to fatigue or stress.

Behind her, a woman and a child are standing in the doorway of the log cabin. The woman has dark hair and is wearing a light-colored dress, while the child is partially obscured by the woman's arm. The cabin appears to be made of rough-hewn logs, with a sloping roof covered in shingles. A broom is leaning against the wall to the left of the doorway, and a shelf with various items is visible on the left side of the image.

The overall atmosphere of the image suggests a sense of hardship and struggle, possibly during the Great Depression era. The woman's pose and expression convey a sense of exhaustion and worry, while the rustic surroundings and simple clothing suggest a life of poverty and hardship.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-03

The image is a black-and-white photograph of a woman standing in front of a log cabin. The woman is wearing a white dress and has her hand on her forehead, appearing to be in distress or deep thought. Behind her, another woman stands in the doorway of the cabin, looking at the woman in front. The cabin has a wooden roof and walls made of logs, with a small window on the side. The image has a vintage feel, suggesting it was taken in the past.

Created by amazon.nova-pro-v1:0 on 2025-06-03

A black-and-white photo shows a woman standing in front of a house with her hands on her face. She is wearing a dress and seems to be looking at something. Behind her, there is a woman standing in the doorway, and a child is standing in front of her. On the left, there is a table with a bucket, a broom, and a bottle.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-16

Here's a description of the image:

Overall Impression: The photograph is a black and white shot of a woman standing in the doorway of a rustic cabin. It evokes a sense of hardship and perhaps desperation.

Foreground:

- Woman: A woman is the primary focus. She is positioned in the doorway. She is wearing a light-colored dress with a pattern and appears to be holding her hand to her head, as if in distress or thought. Her expression is somber.

- Doorway: The doorway is a dark rectangle, leading into the interior of the cabin.

Background:

- Girl: Behind the woman, inside the doorway, is a young girl. She appears to be looking out or watching. She is wearing a simple dress.

- Cabin: The cabin is constructed from rough-hewn logs. The roof is shingled and weathered. There are hints of a shelf or ledge near the door, with what appear to be a jar, bucket, and a broom resting on it. The overall impression is of poverty and a rural setting.

Atmosphere: The black and white format and the subjects' expressions give the photo a documentary feel. The composition and lighting, combined with the environment, suggest a narrative of struggle and resilience. The photograph may have been taken during the Great Depression, and captures the hardship faced by many rural families during that era.

Created by gemini-2.0-flash on 2025-05-16

The black and white photograph shows a woman standing in the doorway of a rustic cabin, with a younger girl behind her. The woman in the foreground has a concerned expression and is holding her hand to her forehead. She wears a light-colored dress with a subtle pattern.

The girl in the background stands inside the cabin's entrance, partly obscured by the doorframe. She looks downward, with a solemn demeanor. The cabin is made of rough logs and has a weathered, shingled roof. A shelf with a few household items, including a container and a broom, is visible to the left of the door. A white cloth hangs on the right side of the door. The overall tone of the image is somber and evocative of hardship.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-01

The image is a black-and-white photograph depicting two women standing in the doorway of a rustic, wooden house with a shingled roof. The house appears to be constructed from rough, uneven logs, suggesting a rural or traditional setting.

The woman in the foreground is wearing a light-colored dress with a floral or dotted pattern. She has her hand on her forehead, a gesture that often indicates worry, fatigue, or distress. Her expression appears somber or troubled.

Behind her, another woman stands in the doorway, partially obscured by the door frame. She is wearing a dress with a different pattern and is holding onto the door frame with one hand. Her expression is not clearly visible, but her posture suggests she is observing something outside the house.

The scene conveys a sense of hardship or concern, possibly reflecting the challenges of rural life or a difficult situation the women are facing. The overall atmosphere of the photograph is somber and reflective.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-05

This is a black-and-white photograph featuring a woman standing in the doorway of a rustic, weathered wooden house. The woman is holding her hand against her forehead, appearing to be deep in thought or possibly distressed. She is wearing a sleeveless, light-colored dress with small patterns. Her expression is serious, and her posture suggests contemplation or fatigue.

Behind her, partially visible, is another person, possibly a child, standing near the threshold of the doorway. The house has a simple, unadorned appearance with a rough, unevenly aligned wooden roof and walls made of logs. The scene evokes a sense of rural life and modest living conditions.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-05

This is a black-and-white photograph depicting a scene in front of a log cabin. The image shows a woman in the foreground wearing a light-colored dress, with her hand raised to her forehead, possibly in a gesture of concern or exhaustion. In the background, inside the cabin, a young girl is visible, standing near a table with various objects on it, including a metal pot and what appears to be a cloth or towel. The cabin has a rustic appearance with wooden logs forming the walls and a shingled roof. The overall mood of the photograph seems somber and reflective of a simpler, perhaps challenging, lifestyle.