Machine Generated Data

Face analysis

AWS Rekognition

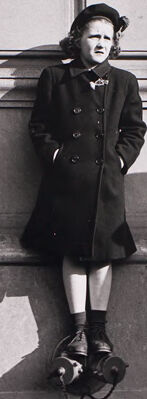

| Attribute | Value |
|---|---|
| Age | 21-33 |
| Gender | Female, 83.3% |
| Disgusted | 69.2% |
| Sad | 14.2% |
| Calm | 6.7% |
| Fear | 3.3% |
| Angry | 3% |
| Confused | 2.7% |
| Surprised | 0.5% |
| Happy | 0.4% |

Feature analysis

AWS Rekognition

| Feature | Confidence |
|---|---|
| Coat | 100% |

Categories

Imagga

Created on 2021-12-14

| Category | Confidence |
|---|---|
| paintings art | 77.8% |
| streetview architecture | 12.8% |
| pets animals | 5.9% |
| nature landscape | 1.6% |

Captions

Microsoft

Created by an unknown model on 2021-12-14

| Caption | Confidence |
|---|---|
| a group of people posing for a photo | 74.3% |
| a group of people posing for the camera | 74.2% |
| a person posing for a photo | 74.1% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-13

a photograph of a group of young women.

Salesforce

Created by general-english-image-caption-blip on 2025-05-21

a photograph of a woman in a coat and hat sitting on a ledge

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-10

This black-and-white photograph captures a city scene featuring individuals positioned near an urban wall. One person is perched on a ledge of the wall, wearing a dark coat and accessorized with neatly tied shoes and socks. The architecture of the wall includes rectangular paneling and a window with curtains on the left. The light casts distinct shadows across the textured surface, adding depth to the composition. Flanking figures in long coats add balance and structure to the image.

Created by gpt-4o-2024-08-06 on 2025-06-10

The image is a black and white photograph featuring a row of individuals standing against a building wall with rectangular paneling. The central figure, a child, is dressed in a coat, stockings, and shoes, and is sitting on a ledge or step, with feet not reaching the ground. The clothing of all individuals suggests a colder climate, with coats and long garments visible. The lighting creates distinct shadows cast on the wall behind them, indicating the photo was taken in bright daylight. The composition focuses on the play of light and shadow, as well as the arrangement of figures against the backdrop of the building. There's an open window with curtains on the left side of the image.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-16

The image shows a black and white photograph of several people standing outside a building. In the center of the frame, there is a woman wearing a coat and hat, standing with her hands in her pockets. To her left, there is another woman wearing a long coat. To the right, there is a man standing in the background. The building behind them appears to be made of stone or concrete, with large windows. The overall scene has a somber, contemplative mood.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-16

This is a black and white photograph that appears to be from the mid-20th century. It shows several people wearing dark winter coats standing against what appears to be the exterior wall of a building. The central figure is leaning against the wall wearing a dark coat, knee socks, and what look like roller skates on their feet. There are other figures in dark coats and hats on either side, and someone partially visible at the top right of the frame. The composition creates interesting shadows on the light-colored wall behind the subjects. The image has a documentary or street photography style characteristic of that era.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-09

The image is a black-and-white photograph of a young girl sitting on a ledge, surrounded by other children and adults.

- The girl is wearing a dark coat, a hat, and shoes. She has her hands in her pockets and is looking at the camera.

- To the left of the girl, there is another child standing next to an adult. The child is also wearing a dark coat and hat.

- To the right of the girl, there is another child standing next to an adult. The child is wearing a dark coat and hat.

- In the background, there is a building with a window and a door. The window has curtains, and the door is open.

- The overall atmosphere of the image suggests that it was taken in the 1940s or 1950s, based on the clothing and hairstyles of the people in the photo.

The image appears to be a candid shot of a group of children and adults gathered outside a building. The girl sitting on the ledge is the main focus of the image, and her expression and posture suggest that she is feeling confident and relaxed. The other children and adults in the photo are also dressed in dark coats and hats, which suggests that they may be attending a formal event or gathering. Overall, the image captures a moment in time of a group of people gathered together, and it provides a glimpse into the fashion and culture of the time period in which it was taken.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-09

This image is a black-and-white photograph of a young girl sitting on a ledge, surrounded by three other people. The girl is wearing a dark coat, hat, and shoes, with her hands in her pockets. She has a small bow at her neck and is sitting on a ledge with her legs crossed.

To the left of the girl stands an older woman, also dressed in dark clothing, including a hat and coat. On the right side of the girl is another woman, wearing a dark coat. In the background, a boy is standing on a ledge above the girl, wearing a dark jacket and light-colored pants.

The scene appears to be set in front of a building with a window on the left side of the image. The overall atmosphere suggests a formal or posed photograph, possibly taken in the mid-20th century.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-08

The image is a black-and-white photograph of three women standing in front of a building. The woman in the middle is standing on a ledge, wearing a hat and a coat, and looking down. The woman on the left is standing with her hands in her pockets, and the woman on the right is standing with her hands clasped in front of her. The building has a window with a curtain, and there is a boy standing on the ledge above the window.

Created by amazon.nova-pro-v1:0 on 2025-06-08

A black-and-white photo of four people standing on the wall of a building. The girl in the middle is wearing a hat and a coat. She is standing on the metal object with a chain attached to it. The woman on the left is wearing a hat and a coat. The woman on the right is wearing a coat. The boy on the right is wearing a coat. The building has a window with curtains.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-16

Here's a description of the image:

This is a black and white photograph, likely from the mid-20th century, featuring several people in an outdoor setting.

Composition: The image is framed with a large white border, giving it a classic photographic print aesthetic. The scene itself is centered and somewhat compressed within the frame, creating a sense of focused observation.

Subject and Setting: The photograph captures a street scene, likely in a city. The background features a textured, light-colored stone wall with rectangular architectural details. There's a window with curtains visible on the left. The lighting suggests a sunny day, casting strong shadows.

People: There are four visible figures:

- On the far left, a woman in a dark coat and hat is standing.

- In the center, a young girl is perched on what appears to be a ledge or fountain. She's wearing a dark coat and shoes, and her posture suggests she is playing or enjoying the view. She has her hands in her pockets.

- To the right of the girl, a woman, also in a dark coat and hat, is visible.

- At the upper right, a young boy stands on a higher level of the structure.

Atmosphere: The scene has a sense of formality due to the clothing, the architectural backdrop, and the subdued lighting. The photo captures a moment of everyday life with a touch of the surreal due to the girl's unusual position.

Created by gemini-2.0-flash on 2025-05-16

The black and white photograph features a group of people standing in front of a building. On the left, an older woman dressed in a long coat and hat, clutching a small purse, stands with a stern expression. In the center, a young girl wearing a coat, hat, and socks stands perched on what appears to be a water fountain. Her hands are in her pockets, and she gazes straight ahead. To the right of the girl, a young boy stands partially obscured by the building's edge, looking out. Next to him is another woman in a coat. The building behind them has a series of rectangular panels and a window with sheer curtains on the left side. The lighting in the photograph creates sharp shadows that highlight the textures and forms of the people and the building.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-31

This black-and-white photograph captures a group of four women standing outside a building with large windows. The scene appears to be from an earlier era, likely the mid-20th century, judging by the clothing styles and overall composition.

Central Figure: The woman in the center is the most prominent figure in the image. She is wearing a double-breasted coat, a hat, and knee-high socks with shoes. She stands confidently with her hands in her pockets and looks directly at the camera.

Left Figure: To the left, another woman stands with her hands clasped in front of her. She is dressed in a long coat and a hat, and she appears to be looking slightly away from the camera.

Right Figure: On the right, a third woman is partially visible. She is also wearing a long coat and a hat, and her posture suggests she is looking towards the central figure or the camera.

Window Figure: In the upper right corner, a fourth woman is leaning out of a window. She is also dressed in a coat and hat, and she looks down towards the other women.

The building's facade is plain with large windows, and the women's shadows are cast on the wall, indicating that the photograph was taken in daylight. The overall mood of the image is somewhat formal and composed, reflecting the attire and demeanor of the women.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-05

This is a black-and-white photograph that appears to be a street scene. The image shows four people, likely in a public setting given the building in the background, which has a classical architectural style with large windows and a decorative facade.

Foreground:

- On the left, a woman is standing with her hand in her coat pocket, looking to the side. She is wearing a long coat, gloves, and a hat, suggesting a cold day. Her posture is somewhat reserved and contemplative.

- In the center, a young girl stands leaning against a wall. She is wearing a long coat, shorts, and a hat, and she appears to be looking slightly away from the camera. The contrast in her attire, with the shorts and coat, contrasts with the formality of the setting.

- On the right, another woman is standing with her hands clasped in front of her. She is wearing a coat with a fur-lined hood and gloves, indicating cold weather. Her expression is neutral.

Background:

- A child is standing on a ledge high above the other people, looking down. The child appears to be wearing a coat and pants, and their posture is relaxed and casual compared to the others. The child's elevated position suggests a sense of curiosity or detachment from the scene below.

The overall mood of the photograph is contemplative and quiet, capturing a candid moment in what seems to be a public space, possibly during a cold day. The black-and-white format adds a timeless and classical feel to the image.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-05

This is a black-and-white photograph featuring a group of people in front of a building with a large window and a door. The central figure is a child wearing a hat, coat, and shorts, standing on a ledge with one leg propped up on a stone feature. The child has a serious expression and is looking directly at the camera. To the left, there is another child wearing a beret and a coat, standing with hands in their pockets, looking to the side. To the right, there is a person partially visible, wearing a coat and looking down. In the background, another child is standing on the ledge near the door, looking towards the camera. The photograph has a vintage feel, suggesting it was taken in an earlier time period. The image is framed with a white border.