Machine Generated Data

Tags

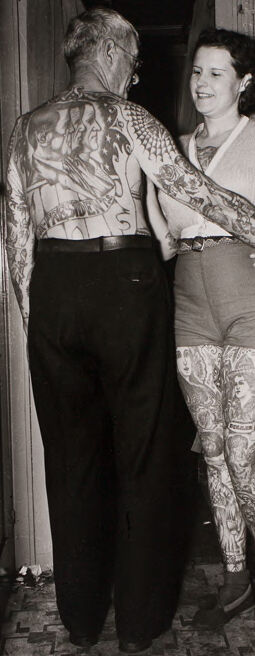

Amazon

created on 2022-01-08

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-08

| call | 29.2 |
| person | 25.4 |
| fashion | 24.9 |
| people | 22.3 |
| posing | 22.2 |
| adult | 20.7 |
| portrait | 19.4 |
| sexy | 19.3 |
| attractive | 17.5 |
| man | 17.5 |
| sensuality | 17.3 |
| model | 15.6 |
| street | 14.7 |
| outdoor | 14.5 |
| dress | 14.4 |
| elegance | 14.3 |
| lady | 13.8 |
| elegant | 13.7 |
| human | 13.5 |
| style | 13.3 |
| pretty | 13.3 |
| standing | 13 |
| summer | 12.9 |
| black | 12.8 |
| hair | 12.7 |
| prison | 12.5 |
| lifestyle | 12.3 |
| body | 12 |
| wall | 12 |
| device | 11.7 |
| city | 11.6 |
| vintage | 11.6 |
| cute | 11.5 |
| male | 11.4 |
| clothing | 11.1 |
| sensual | 10.9 |
| urban | 10.5 |
| old | 10.4 |
| correctional institution | 10 |
| elevator | 9.9 |
| one | 9.7 |
| door | 9.2 |
| dark | 9.2 |
| lovely | 8.9 |
| sun | 8.8 |
| happy | 8.8 |
| window | 8.8 |
| expression | 8.5 |
| youth | 8.5 |
| legs | 8.5 |
| leisure | 8.3 |
| makeup | 8.2 |
| outdoors | 8.2 |
| pose | 8.2 |
| looking | 8 |
| world | 8 |
| lifting device | 7.9 |
| couple | 7.8 |
| happiness | 7.8 |
| high | 7.8 |
| vogue | 7.7 |
| sexual | 7.7 |
| fashionable | 7.6 |
| suit | 7.6 |
| penal institution | 7.5 |
| sport | 7.4 |
| park | 7.4 |
| sunset | 7.2 |
| women | 7.1 |
| face | 7.1 |

Google

created on 2022-01-08

| Gesture | 85.3 |
| Smile | 84.4 |
| Sleeve | 83.2 |
| Flash photography | 81.8 |
| Monochrome photography | 71.6 |
| Vintage clothing | 71.4 |
| Chair | 69.7 |
| Event | 69 |
| Art | 67 |
| Monochrome | 66.1 |
| Rectangle | 65.9 |
| Room | 65 |
| Dance | 64.7 |
| Photographic paper | 58.3 |
| Fun | 57.4 |
| Visual arts | 53.9 |
| Retro style | 50.1 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Age | 22-30 |
| Gender | Female, 91% |
| Happy | 99.1% |
| Angry | 0.3% |
| Surprised | 0.2% |
| Disgusted | 0.1% |
| Confused | 0.1% |
| Sad | 0.1% |
| Fear | 0.1% |
| Calm | 0.1% |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very likely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| people portraits | 84.1% |
| paintings art | 7.8% |
| streetview architecture | 5.4% |
| nature landscape | 1.2% |

Captions

Microsoft

created on 2022-01-08

| a person standing in front of a door | 95.6% |
| a person standing in front of a door | 93.7% |
| a person standing in front of a window posing for the camera | 85% |

Text analysis

Amazon

ignore

ignora

equire