Machine Generated Data

Tags

Amazon

created on 2022-01-08

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-08

Google

created on 2022-01-08

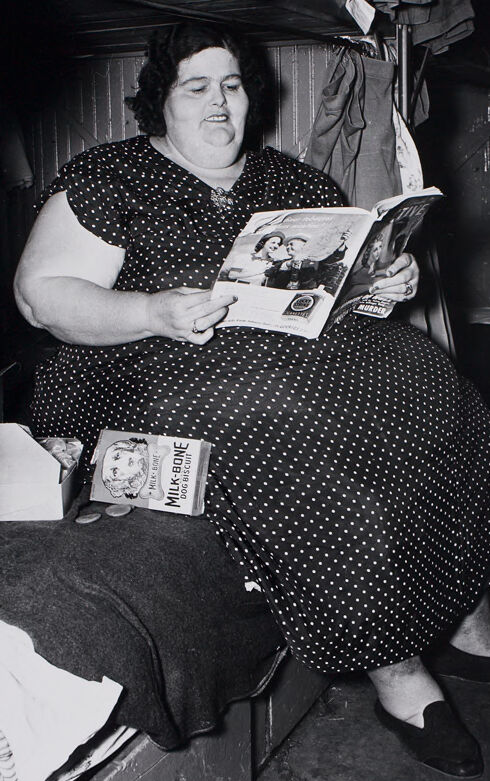

| Tag | Confidence |
|---|---|
| Black | 89.5 |
| Sleeve | 87.2 |
| Dress | 83.5 |
| Bag | 74.7 |
| Chair | 74.1 |
| Vintage clothing | 71.5 |
| Monochrome photography | 69.8 |
| Sitting | 69.7 |
| Pattern | 69 |
| Comfort | 66.7 |
| Room | 66.2 |
| Luggage and bags | 65.9 |
| Stock photography | 65.2 |
| Book | 65.2 |
| Office equipment | 64.4 |
| Lap | 63 |
| Font | 61.8 |
| Monochrome | 59.3 |
| Linens | 55.5 |
| Retro style | 54.8 |

Microsoft

created on 2022-01-08

| Tag | Confidence |
|---|---|
| text | 96.1 |
| clothing | 92.7 |
| person | 92.2 |
| black and white | 87.4 |
| human face | 86.1 |
| book | 58.4 |
| drawing | 50.2 |

Color Analysis

Face analysis


AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 41-49 |
| Gender | Male, 70.2% |
| Disgusted | 94.8% |
| Angry | 2.5% |
| Calm | 0.9% |
| Happy | 0.6% |
| Confused | 0.5% |
| Sad | 0.4% |
| Surprised | 0.2% |
| Fear | 0.2% |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 31 |
| Gender | Female |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Feature analysis

Amazon

Person

| Feature | Confidence |
|---|---|
| Person | 94.4% |

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 99.3% |

Captions

Microsoft

created on 2022-01-08

| Caption | Confidence |
|---|---|
| text | 27.8% |

Text analysis

Amazon

MILK-BONE

DOG

DOG BISCUIT

BISCUIT

MURDER

MILK

MILK BONE

BONE

igune

ligrer

MILK-BONE

MILK-BONE