Machine Generated Data

Tags

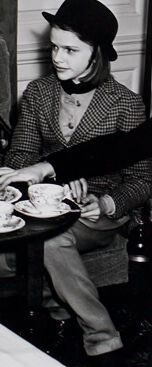

Amazon

created on 2022-01-08

| Tag | Confidence |
|---|---|
| Person | 98.9 |
| Human | 98.9 |
| Person | 98.5 |
| Meal | 98.4 |
| Food | 98.4 |
| Person | 98.4 |
| Restaurant | 98.2 |
| Person | 98.1 |
| Couch | 95.4 |
| Furniture | 95.4 |
| Person | 94.8 |
| Cafeteria | 87.7 |
| Dish | 84.5 |
| Living Room | 80.5 |
| Room | 80.5 |
| Indoors | 80.5 |
| Sitting | 79.7 |
| Table Lamp | 78.3 |
| Lamp | 78.3 |
| Shelf | 74.7 |
| Buffet | 73.7 |
| Food Court | 72.8 |
| Table | 70.6 |
| Home Decor | 69 |
| People | 64.5 |
| Face | 64.5 |
| Dining Table | 59.9 |
| Bookcase | 58.1 |

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-08

Google

created on 2022-01-08

| Tag | Confidence |
|---|---|
| Food | 93.2 |
| Table | 93.1 |
| Tableware | 89.9 |
| Chair | 88.6 |
| Coat | 87.2 |
| Picture frame | 86.6 |
| Plate | 84.4 |
| Black-and-white | 83.1 |
| Sharing | 82.2 |
| Window | 80.1 |
| Dishware | 77.2 |
| Event | 72.7 |
| Monochrome photography | 72 |
| Monochrome | 71 |
| Serveware | 70.7 |
| Room | 70.5 |
| Suit | 69.3 |
| Sitting | 68 |
| Vintage clothing | 66.1 |
| Cooking | 65.4 |

Color Analysis

Face analysis

Amazon

Microsoft

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 6-16 |
| Gender | Male, 99.3% |
| Calm | 83.7% |
| Sad | 9.5% |
| Fear | 2.6% |
| Confused | 2.2% |
| Angry | 0.9% |
| Disgusted | 0.5% |
| Surprised | 0.3% |
| Happy | 0.3% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 9-17 |
| Gender | Female, 100% |
| Calm | 87.7% |
| Happy | 7.3% |
| Sad | 1.3% |
| Angry | 1.1% |
| Surprised | 1.1% |
| Confused | 0.6% |
| Disgusted | 0.6% |
| Fear | 0.4% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 18-26 |
| Gender | Female, 93.5% |
| Calm | 50.6% |
| Sad | 37.2% |
| Confused | 5.7% |
| Fear | 2.2% |
| Surprised | 1.9% |
| Angry | 1% |
| Disgusted | 0.9% |
| Happy | 0.5% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 12-20 |
| Gender | Female, 100% |
| Happy | 69% |
| Calm | 11.9% |
| Angry | 9.5% |
| Surprised | 3.4% |
| Sad | 2.1% |
| Confused | 1.5% |
| Disgusted | 1.4% |
| Fear | 1.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 16-22 |
| Gender | Female, 98.1% |
| Calm | 92.2% |
| Sad | 5.5% |
| Confused | 0.7% |
| Surprised | 0.5% |
| Disgusted | 0.4% |
| Angry | 0.3% |
| Fear | 0.3% |
| Happy | 0.2% |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 32 |
| Gender | Female |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 29 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 12 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 20 |
| Gender | Female |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 19 |
| Gender | Female |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Possible |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| interior objects | 88% |
| people portraits | 9.2% |
| pets animals | 1.7% |

Captions

Microsoft

created on 2022-01-08

| Caption | Confidence |
|---|---|
| a group of people sitting around a living room | 98.6% |
| a group of people sitting in a living room | 98.3% |
| a group of people sitting at a table in a living room | 97.7% |