Machine Generated Data

Tags

Amazon

created on 2022-01-08

| Furniture | 100 |
| Person | 98 |
| Human | 98 |
| Person | 97.8 |
| Person | 97.6 |
| Living Room | 95.5 |
| Room | 95.5 |
| Indoors | 95.5 |
| Interior Design | 91.3 |
| Couch | 87.6 |
| Chair | 83.9 |
| Clock Tower | 77.6 |
| Building | 77.6 |
| Architecture | 77.6 |
| Tower | 77.6 |
| Person | 76.5 |
| Armchair | 73.1 |
| Bed | 58.8 |
| Lamp | 57.1 |

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-08

| man | 21.5 |
| person | 20.8 |
| salon | 18 |
| room | 18 |
| chair | 16.9 |
| home | 15.9 |
| adult | 15.7 |
| people | 15.6 |
| interior | 14.1 |
| black | 14 |
| men | 13.7 |
| work | 13.6 |
| male | 13.5 |
| house | 13.4 |
| indoors | 13.2 |
| shop | 12.6 |
| barbershop | 12 |
| device | 11.1 |
| equipment | 11 |
| patient | 10.9 |
| window | 10.1 |
| furniture | 9.4 |
| lifestyle | 9.4 |
| electric chair | 9.2 |
| modern | 9.1 |
| instrument | 9.1 |
| light | 8.7 |
| smiling | 8.7 |
| sitting | 8.6 |
| seat | 8.4 |
| portrait | 8.4 |
| happy | 8.1 |
| decoration | 8.1 |
| looking | 8 |
| to | 8 |
| working | 8 |
| business | 7.9 |
| mercantile establishment | 7.7 |
| instrument of execution | 7.7 |
| fun | 7.5 |
| one | 7.5 |
| case | 7.4 |
| vintage | 7.4 |
| office | 7.2 |
| women | 7.1 |
| job | 7.1 |

Google

created on 2022-01-08

| Chair | 86.9 |
| Art | 77.4 |
| Vintage clothing | 74.8 |
| Sitting | 68.8 |
| Room | 66.9 |
| Picture frame | 66.7 |
| Illustration | 64.1 |
| Stock photography | 63.4 |
| Classic | 60.6 |
| Lamp | 59.2 |
| History | 57.3 |
| Monochrome | 56.5 |
| Retro style | 55.6 |
| Vintage advertisement | 52.7 |
| Machine | 51.8 |
| Visual arts | 51.1 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Age | 35-43 |

| Gender | Female, 99.9% |

| Happy | 93.2% |

| Calm | 2.9% |

| Sad | 1.6% |

| Disgusted | 0.7% |

| Confused | 0.6% |

| Fear | 0.4% |

| Surprised | 0.3% |

| Angry | 0.3% |

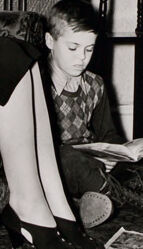

AWS Rekognition

| Age | 6-12 |

| Gender | Male, 100% |

| Sad | 70.8% |

| Calm | 11.9% |

| Confused | 6.3% |

| Disgusted | 4.1% |

| Angry | 2.5% |

| Fear | 1.9% |

| Surprised | 1.9% |

| Happy | 0.5% |

AWS Rekognition

| Age | 51-59 |

| Gender | Male, 99.8% |

| Calm | 74.4% |

| Sad | 10.4% |

| Confused | 5.2% |

| Angry | 3.5% |

| Disgusted | 2.2% |

| Fear | 2% |

| Surprised | 2% |

| Happy | 0.4% |

AWS Rekognition

| Age | 13-21 |

| Gender | Male, 99.5% |

| Disgusted | 87.1% |

| Calm | 4.6% |

| Confused | 3.9% |

| Sad | 2% |

| Angry | 1.1% |

| Surprised | 0.7% |

| Happy | 0.3% |

| Fear | 0.2% |

AWS Rekognition

| Age | 4-12 |

| Gender | Female, 75.6% |

| Happy | 96.7% |

| Disgusted | 0.9% |

| Calm | 0.9% |

| Sad | 0.7% |

| Confused | 0.4% |

| Angry | 0.2% |

| Surprised | 0.1% |

| Fear | 0.1% |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Possible |

Google Vision

| Surprise | Very unlikely |

| Anger | Very unlikely |

| Sorrow | Very unlikely |

| Joy | Very unlikely |

| Headwear | Very unlikely |

| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| paintings art | 88.3% |
| streetview architecture | 6.2% |
| interior objects | 3.9% |

Captions

Microsoft

created on 2022-01-08

| a person sitting in a living room | 80.2% |
| a person sitting in a room | 80.1% |
| a person sitting in a living room | 80% |

Text analysis

Amazon

74

Joe

230

1940

Sarasota,

41.5%

Florida

Steinmetz

Steinmetz Studio

Studio

Sarasota, Florida Pennsylvania

Pennsylvania

10

74

41.57%

Joe 9. Steinmetz Studio

Sarasota, Florida nes

1940

230

74

41.57%

Joe

9.

Steinmetz

Studio

Sarasota,

Florida

nes

1940

230