Machine Generated Data

Tags

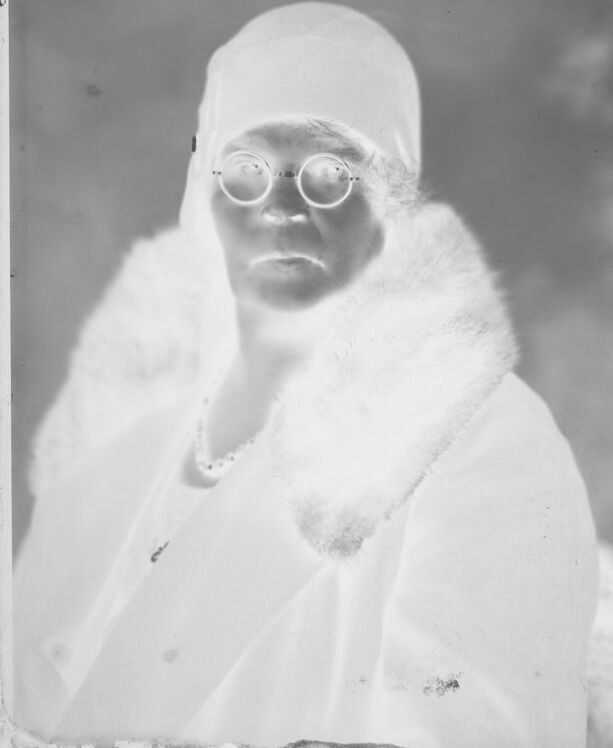

Amazon

created on 2021-12-14

Clarifai

created on 2023-10-15

| Tag | Confidence |
| --- | --- |
| people | 99.6 |
| portrait | 99.5 |
| eyewear | 98.8 |
| one | 98 |
| adult | 97.8 |
| man | 97.1 |
| wear | 94.2 |
| veil | 94.1 |
| scientist | 93.5 |
| monochrome | 92.6 |
| leader | 91.8 |
| eyeglasses | 85.7 |
| facial hair | 84.3 |
| facial expression | 83.2 |
| old | 82.3 |
| outerwear | 81.5 |
| lid | 80.9 |
| administration | 79.5 |
| profile | 79.3 |
| science | 78.6 |

Imagga

created on 2021-12-14

| Tag | Confidence |
| --- | --- |
| negative | 68.9 |
| mask | 63.6 |
| film | 53.5 |
| covering | 43.5 |
| photographic paper | 41.3 |
| disguise | 35.5 |
| face | 34.8 |
| portrait | 33.6 |
| attire | 31.4 |
| clothing | 28.3 |
| photographic equipment | 27.5 |
| person | 25.8 |
| adult | 22 |
| people | 21.7 |
| man | 19.5 |
| attractive | 18.9 |
| ruler | 18 |
| pretty | 16.8 |
| care | 16.5 |
| male | 16.3 |
| lady | 16.2 |
| hat | 15.5 |
| eyes | 15.5 |
| sexy | 15.3 |
| skin | 15.2 |
| fun | 15 |
| winter | 14.5 |
| looking | 14.4 |
| cosmetics | 14 |
| fashion | 13.6 |
| model | 13.2 |
| happy | 13.2 |
| expression | 12.8 |
| human | 12.7 |
| hair | 12.7 |
| health | 12.5 |
| coat | 12.3 |
| smile | 12.1 |
| cold | 12 |
| costume | 11.9 |
| makeup | 11.9 |
| old | 11.8 |
| black | 11.4 |
| one | 11.2 |
| blond | 11.1 |
| elegance | 10.9 |
| cute | 10.8 |
| celebration | 10.4 |
| head | 10.1 |
| consumer goods | 10 |
| wig | 9.9 |
| spa | 9.9 |
| medicine | 9.7 |
| women | 9.5 |
| healthy | 9.4 |
| holiday | 9.3 |
| snow | 9.2 |
| joy | 9.2 |
| close | 9.1 |
| make | 9.1 |
| dress | 9 |
| medical | 8.8 |
| beard | 8.8 |
| body | 8.8 |
| fur coat | 8.7 |
| gorgeous | 8.2 |
| smiling | 8 |
| lifestyle | 7.9 |
| seasonal | 7.9 |
| happiness | 7.8 |
| serious | 7.6 |
| hairstyle | 7.6 |
| studio | 7.6 |
| clean | 7.5 |
| art | 7.5 |
| handsome | 7.1 |

Google

created on 2021-12-14

| Tag | Confidence |
| --- | --- |
| Glasses | 96.8 |
| Vision care | 93.9 |
| Goggles | 92.3 |
| Sunglasses | 91.5 |
| Eyewear | 88.9 |
| Sleeve | 87.2 |
| Rectangle | 82.4 |
| Art | 81.4 |
| Tints and shades | 75.3 |
| Monochrome photography | 71.4 |
| Monochrome | 70.4 |
| Vintage clothing | 69.9 |
| Room | 67.8 |
| Stock photography | 62.4 |
| Portrait | 60.4 |
| Photographic paper | 58.5 |
| Visual arts | 57.2 |
| Portrait photography | 55.9 |
| Retro style | 51.4 |

Microsoft

created on 2021-12-14

| Tag | Confidence |
| --- | --- |
| text | 99.6 |
| human face | 98.3 |
| person | 90.7 |
| black and white | 90 |
| smile | 88.4 |
| clothing | 88 |
| old | 86.8 |
| glasses | 85.6 |
| portrait | 84.2 |
| white | 79.2 |
| sunglasses | 74.1 |
| black | 67.5 |
| posing | 63 |
| woman | 59.5 |
| fashion accessory | 53 |
| picture frame | 39.1 |
| vintage | 35.2 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 27-43 |
| Gender | Male, 96.8% |
| Calm | 98.5% |
| Fear | 0.7% |
| Happy | 0.4% |
| Surprised | 0.2% |
| Angry | 0.1% |
| Sad | 0.1% |
| Confused | 0.1% |
| Disgusted | 0% |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 99.5% |

Captions

Microsoft

created on 2021-12-14

| Caption | Confidence |
| --- | --- |
| a vintage photo of a man | 93.1% |
| an old photo of a man | 92.9% |
| old photo of a man | 91.5% |

Text analysis

Amazon

a

63

IT

a aliga

him

him fahris

fahris

aliga