Machine Generated Data

Tags

Amazon

created on 2021-12-14

Clarifai

created on 2023-10-25

Imagga

created on 2021-12-14

| bride | 35.6 |
| dress | 33.4 |
| groom | 32.8 |
| person | 31.8 |
| portrait | 29.8 |
| wedding | 28.5 |
| people | 26.2 |
| adult | 24.6 |
| love | 24.5 |
| attractive | 21.7 |
| fashion | 21.1 |
| happiness | 20.4 |
| pretty | 18.9 |
| veil | 18.6 |
| clothing | 18.1 |
| married | 17.3 |
| hair | 16.6 |
| negative | 16.6 |
| human | 16.5 |
| face | 16.3 |
| happy | 15.7 |
| bouquet | 15.3 |
| smile | 15 |
| gown | 14.8 |
| model | 14.8 |
| cute | 13.6 |
| bridal | 13.6 |
| black | 13.2 |
| flowers | 13 |
| lifestyle | 13 |
| sexy | 12.9 |
| film | 12.7 |
| marriage | 12.3 |
| couple | 12.2 |
| skin | 11.9 |
| wed | 11.8 |
| life | 11.8 |
| posing | 11.6 |
| wife | 11.4 |
| one | 11.2 |
| elegance | 10.9 |
| sensuality | 10.9 |
| smiling | 10.9 |
| man | 10.8 |
| male | 10.6 |
| lady | 10.6 |
| eyes | 10.3 |
| women | 10.3 |
| day | 10.2 |
| joy | 10 |
| studio | 9.9 |
| photographic paper | 9.8 |
| cheerful | 9.8 |
| tenderness | 9.7 |
| celebration | 9.6 |
| blond | 9.4 |
| romance | 8.9 |
| seductive | 8.6 |
| men | 8.6 |
| jacket | 8.4 |
| sensual | 8.2 |
| book jacket | 8.1 |
| cap | 8.1 |
| romantic | 8 |
| family | 8 |
| looking | 8 |
| shower cap | 8 |
| engaged | 7.9 |
| ceremony | 7.8 |
| engagement | 7.7 |
| expression | 7.7 |
| professional | 7.7 |
| outdoor | 7.6 |
| females | 7.6 |
| fun | 7.5 |
| future | 7.4 |
| pure | 7.4 |
| event | 7.4 |
| patient | 7.3 |
| girls | 7.3 |
| gorgeous | 7.3 |
| pose | 7.2 |
| summer | 7.1 |
| husband | 7.1 |
| modern | 7 |

Google

created on 2021-12-14

| Art | 81.6 |
| Vintage clothing | 74.4 |
| Room | 65.2 |
| Monochrome | 64.8 |
| Monochrome photography | 64.2 |
| Stock photography | 63.9 |
| Sitting | 63.7 |
| Visual arts | 63 |
| Painting | 62.8 |
| Advertising | 60.2 |
| Illustration | 60.1 |
| History | 58.8 |
| Portrait | 58.5 |
| Photographic paper | 57.9 |
| Retro style | 52.4 |
| Portrait photography | 50.8 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

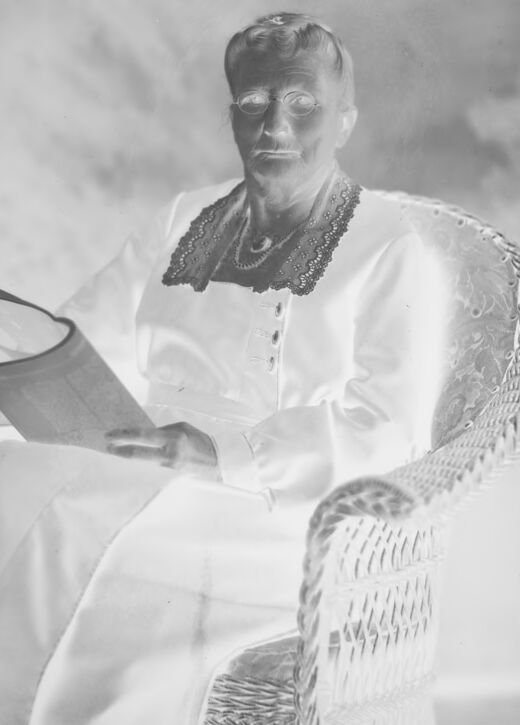

| Age | 37-55 |
| Gender | Male, 94.7% |
| Calm | 93.8% |
| Happy | 2.6% |
| Sad | 2.3% |
| Fear | 0.7% |
| Surprised | 0.3% |
| Confused | 0.2% |
| Angry | 0.1% |
| Disgusted | 0.1% |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Amazon

Person

| Person | 97.4% |

Categories

Imagga

| paintings art | 100% |

Captions

Microsoft

created on 2021-12-14

| a vintage photo of a man | 90.2% |
| an old photo of a man | 89.8% |
| old photo of a man | 88.1% |

Text analysis

777771

777771