Machine Generated Data

Tags

Amazon

created on 2021-12-14

| Person | 99.2 |
| Human | 99.2 |
| Person | 98.5 |
| Person | 95.2 |
| Person | 94.2 |
| Person | 86.8 |
| Person | 86.3 |
| Person | 85.5 |
| Mansion | 84.7 |
| House | 84.7 |
| Housing | 84.7 |
| Building | 84.7 |
| Person | 83.9 |
| Person | 83.4 |
| Path | 83.1 |
| Person | 82.5 |
| Person | 81.3 |
| Person | 80.5 |
| Person | 79.6 |
| People | 77.5 |
| Poster | 76.4 |
| Advertisement | 76.4 |
| Person | 74.6 |
| Steamer | 69.4 |
| Person | 67.8 |
| Pedestrian | 65.4 |
| Person | 65 |
| Person | 64.3 |
| Crowd | 62.5 |
| Urban | 59.6 |
| Person | 59.5 |
| Drawing | 56.7 |
| Art | 56.7 |

Clarifai

created on 2023-10-25

Imagga

created on 2021-12-14

| snow | 42.7 |
| city | 29.1 |
| negative | 27 |
| winter | 24.7 |
| building | 22.6 |
| film | 21.7 |
| architecture | 21.2 |
| old | 20.2 |
| landscape | 17.8 |
| house | 17.5 |
| sky | 16.6 |
| graffito | 16.4 |
| travel | 16.2 |
| weather | 16.2 |
| drawing | 15.7 |
| urban | 15.7 |
| structure | 15.6 |
| cold | 15.5 |
| construction | 15.4 |
| tree | 15.4 |
| photographic paper | 15.3 |
| sketch | 15.2 |
| street | 14.7 |
| fence | 14.7 |
| trees | 14.2 |
| road | 12.6 |
| transportation | 11.7 |
| park | 11.6 |
| wall | 11.6 |
| history | 11.6 |
| vintage | 11.6 |
| river | 11.6 |
| scene | 11.3 |
| window | 11.2 |
| decoration | 11 |
| transport | 11 |
| historical | 10.3 |
| town | 10.2 |
| photographic equipment | 10.2 |
| season | 10.1 |
| frame | 10 |
| black | 9.6 |
| grunge | 9.4 |
| light | 9.4 |
| daily | 9.1 |
| dirty | 9 |
| outdoors | 9 |
| rural | 8.8 |
| forest | 8.7 |
| water | 8.7 |
| day | 8.6 |
| picket fence | 8.6 |
| ice | 8.5 |
| outdoor | 8.4 |
| exterior | 8.3 |
| tourism | 8.2 |
| tower | 8.1 |
| night | 8 |
| antique | 7.8 |
| space | 7.8 |
| outside | 7.7 |
| industry | 7.7 |
| frozen | 7.6 |
| representation | 7.6 |
| pattern | 7.5 |
| industrial | 7.3 |
| sea | 7 |

Google

created on 2021-12-14

| Building | 87.1 |
| Art | 81.2 |
| Font | 77.9 |
| Tints and shades | 76 |
| Picture frame | 73.8 |
| Monochrome photography | 73.5 |
| Monochrome | 70.5 |
| Window | 69 |
| History | 68.8 |
| Rectangle | 68.5 |
| Room | 68 |
| Visual arts | 66.7 |
| Paper product | 66.1 |
| Stock photography | 63 |
| Illustration | 62.8 |
| Plant | 59.8 |
| Photographic paper | 59.2 |
| Facade | 58.9 |
| Vintage clothing | 56.6 |
| Collection | 53.2 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Age | 51-69 |

| Gender | Male, 59.9% |

| Calm | 98.2% |

| Happy | 1% |

| Disgusted | 0.3% |

| Sad | 0.2% |

| Angry | 0.2% |

| Surprised | 0.1% |

| Confused | 0% |

| Fear | 0% |

AWS Rekognition

| Age | 46-64 |

| Gender | Female, 70.2% |

| Calm | 60.4% |

| Sad | 36% |

| Angry | 1.9% |

| Confused | 0.8% |

| Happy | 0.5% |

| Surprised | 0.2% |

| Fear | 0.1% |

| Disgusted | 0.1% |

AWS Rekognition

| Age | 37-55 |

| Gender | Female, 52% |

| Calm | 54% |

| Sad | 10.7% |

| Happy | 9.6% |

| Confused | 8.5% |

| Angry | 8.3% |

| Disgusted | 5.9% |

| Fear | 1.6% |

| Surprised | 1.3% |

AWS Rekognition

| Age | 27-43 |

| Gender | Male, 66.6% |

| Calm | 94.6% |

| Surprised | 1.2% |

| Sad | 1% |

| Happy | 0.9% |

| Disgusted | 0.8% |

| Angry | 0.8% |

| Confused | 0.6% |

| Fear | 0.1% |

AWS Rekognition

| Age | 50-68 |

| Gender | Male, 51.7% |

| Calm | 86.7% |

| Sad | 9.4% |

| Happy | 1.4% |

| Angry | 1.2% |

| Confused | 0.5% |

| Disgusted | 0.4% |

| Fear | 0.3% |

| Surprised | 0.2% |

AWS Rekognition

| Age | 50-68 |

| Gender | Male, 55.9% |

| Happy | 34.6% |

| Calm | 26% |

| Fear | 21% |

| Angry | 6.2% |

| Sad | 5.1% |

| Surprised | 4.2% |

| Confused | 2% |

| Disgusted | 0.9% |

AWS Rekognition

| Age | 26-42 |

| Gender | Female, 73.5% |

| Happy | 56.2% |

| Calm | 21.6% |

| Sad | 11.1% |

| Angry | 6.4% |

| Fear | 2.5% |

| Surprised | 1% |

| Confused | 0.8% |

| Disgusted | 0.4% |

Feature analysis

Categories

Imagga

| text visuals | 42.9% |
| paintings art | 23.4% |
| interior objects | 19.5% |
| cars vehicles | 6% |
| streetview architecture | 4.5% |
| food drinks | 2.3% |

Captions

Microsoft

created by unknown on 2021-12-14

| a vintage photo of an old building | 83.5% |
| a vintage photo of a building | 83.4% |
| a vintage photo of a person | 77.3% |

Clarifai

created by general-english-image-caption-blip on 2025-05-04

| a photograph of a group of people in white dresses and white dresses | -100% |

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-04

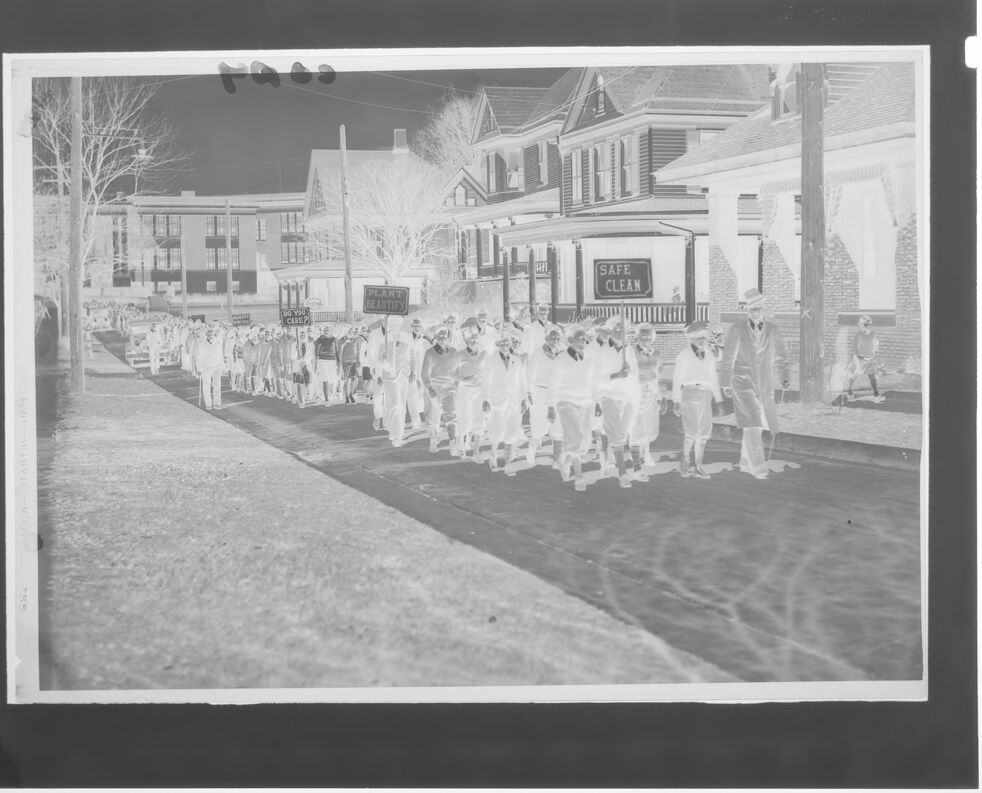

Here's a description of the image:

This is a black and white, negative image. It depicts a street scene with a large group of people marching down the road. They are walking on the asphalt, with a long shadow to the left. The group includes a few people wearing hats.

Along the side of the road, there are houses. One house has a sign that says "SAFE CLEAN" above its porch. Other signs in the crowd's hands are visible, including "DO YOU CARE?" and "PLANT BEAUTIFY". Bare trees are visible in the background. There is a car parked along the side of the road behind the marchers.

Created by gemini-2.0-flash on 2025-05-04

The image shows a grayscale photograph of a large group of people marching down a street lined with buildings. The photograph is slightly overexposed, giving it a bright, somewhat washed-out appearance.

The group is comprised of dozens of people, most of whom appear to be wearing light-colored shirts or uniforms. Some individuals carry signs, though the text on them is difficult to read due to the image quality. Possible messages include phrases like "PLANT & BEAUTIFY" and "DO YOU CARE?".

The street is bordered by buildings on both sides, ranging from smaller houses to larger, multi-story structures. One building has a sign that reads "SAFE CLEAN". Bare trees are visible, suggesting it could be late fall or winter.

In the background, some parked vehicles can be seen, and a few individuals appear to be observing the march. The overall impression is of a community event or demonstration of some sort.

Text analysis

Amazon