Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 23-37 |
| Gender | Female, 85% |
| Happy | 47.4% |
| Calm | 21.4% |
| Sad | 16% |
| Angry | 6.4% |
| Surprised | 3.4% |
| Fear | 2.1% |
| Confused | 1.8% |
| Disgusted | 1.5% |

Feature analysis

AWS Rekognition

| Feature | Confidence |
| --- | --- |
| Person | 99% |

Categories

Imagga

Created on 2021-12-14

| Category | Confidence |
| --- | --- |
| paintings art | 59.4% |
| people portraits | 29.9% |
| interior objects | 8% |
| text visuals | 1.1% |

Captions

Microsoft

Created by unknown on 2021-12-14

| Caption | Confidence |
| --- | --- |
| a group of people posing for a photo | 89.3% |
| a group of people posing for the camera | 89.2% |
| a group of people posing for a picture | 89.1% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-12

the funeral of person, the first black person to be elected president.

Salesforce

Created by general-english-image-caption-blip on 2025-05-19

a photograph of a group of people standing around a table

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-08

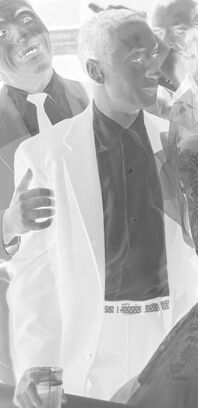

The image shows a group of individuals gathered in what appears to be a social or celebratory event, with several people dressed formally. The setting includes visible decorations, such as flowers, and signage featuring a "Miller High Life" logo, suggesting a party or reception. There is a table covered with a cloth in the foreground, and the atmosphere indicates a festive occasion.

Created by gpt-4o-2024-08-06 on 2025-06-08

The image depicts a group of people gathered together in what appears to be a social setting, possibly a party or event. Several individuals are standing near each other, dressed in formal attire, with some holding drinks in their hands. The atmospheric details suggest a lively and engaging environment, with the presence of decorative elements and the display of social activities around them. The visible elements in the environment include signs and decorations, one of which is a sign for "Miller High Life," indicating a possible theme or sponsorship. The image appears to be taken indoors, with a backdrop that might be a bar or gathering space, contributing to a congenial ambiance.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-13

The image appears to depict a group of people gathered at what looks like a social event or celebration. The individuals are dressed in formal attire, suggesting it may be a wedding or similar formal occasion. The group is gathered around a table, with some holding drinks, and they seem to be engaged in conversation and laughter. The image has a vintage or black-and-white aesthetic, giving it a nostalgic feel.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-13

This appears to be a vintage black and white photograph taken in what looks like a bar or restaurant setting. There's a Miller High Life sign visible in the background. A group of people are lined up together, all appearing to be in good spirits and smiling. Most are wearing light-colored shirts or clothing. The lighting in the photo creates a bright, overexposed effect, particularly from what seems to be a window behind the group. In the foreground, there appears to be some kind of dark-colored surface, possibly a table or counter. The overall atmosphere of the photo suggests this was a social gathering or celebration of some kind.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-25

The image depicts a black-and-white photograph of a group of people gathered around a table, with the majority holding glasses. The individuals are dressed in formal attire, consisting of suits and dresses, suggesting a celebratory or special occasion.

In the foreground, a table covered with a dark cloth is prominently displayed, while the background features various signs and advertisements, including one for Miller High Life beer. The overall atmosphere of the image exudes joy and festivity, as the people appear to be enjoying themselves in a lively setting.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-25

The image depicts a group of people gathered at a bar, with a man in the foreground holding a drink and smiling. The scene is set in a dimly lit room, with the group standing around a long table covered with a black cloth. The atmosphere appears to be lively and celebratory, with the group's smiles and laughter suggesting a joyful occasion.

- A group of people are gathered at a bar.

- The group consists of approximately 15 individuals, all dressed in formal attire.

- They are standing around a long table covered with a black cloth.

- The atmosphere appears to be lively and celebratory.

- A man in the foreground is holding a drink and smiling.

- He is wearing a dark suit and tie.

- He has short white hair and is holding a glass of beer or other beverage.

- His smile suggests that he is enjoying himself.

- The room is dimly lit, with a few lights visible in the background.

- The walls are adorned with various decorations, including a large clock and a sign that reads "Miller High Life".

- The overall ambiance is relaxed and convivial.

In summary, the image captures a moment of joy and camaraderie among a group of friends or acquaintances at a bar. The man in the foreground adds a sense of personality to the scene, while the dimly lit room and formal attire suggest a more subdued and sophisticated atmosphere.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-05-29

The image is a black-and-white photograph of a group of people gathered together in what appears to be a social setting, possibly a party or formal event. The photograph is taken from a low angle, giving the impression that the viewer is looking up at the people. The individuals are dressed in formal attire, with some men wearing suits and ties and some women wearing dresses. They are standing in a line, facing the camera, and appear to be smiling and posing for the photograph. The background of the image is blurry, with a sign visible on the wall that reads "Miller High Life."

Created by amazon.nova-pro-v1:0 on 2025-05-29

The image is a black-and-white photograph of a group of people gathered in a room. They are standing together, and some are holding glasses. The man in the middle is wearing a white suit, while the woman next to him is wearing a dress. They are all smiling and seem to be posing for the camera. There is a table with a black cover in front of them.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-04-29

Here's a description of the image:

Overall Impression: The image appears to be a candid shot of a group of people at a gathering or party. The photograph has a unique look because it appears to be a negative image. The figures are clustered together, and the atmosphere seems jovial.

Composition:

- Group of People: The main focus is a group of individuals, all looking at the camera and smiling.

- Line of Figures: The people are mostly lined up, suggesting they may be waiting for something (like a table or a performance).

- Environment: It appears to be an indoor setting, possibly a bar or a similar establishment. A Miller High Life sign is on the right.

Notable Details:

- Expressions: Most people have broad smiles on their faces, conveying a sense of enjoyment.

- Dress: The people are dressed in suits and cocktail dresses, suggesting a formal occasion.

Style & Quality:

- Negative Image: The most striking feature is the negative format, which gives the image a surreal and somewhat eerie quality.

- Monochrome: The image is entirely in black and white, emphasizing the shapes and textures.

In summary: This image captures a moment of festivity. The negative format and composition create a unique visual experience.

Created by gemini-2.0-flash on 2025-04-29

Here is a description of the image:

The image is a black-and-white photograph depicting a group of formally dressed people gathered indoors, possibly at a social event or gathering. They stand behind a long table draped with a dark cloth. Most of the individuals are holding glasses, suggesting they are toasting or celebrating.

The men in the group are wearing suits or jackets, and one woman is visible in an elegant dress. All of them appear to be smiling or in a celebratory mood. A prominent "Miller High Life" advertisement is visible in the background.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-25

The image is a black-and-white photograph depicting a group of people at what appears to be a formal event or gathering. The individuals are dressed in formal attire, with the men wearing suits and ties and the women in elegant dresses. They are standing close together, smiling and holding drinks in their hands, suggesting a celebratory atmosphere.

In the background, there is a table with a dark tablecloth, and some decorative elements are visible, including what looks like a Miller High Life beer advertisement. The setting appears to be indoors, possibly in a banquet hall or a similar venue. The overall mood of the photograph is joyful and festive, capturing a moment of camaraderie and celebration among the group.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-09

The image appears to be a black and white photograph of a group of people gathered in a social setting, possibly a party or event. The individuals are dressed in formal attire, with men wearing suits and women in elegant dresses. The group is standing closely together, looking towards the camera with expressions ranging from smiles to neutral looks. In the foreground, there is a table covered with a black cloth, and some objects, possibly glasses and a bottle, are placed on it. The background shows a room with posters, a sign for "Miller High Life," and other decorations that suggest it might be a festive or celebratory event. The lighting is bright, and the overall atmosphere seems lively and social.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-09

This black-and-white image appears to be of a group of people in formal attire gathered around a table. The individuals are smudged and overlapping, creating a ghostly or double-exposure effect. They seem to be in a festive or celebratory mood, as some are holding drinks and others are smiling. The background shows a room with various posters or signs on the wall, and there is a "Miller High Life" sign visible on the right side of the image. The overall effect gives the impression of a lively party or social gathering.