Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Estimate |
|---|---|
| Age | 23-33 |
| Gender | Female, 83.7% |
| Sad | 90% |
| Calm | 6.6% |
| Angry | 0.9% |
| Confused | 0.8% |
| Happy | 0.6% |
| Disgusted | 0.4% |
| Fear | 0.3% |
| Surprised | 0.3% |

Feature analysis

Amazon

AWS Rekognition

| Feature | Confidence |
|---|---|
| Person | 99.3% |

Categories

Imagga

Created on 2022-01-23

| Category | Score |
|---|---|
| paintings art | 100% |

Captions

Microsoft

Created by unknown on 2022-01-23

| Caption | Confidence |
|---|---|
| a group of people posing for a photo | 78.6% |
| a group of people posing for the camera | 78.5% |
| a group of people posing for a picture | 78.4% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-13

actor in a hospital bed.

Salesforce

Created by general-english-image-caption-blip on 2025-05-17

a photograph of a group of nurses in white uniforms are standing around a table

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-16

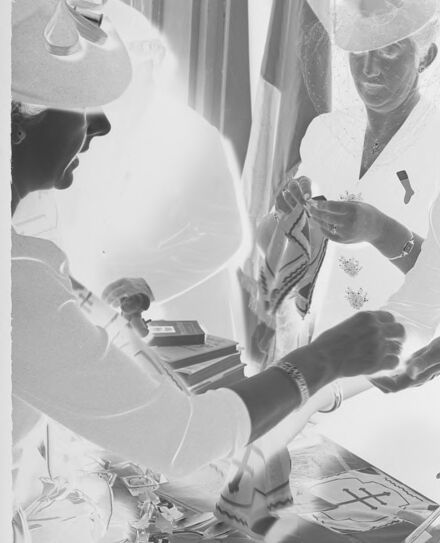

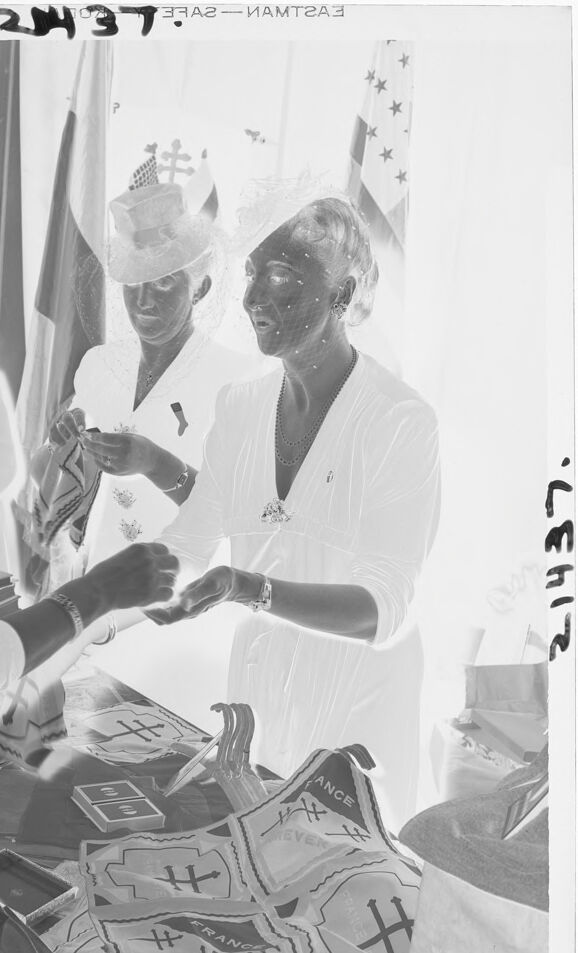

The image appears to show a group of women gathered at a table, engaging in a transaction or exchange. They are dressed formally, wearing hats and decorative clothing, with some jewelry visible. The table is covered with items, including fabric pieces with patterns and text, potentially banners or patches, as well as books and other objects. Flags are visible in the background, hinting at a formal or patriotic event. The reversed colors suggest that the image is a photographic negative.

Created by gpt-4o-2024-08-06 on 2025-06-16

The image appears to show several people gathered around a table that has various cloth items with the word "FRANCE" and symbols on them. The setting seems formal, as evidenced by people wearing hats and suits. Flags are visible in the background, suggesting an event or occasion possibly related to international or cultural significance. One individual seems to be gesturing or exchanging items with another, indicating interaction or transaction. The overall image is presented in a negative format, where the colors are inverted.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-16

The image appears to depict a ceremonial or religious event. It shows several individuals wearing ceremonial attire, including hats and robes adorned with symbols and insignia. The individuals appear to be engaged in some kind of ritual or ceremony, with various objects and paraphernalia visible in the scene. The overall atmosphere suggests a solemn and formal occasion.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-16

This appears to be a vintage black and white photograph showing several people dressed in white uniforms or clothing gathered around what looks like a table or counter. They appear to be handling or sorting through various items, possibly papers or documents with some kind of cross symbols visible on them. The image has a somewhat overexposed or bright quality typical of older photographs. The setting appears to be indoors, possibly in some kind of institutional or medical setting given the white attire and formal appearance of the scene.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-31

The image is a black-and-white photograph of three women standing behind a table, with one woman handing a bag to another.

- The woman on the left is wearing a hat and a white dress. She is facing the other two women and handing a bag to the woman in the middle.

- The woman in the middle is wearing a white dress and a hat. She is facing the woman on the left and receiving the bag.

- The woman on the right is wearing a white dress and a hat. She is facing the woman in the middle and appears to be looking at the bag being handed over.

- The table in front of the women has several bags on it, as well as some other items that are not clearly visible.

- In the background, there are several flags hanging on the wall, including what appears to be an American flag and a French flag.

- The overall atmosphere of the image suggests that the women are participating in some kind of event or ceremony, possibly related to their nationalities or cultural heritage.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-31

The image is a black-and-white photograph of three women in white dresses and hats, standing behind a table with various items on it. The woman on the left is handing something to the woman in the middle, who is wearing a hat with a veil. The woman on the right is looking at the woman in the middle.

The table has several items on it, including what appears to be a stack of papers or cards, a small box, and a few other objects that are not clearly visible. In the background, there are some flags or banners hanging from the ceiling, but they are not clearly visible due to the lighting and the angle of the photo.

Overall, the image suggests that the women are participating in some kind of event or activity, possibly related to a charity or fundraising effort. The presence of flags and banners in the background adds to this interpretation, as they are often used to decorate event spaces and create a festive atmosphere.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-05-30

The image is a black-and-white photograph of three women standing around a table, possibly engaged in an activity related to a political campaign. The woman on the left is wearing a hat and appears to be holding a small object, possibly a campaign button or a donation envelope. The woman in the middle is wearing a white dress and is holding a piece of paper, possibly a campaign flyer or a voter registration form. The woman on the right is wearing a hat and is holding a bag, possibly containing campaign materials or donations.

The table in front of them is covered with a white cloth and has various items on it, including a small box, a piece of paper, and a bag. Behind them, there are two flags, one of which is the American flag. The image has a slightly blurry effect, which may be due to the age of the photograph or the quality of the reproduction.

The image captures a moment of political engagement and community involvement, with the women working together to support a political campaign or cause. The use of black-and-white photography adds a sense of historical significance and nostalgia to the image, evoking a sense of the past and the long history of political activism and participation in American society.

Created by amazon.nova-pro-v1:0 on 2025-05-30

The black-and-white photo shows three women standing in front of a desk. The woman on the left is holding a plastic bag with a ribbon on top, and the woman in the middle is holding a piece of paper. The woman on the right is holding the hand of the woman on the left. Behind them are flags with stars. There are also some items on the desk, including a bag and a box.

Google Gemini

Created by gemini-2.0-flash on 2025-05-18

Here is a description of the image:

This is a black and white inverted image of three women at a table. The woman on the left of the image is wearing a large hat. To the right of her, a woman is wearing a small hat and another woman with a hat and veil is standing with her. The women are standing over a table laden with items that appear to include flags or pennants, playing cards, and other trinkets. The flags of France, The Vatican, and the United States of America can be seen in the background. The negative number "24437" is written across the top of the image.

Created by gemini-2.0-flash-lite on 2025-05-18

Certainly! Here's a description of the image:

Overall Impression:

The image appears to be a negative print of a photograph, likely from the mid-20th century. It captures a scene with three women, possibly at an event or a charitable gathering. They seem to be engaging in some transaction over a table laden with items.

Key Elements:

- Women: Three women are the focal points. They are dressed in light-colored clothing and wear hats, suggesting a formal occasion. One woman has a veil attached to her hat.

- Table & Items: A table is in the foreground. It holds items that appear to be for sale or display. You can make out playing cards, souvenir flags labeled with "France," and possibly other decorative items.

- Interaction: The women are interacting with each other over the table. One is handling an item, suggesting a purchase or exchange.

- Background: The background includes flags, giving a possible hint of the event's context. The American flag is identifiable among others.

Overall Style:

The photograph's style and setting suggest a historical event, possibly a charitable fair or an event promoting French culture, given the flags and "France" labeled souvenirs. The use of negative film gives it a slightly surreal and artistic aesthetic.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-31

The image is a black-and-white photograph depicting a formal event, likely a ceremony or a meeting. It shows three individuals engaged in conversation or a formal exchange.

Setting: The setting appears to be an indoor venue with a flag visible in the background, suggesting it could be a government or official building.

Individuals:

- The person on the left is partially obscured but appears to be wearing a hat and glasses.

- The person in the center is wearing a formal outfit with a hat, possibly a uniform, indicating a position of authority or significance.

- The person on the right is dressed in a white outfit, possibly a traditional or ceremonial garment, and is adorned with jewelry, including a necklace and earrings.

Activity: The individuals seem to be exchanging items or documents. The person on the right is handing something to the person in the center, while the person on the left appears to be observing or participating in the exchange.

Table: The table in front of them is covered with various items, including bags and what appears to be documents or certificates with text and symbols on them.

The overall atmosphere suggests a formal or ceremonial occasion, possibly related to government, diplomacy, or a cultural event.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-05

This black-and-white photograph captures a group of women gathered around a table displaying various items, seemingly at a formal or ceremonial event. The women are dressed in elegant attire, with two wearing distinctive hats adorned with what appear to be religious symbols. They are interacting with each other, with one woman handing an object to another, and there are flags in the background, suggesting the event might be related to a national or international occasion. The table is covered with items that have emblems and text, including phrases like "France," indicating a possible cultural or diplomatic context. The overall atmosphere appears to be one of celebration or ceremony.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-05

This black-and-white photograph captures a group of women engaged in an activity, likely a social or political gathering. They are dressed in elegant attire, with hats and accessories, suggesting a formal event. The women are handling and examining various items on a table, which include cards, ribbons, and other objects. In the background, there are flags, possibly indicating a patriotic or international theme to the event. The overall atmosphere appears to be one of collaboration and discussion.