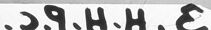

Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 49-57 |
| Gender | Female, 82.6% |
| Calm | 87.5% |
| Happy | 10.7% |
| Surprised | 0.6% |
| Sad | 0.4% |
| Confused | 0.3% |
| Disgusted | 0.2% |
| Fear | 0.1% |
| Angry | 0.1% |

Feature analysis

Amazon

AWS Rekognition

| Feature | Confidence |
| --- | --- |
| Person | 99.6% |

Categories

Imagga

created on 2022-01-23

| Category | Confidence |
| --- | --- |
| paintings art | 97.9% |

Captions

Microsoft

created by unknown on 2022-01-23

| Caption | Confidence |
| --- | --- |
| a vintage photo of a man | 89.8% |
| a person standing posing for the camera | 89.7% |
| a person posing for the camera | 89.6% |

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

a photograph of a woman in a white dress and a hat

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-18

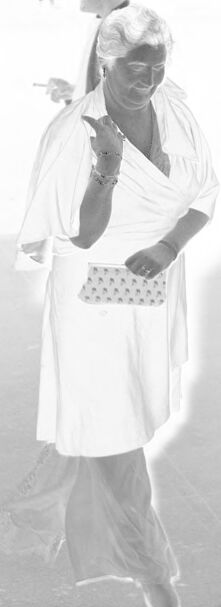

The image appears to be a photographic negative. It features a person wearing a light-colored dress and carrying a clutch purse. The background includes several other figures and indistinct objects such as a pole and possibly a wheelchair. There is handwritten text along the bottom edge of the negative. The scene is outdoors, with ample space and lighting.

Created by gpt-4o-2024-08-06 on 2025-06-18

The image appears to be a photographic negative, showing a person standing in the foreground. The figure is wearing a light-colored dress and holding a small, patterned clutch purse. Jewelry such as bracelets and a ring are visible on their left hand. In the background, there are several other figures and an object resembling a wheelchair. The ground appears to be paved, and there is a pole to the right of the main figure. Handwritten text is visible on the bottom edge, although it is reversed due to the negative format.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-20

The image shows a person wearing a long, flowing white robe or dress, standing in an outdoor setting with some indistinct background elements. The person appears to be holding some kind of object, possibly a book or other item, in their hands. The image has a hazy, atmospheric quality, giving it a dreamlike or ethereal feel.

Created by us.anthropic.claude-3-opus-20240229-v1:0 on 2025-06-20

The black and white photograph depicts an elderly African American woman standing outdoors. She is wearing a simple white dress or robe and has her hair covered with a white scarf or wrap on her head. The woman has a serious, contemplative expression on her face as she looks slightly down and to the side. The background appears hazy or faded, giving an ethereal quality to the image. At the bottom, there is a handwritten caption that reads "FS 1412 Untitled, New S.C.H.H.E."

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-20

This is a black and white photograph that appears to be historical in nature. In the image, there is a person wearing white clothing, including what looks like a white dress or uniform. They are standing outdoors and appear to be holding something in their hands. The photo has a somewhat overexposed or bright quality to it, which is common in older photographs. There appears to be some text or notation at the bottom of the image, though it's not clearly legible. The background is fairly minimal, with what might be a pole or post visible in the scene.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-10

The image is a black-and-white photograph of a woman standing in front of a white background, with a pole to her right and a faint image of a person or object behind her. The woman is wearing a white dress and has short hair. She is holding a small purse in her left hand and appears to be looking down at it.

Here are the key features of the image:

- Woman:
  - Wearing a white dress
  - Has short hair
  - Holding a small purse in her left hand
  - Looking down at the purse
- Background:
  - White
  - Faint image of a person or object behind the woman
- Pole:
  - Located to the right of the woman
  - Appears to be a metal pole
- Other objects:
  - A faint image of a person or object behind the woman
  - A small, illegible text at the bottom of the image

Overall, the image appears to be a candid shot of a woman standing in front of a white background, possibly in a studio or outdoor setting. The faint image of a person or object behind her suggests that there may have been another subject or object in the scene that was not fully visible.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-10

The image is a black and white photograph of a woman walking, with a man in the background. The woman is wearing a white dress or tunic, and has short hair. She is holding a small purse in her left hand and appears to be looking down at something on the ground. The man in the background is also wearing a white outfit, but his features are not clearly visible due to the poor quality of the image.

The background of the image is blurry, but it appears to be a room with a pole or column in the center. There are some indistinct objects on the walls, but they are not clearly visible. The overall atmosphere of the image is one of mystery and intrigue, as the subjects' faces are not clearly visible and the background is unclear.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-08

The black-and-white image shows a woman in a white dress standing on the street. She is smiling and appears to be posing for a photo. She is holding a purse in her left hand and a cigarette in her right hand. She is wearing a bracelet and a ring on her left hand. Behind her, there are two people standing, one of whom is holding a cigarette. The image has a watermark on the bottom left corner.

Created by amazon.nova-pro-v1:0 on 2025-06-08

The black and white image shows a woman with white hair wearing a white dress, bracelets, and a ring. She is holding a small bag and appears to be walking on a concrete floor. She is smiling and giving a peace sign with her right hand. Behind her, there is a blurry image of a man holding a cigarette. The image has a watermark in the bottom left corner.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-18

Here's a description of the image:

Overall Impression:

The image is a negative of a photograph. It appears to be an outdoor scene, possibly taken in the mid-20th century due to the clothing styles and the vintage look of the picture. The focus is on a woman in the center, with other figures in the background.

Main Subject:

- A woman is the central figure. She is wearing a light-colored, flowing outfit. She has her hair pulled back and is holding a small patterned purse. She is gesturing with her hand, and appears to be looking to her right.

Background:

- There are other people in the background, appearing out of focus. They are wearing light-colored clothing.

- There is a tall, thin pole standing to the right of the woman.

- A partially visible object that appears to be a wheeled vehicle.

Additional details:

- The image has a lot of highlights due to being a negative.

- There is some text or labeling at the bottom of the image.

- The edges of the image have the characteristic notches and markings of vintage film negatives.

In Summary:

This is a portrait-style photograph from a vintage negative, likely depicting a social event. The woman in the foreground is the clear focus, with the background figures and setting adding context to the scene.

Created by gemini-2.0-flash on 2025-05-18

Here is a description of the image:

The image shows a woman holding a clutch in a negative format. She is wearing a dress and a light wrap. The image quality is poor. In the background, there are a few other people, one of whom seems to be a man. There is also some kind of vehicle.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-31

The image is a black-and-white photograph featuring two individuals dressed in traditional attire. The foreground figure is an older person wearing a white garment that appears to be a robe or a traditional dress, with a cloth draped over their head. They are holding a staff or walking stick in their right hand and seem to be in a standing position. The background figure, also dressed in white, is partially obscured and appears to be carrying something on their head, possibly a bundle or a container.

The setting appears to be outdoors, with a simple, possibly rural backdrop. The photograph has some visible damage or wear, such as scratches and spots, indicating it might be an old or historical image. There are also some handwritten annotations on the borders of the photograph, but they are not entirely legible. The overall scene suggests a cultural or traditional context, possibly from a specific region or community.