Machine Generated Data

Tags

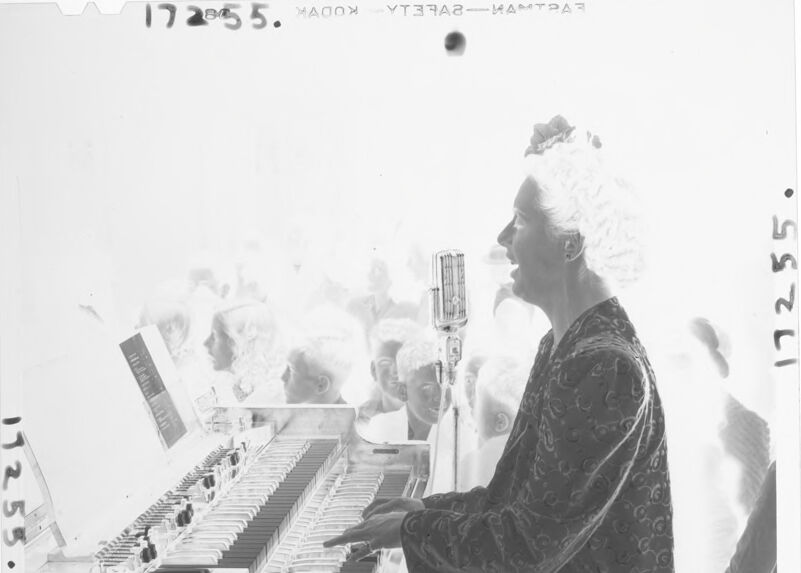

Amazon

created on 2022-01-23

| Tag | Confidence |
| --- | --- |
| Person | 99 |
| Human | 99 |
| Electronics | 90.2 |
| Keyboard | 84.2 |
| Leisure Activities | 79.2 |
| Musician | 77.3 |
| Musical Instrument | 77.3 |
| Piano | 71.3 |
| Person | 59 |
| Person | 45.7 |

Clarifai

created on 2023-10-26

Imagga

created on 2022-01-23

Google

created on 2022-01-23

| Tag | Confidence |
| --- | --- |
| Musical instrument | 93 |
| Piano | 91.3 |
| Organist | 88.5 |
| Keyboard | 88.1 |
| Musician | 85.9 |
| Musical keyboard | 84.3 |
| Music | 81.2 |
| Musical instrument accessory | 79.8 |
| Pianist | 79.8 |
| Jazz pianist | 78.1 |
| Font | 78.1 |
| Recital | 72.3 |
| Electronic instrument | 71.7 |
| Keyboard player | 71.4 |
| Art | 70.1 |
| Electronic musical instrument | 70 |
| Sitting | 65.8 |
| Stock photography | 61.9 |
| Monochrome photography | 61.6 |
| Vintage clothing | 61.4 |

Microsoft

created on 2022-01-23

| Tag | Confidence |
| --- | --- |
| text | 99.9 |
| piano | 97.6 |
| black and white | 86.2 |
| person | 81.4 |
| musical instrument | 72.5 |
| musical keyboard | 68.1 |
| clothing | 63 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 37-45 |
| Gender | Female, 57% |
| Calm | 69% |
| Sad | 26.6% |
| Confused | 1.2% |
| Surprised | 0.8% |
| Fear | 0.8% |
| Angry | 0.6% |
| Disgusted | 0.6% |
| Happy | 0.3% |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 100% |

Captions

Microsoft

created on 2022-01-23

| Caption | Confidence |
| --- | --- |
| a person standing in front of a laptop | 43.4% |
| a person sitting at a desk | 43.3% |
| a person standing in front of a computer | 43.2% |

Text analysis

Amazon

17255

17255.

MAOOX

17 2005 5.

11255.

11255.

17

2005

5.

11255.