Machine Generated Data

Tags

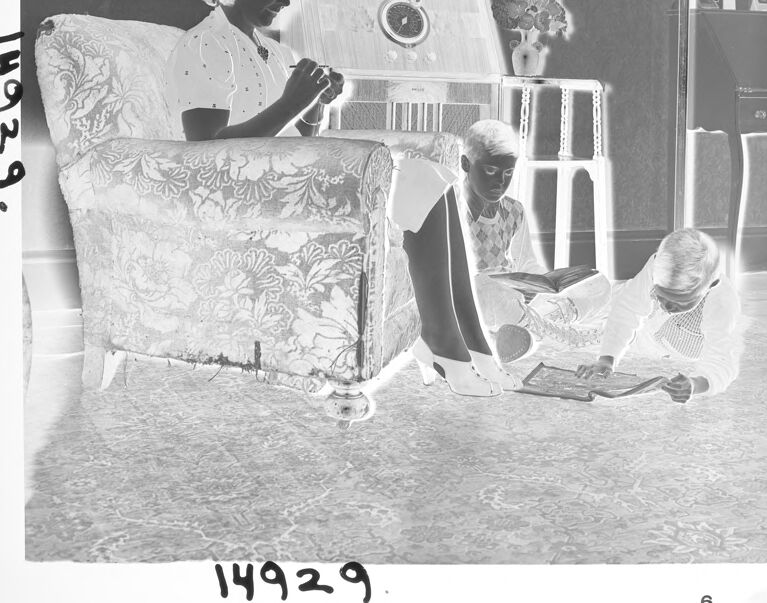

Amazon

created on 2021-12-14

| Tag | Confidence |
|---|---|
| Furniture | 98.3 |
| Person | 96.3 |
| Human | 96.3 |
| Person | 94.7 |
| Person | 90.4 |
| Text | 88.5 |
| Interior Design | 87.3 |
| Indoors | 87.3 |
| Home Decor | 84.7 |
| Drawing | 71.4 |
| Art | 71.4 |
| Living Room | 68.8 |
| Room | 68.8 |
| Chair | 68.2 |
| Bed | 67.7 |
| Photography | 61.4 |
| Photo | 61.4 |
| Rug | 60.1 |
| Person | 57.8 |
| Sketch | 57.6 |
| Page | 57.6 |
| Linen | 56.9 |
| Armchair | 56.1 |
| Female | 55.5 |
| Couch | 55.4 |
| Person | 50.9 |

Clarifai

created on 2023-10-15

Imagga

created on 2021-12-14

| Tag | Confidence |
|---|---|
| electric chair | 24.2 |
| people | 20.6 |
| instrument of execution | 19.7 |
| instrument | 19.6 |
| person | 19.1 |
| man | 16.2 |
| silhouette | 14.1 |
| device | 14 |
| design | 13.5 |
| male | 12.8 |
| team | 12.5 |
| businessman | 12.4 |
| business | 12.1 |
| symbol | 12.1 |
| sexy | 12 |
| home | 12 |
| celebration | 12 |
| teamwork | 11.1 |
| work | 11.1 |
| flag | 11 |
| occupation | 11 |
| black | 10.8 |
| perfume | 10.7 |
| crowd | 10.6 |
| patriotic | 10.5 |
| nation | 10.4 |
| gift | 10.3 |
| lights | 10.2 |
| businesswoman | 10 |
| president | 9.8 |
| cheering | 9.8 |
| film | 9.8 |
| speech | 9.8 |
| audience | 9.7 |
| art | 9.7 |
| stadium | 9.7 |
| decoration | 9.5 |
| happy | 9.4 |
| adult | 9.4 |
| holiday | 9.3 |
| bright | 9.3 |
| human | 9 |
| supporters | 8.9 |
| job | 8.8 |
| nighttime | 8.8 |
| vibrant | 8.8 |
| icon | 8.7 |
| leader | 8.7 |
| boss | 8.6 |
| party | 8.6 |
| presentation | 8.4 |
| vivid | 8.4 |
| color | 8.3 |
| negative | 8.3 |
| paint | 8.1 |
| toiletry | 8.1 |
| medicine | 7.9 |
| couple | 7.8 |
| glass | 7.8 |
| research | 7.6 |
| clothing | 7.6 |
| fashion | 7.5 |
| house | 7.5 |
| fun | 7.5 |
| technology | 7.4 |
| cheerful | 7.3 |
| family | 7.1 |
| medical | 7.1 |
| happiness | 7 |
| paper | 7 |
| modern | 7 |

Google

created on 2021-12-14

| Tag | Confidence |
|---|---|
| Art | 81.5 |
| Chair | 80.6 |
| Font | 80.4 |
| Monochrome | 68.7 |
| Monochrome photography | 68 |
| Vintage clothing | 67 |
| Room | 66.3 |
| Sitting | 64.8 |
| Photo caption | 64.6 |
| Illustration | 63.5 |
| Visual arts | 63.1 |
| Advertising | 62.6 |
| Paper product | 62.4 |
| Stock photography | 62.2 |
| Newsprint | 61.6 |
| Paper | 60.2 |
| Photographic paper | 55.9 |
| Machine | 54.5 |
| Suit | 54.2 |
| Vintage advertisement | 52.4 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 19-31 |
| Gender | Female, 92.1% |
| Calm | 98.1% |
| Sad | 1.6% |
| Confused | 0.1% |
| Happy | 0.1% |
| Angry | 0.1% |
| Surprised | 0.1% |
| Disgusted | 0% |
| Fear | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 25-39 |
| Gender | Female, 69.2% |
| Calm | 98.1% |
| Sad | 1.6% |
| Surprised | 0.1% |
| Angry | 0% |
| Happy | 0% |
| Confused | 0% |
| Fear | 0% |
| Disgusted | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 37-55 |
| Gender | Female, 63.9% |
| Happy | 88.3% |
| Calm | 6.7% |
| Sad | 2.1% |
| Angry | 1.1% |
| Surprised | 0.9% |
| Confused | 0.4% |
| Fear | 0.2% |
| Disgusted | 0.2% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 23-35 |
| Gender | Male, 63.7% |
| Happy | 55.2% |
| Calm | 21.8% |
| Confused | 11.5% |
| Surprised | 7% |
| Sad | 1.9% |
| Angry | 1.5% |
| Disgusted | 0.5% |
| Fear | 0.4% |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 99.9% |

Captions

Microsoft

created on 2021-12-14

| Caption | Confidence |
|---|---|
| an old photo of a man | 64.4% |
| old photo of a man | 61% |
| a man sitting in a room | 51.2% |

Text analysis

Amazon

6

14929

14929.

14929

6

14929.

14929

6

14929.