Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Estimate |
| --- | --- |
| Age | 39-57 |
| Gender | Male, 95.2% |
| Calm | 80.5% |
| Sad | 17.3% |
| Confused | 0.9% |
| Surprised | 0.6% |
| Happy | 0.3% |
| Angry | 0.2% |
| Disgusted | 0.1% |
| Fear | 0% |

Feature analysis

AWS Rekognition

| Person | 99.5% |

Categories

Imagga

Created on 2021-12-14

| paintings art | 99.9% |

Captions

Microsoft

Created by unknown on 2021-12-14

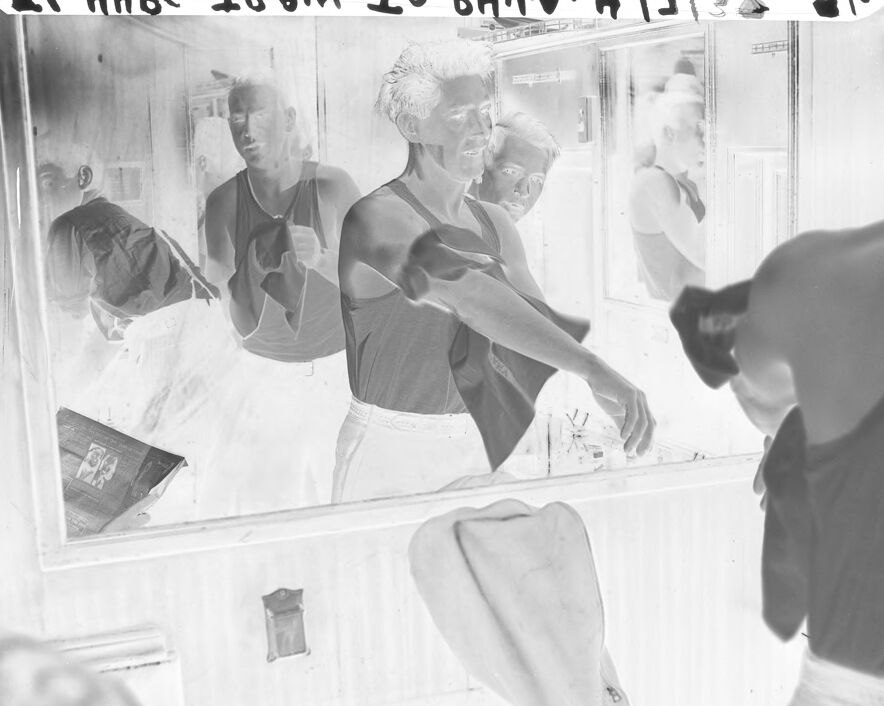

| Caption | Confidence |
| --- | --- |
| a person standing in front of a mirror posing for the camera | 43.5% |
| a group of people standing in front of a mirror posing for the camera | 26.1% |
| a person standing in front of a mirror | 26% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-10

photograph of a woman in a bathtub.

Salesforce

Created by general-english-image-caption-blip on 2025-05-03

a photograph of a man is standing in front of a mirror

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-14

The image is a black-and-white negative photograph depicting a person in front of a mirror. The individual is wearing a tank top and appears to be drying themselves or adjusting their clothing with a towel held in their hand. The mirrored reflection shows the same person and surroundings. The setting appears to be a bathroom, with visible fixtures such as a counter, a light switch, and a towel rack. Notable details include the inverted tones characteristic of negative film, and writing or markings at the top edge of the image.

Created by gpt-4o-2024-08-06 on 2025-06-14

The image is a negative photograph depicting a person standing in front of a mirror in a bathroom. The individual is wearing a sleeveless top and appears to be in the act of grooming, possibly drying their face or hair with a towel. The reflection of the person is visible in the mirror, capturing different angles. The bathroom sink area is visible, along with various items that might be personal care products. The photo also contains handwriting or printing across the top edge and along the right side, which appears inverted and oriented for the negative image.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-13

The image appears to show several individuals, likely athletes or fitness enthusiasts, in what seems to be a locker room or changing area. The individuals are shown in various stages of undress, with some wearing athletic clothing and others shirtless. The image has a grainy, black and white aesthetic, suggesting it may be an older photograph. The overall scene depicts an intimate, behind-the-scenes glimpse into the private space of these individuals as they prepare or change before or after some physical activity.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-13

This appears to be a black and white photograph with a unique multiple exposure or overlapping effect. The image shows what looks like the same person captured in several successive positions, creating a motion-like sequence from left to right. The figure appears to be leaning or moving forward in each frame. The background seems to be indoors with some architectural elements visible. The artistic technique used here creates an interesting visual effect that suggests movement and the passage of time within a single frame.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-27

The image depicts a black-and-white photograph of a man standing in front of a mirror, with his back to the viewer. He is wearing a tank top and white pants, and appears to be engaged in an activity, possibly shaving or applying cologne. The mirror reflects multiple images of the man, creating a sense of depth and visual interest.

In the foreground, the man's reflection is prominent, while the background of the image is less clear. The overall atmosphere of the image is one of quiet contemplation, as if the man is lost in thought as he prepares for the day ahead. The use of black and white photography adds a sense of timelessness and simplicity to the image, drawing the viewer's attention to the subject's expression and posture.

The image also features some handwritten notes at the top, which appear to be illegible. These notes may provide additional context or information about the image, but their meaning is unclear without further clarification.

Overall, the image presents a serene and introspective scene, inviting the viewer to reflect on their own daily routines and the importance of taking time for oneself.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-27

The image is a black and white photograph of a man in front of a mirror, with the photo negative superimposed over it. The man is wearing a tank top and has short hair. He is standing in front of a large mirror, with his reflection visible behind him. The mirror appears to be attached to a wall, and there are various objects on the counter below it, including a book or magazine, a small container, and what looks like a towel or cloth.

The overall atmosphere of the image suggests that the man is getting ready for something, possibly a workout or a shower. The presence of the mirror and the objects on the counter implies that he is in a bathroom or dressing room. The use of a negative image superimposed over the original photo adds a sense of depth and complexity to the image, creating a layered effect that draws the viewer's eye.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-05-26

The black-and-white image depicts a person standing in front of a mirror, possibly in a dressing room. The person is wearing a sleeveless shirt and white pants. He is holding a piece of clothing in his left hand, and his right hand is stretched out. The mirror reflects the person, the room, and a shelf with some objects on it. There is a watermark on the top right corner.

Created by amazon.nova-lite-v1:0 on 2025-05-26

The image is a black-and-white photograph of a group of people in a room with a mirror. The people in the image are wearing sleeveless shirts and pants. The person in the foreground is holding a camera and appears to be taking a picture of the others in the room. The people in the mirror are also wearing sleeveless shirts and pants, and one of them is holding a camera. The image has a watermark in the top left corner.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-04-30

Here's a description of the image:

The image is a black and white negative of what appears to be a bathroom scene. The most prominent feature is a mirror reflecting a series of men.

- Central Figure: A man wearing a dark tank top and white pants is standing in front of the mirror, holding a towel and possibly washing or drying his hands.

- Reflections: The mirror reflects other men, some of whom appear to be partially obscured. The reflection shows another man in a tank top and white pants, also appearing to be in the act of washing.

- Setting: The scene seems to be indoors, possibly a bathroom, with a sink and a few simple accessories. The overall impression is one of a candid or spontaneous moment.

- Other details: The lighting appears to be coming from above. There is some text along the top edge of the picture.

Created by gemini-2.0-flash on 2025-06-16

The photograph is a black and white, high-contrast shot featuring multiple figures in what appears to be a bathroom setting with a large mirror. The overall composition has an eerie, almost ghostly quality due to the high contrast and negative-like aesthetic.

In the foreground, a figure is partially visible, their shoulder and upper torso filling the right side of the frame. They are wearing a tank top.

Reflected in the mirror are several other figures. To the left, a man in a shirt is seen, and beside him, another man in a tank top faces forward, apparently washing his hands in front of a sink. Adjacent to him is another person, slightly out of focus and peering into the scene. Further back in the reflection, another figure is partially visible, giving the impression of a crowded or shared space.

Below the mirror, a sink area is suggested with what looks like a towel draped over it. A small decorative plaque is visible on the wall.

The image also shows the edges of the film used for the photograph, including frame markings and text, indicating its origin from a physical photographic print.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-31

The image is a black-and-white photograph showing a man getting a haircut. The scene is set in what appears to be a barbershop or a similar setting. The man receiving the haircut is seated in a chair, facing a large mirror that reflects multiple images of himself and the barber. The barber is standing to the right of the seated man, holding a pair of scissors and actively cutting the man's hair.

The reflections in the mirror show different angles of the man and the barber, creating a sense of depth and repetition. The man's expression is calm, and he appears to be looking straight ahead. The barber is focused on his task, with his head slightly tilted as he works on the haircut.

The environment includes typical barbershop elements such as a counter with various items on it, including what looks like a small box or container and a cloth. The overall atmosphere of the image suggests a routine activity captured in a candid moment.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-05

The image depicts a scene inside what appears to be a ship or a large transport vehicle, possibly a military or commercial vessel, given the context and attire of the individuals. The photo is monochromatic, suggesting it might be an older photograph, likely from the mid-20th century.

In the center of the image, a man with short hair and a muscular build is seen adjusting his sleeve with his right hand. He is wearing a sleeveless shirt and white pants. Behind him, a second individual is partially visible, also wearing a sleeveless shirt and white pants, and appears to be engaged in some activity. The background shows a mirror reflecting parts of the ship's interior, including a door, various equipment, and a person who seems to be adjusting something near the door.

The photograph has a vintage quality, and there is a label or caption in the upper portion of the image, which appears to be handwritten or typed, possibly providing additional context about the image, such as the date and location. The overall atmosphere suggests a moment of preparation or rest during a journey, possibly related to a work or military operation.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-05

This is a black-and-white photograph showing a group of men in a reflective and layered composition. The image appears to have multiple exposures or reflections, creating an overlapping effect. The men are wearing sleeveless shirts and are positioned in front of a mirror. The mirror reflects their images, adding depth to the scene. There is a hat placed on a counter or shelf in the foreground, along with some other small objects. The photograph has a vintage feel, suggesting it may be from an earlier time period. The text at the top of the image is partially obscured and difficult to read, but it seems to be a caption or title related to the photograph.