Machine Generated Data

Tags

Amazon

created on 2022-01-23

Clarifai

created on 2023-10-26

Imagga

created on 2022-01-23

| silhouette | 37.3 |
| art | 25.2 |
| cartoon | 23.2 |
| sport | 19 |
| people | 17.9 |
| man | 17.1 |
| team | 17 |
| person | 17 |
| player | 16.6 |
| negative | 16.5 |
| male | 15.6 |
| ball | 14 |
| event | 13.9 |
| clip art | 13 |
| symbol | 12.8 |
| athlete | 12.5 |
| film | 12.3 |
| competition | 11.9 |
| championship | 11.7 |
| match | 11.6 |
| design | 11.3 |
| training | 11.1 |
| kick | 10.7 |
| audience | 10.7 |
| skill | 10.6 |
| soccer | 10.6 |
| goal | 10.6 |
| football | 10.6 |
| crowd | 10.6 |
| muscular | 10.5 |
| flag | 10.1 |
| shoot | 9.9 |
| cheering | 9.8 |
| nighttime | 9.8 |
| stadium | 9.7 |
| business | 9.7 |
| patriotic | 9.6 |
| bust | 9.6 |
| icon | 9.5 |
| plaything | 9.5 |
| photographic paper | 9.5 |
| nation | 9.5 |
| lights | 9.3 |
| field | 9.2 |
| pass | 8.8 |
| work | 8.6 |
| teamwork | 8.3 |
| artwork | 8.2 |
| park | 8.2 |
| winter | 7.7 |
| professional | 7.6 |
| sculpture | 7.4 |
| playing | 7.3 |
| creation | 7 |
| vibrant | 7 |

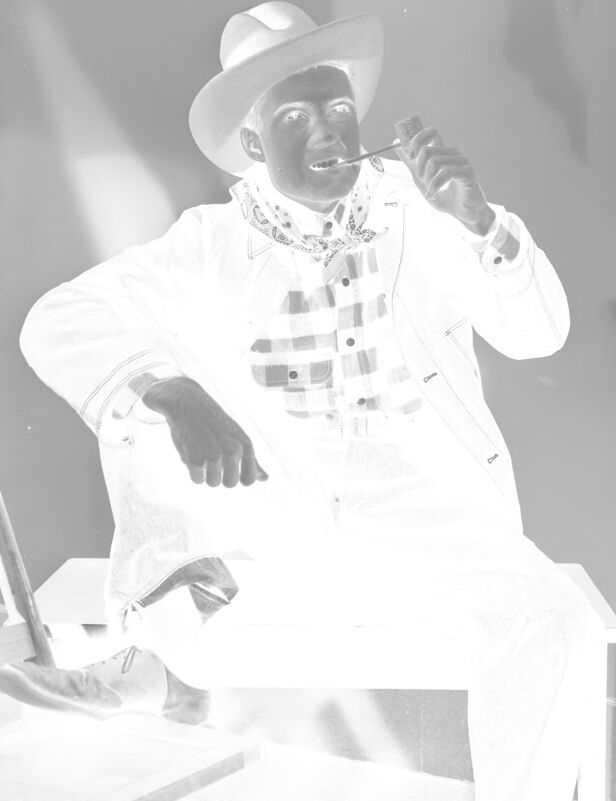

Google

created on 2022-01-23

| Hat | 94.2 |
| Sun hat | 90.7 |
| Fedora | 90.4 |
| Sleeve | 87.2 |
| Costume hat | 86.2 |
| Style | 83.9 |
| Performing arts | 83.9 |
| Black-and-white | 82.7 |
| Artist | 80.1 |
| Suit | 79.2 |
| Entertainment | 77.9 |
| Font | 77.5 |
| Fashion design | 77.1 |
| Cowboy hat | 77 |
| Music | 76.2 |
| Art | 75.1 |
| Eyewear | 74.5 |
| Dance | 74.3 |
| Necklace | 73.6 |
| Monochrome photography | 72.6 |

Microsoft

created on 2022-01-23

| text | 99.9 |
| human face | 93.7 |
| hat | 90.4 |
| fashion accessory | 89.3 |
| clothing | 84.3 |
| music | 76.5 |
| person | 74.2 |
| black and white | 63.8 |
| concert | 53.6 |
| fedora | 50.2 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Age | 38-46 |
| Gender | Male, 99.9% |
| Surprised | 58.6% |
| Happy | 14.1% |
| Calm | 12.4% |
| Sad | 5.6% |
| Confused | 4.3% |
| Disgusted | 3.1% |
| Angry | 1.1% |
| Fear | 0.9% |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| paintings art | 100% |

Captions

Microsoft

created on 2022-01-23

| a close up of a book | 34.6% |
| close up of a book | 29.8% |
| a hand holding a book | 29.7% |

Text analysis

Amazon

10931.

22399 10931.

22399

To931.

To931.