Machine Generated Data

Tags

Amazon

created on 2022-01-23

| Tag | Confidence (%) |
| --- | --- |
| Person | 99 |
| Human | 99 |
| Trumpet | 96.9 |
| Cornet | 96.9 |
| Horn | 96.9 |
| Musical Instrument | 96.9 |
| Brass Section | 96.9 |
| Person | 96.9 |
| Person | 92.4 |
| Person | 92 |
| Person | 91.9 |
| Musician | 89.5 |
| Person | 78.8 |
| Person | 77.7 |
| Music Band | 75.7 |
| Photography | 63.3 |
| Photo | 63.3 |
| Portrait | 63.2 |
| Face | 63.2 |
| Bugle | 55.4 |

Clarifai

created on 2023-10-26

Imagga

created on 2022-01-23

| Tag | Confidence (%) |
| --- | --- |
| brass | 56.3 |
| wind instrument | 48.5 |
| musical instrument | 33.8 |
| cornet | 30 |
| dancer | 26.7 |
| person | 26.5 |
| people | 23.4 |
| performer | 21.7 |
| man | 21.5 |
| adult | 18.9 |
| body | 18.4 |
| male | 17 |
| human | 16.5 |
| health | 15.3 |
| fashion | 14.3 |
| sexy | 13.6 |
| portrait | 13.6 |
| entertainer | 13.4 |
| device | 13.2 |
| elegance | 12.6 |
| lady | 12.2 |
| standing | 12.2 |
| active | 11.9 |
| style | 11.9 |
| dance | 11.7 |
| model | 11.7 |
| horn | 11.5 |
| medical | 11.5 |
| men | 11.2 |
| anatomy | 10.6 |
| attractive | 10.5 |
| biology | 10.4 |
| fitness | 9.9 |
| business | 9.7 |
| trombone | 9.7 |
| indoors | 9.7 |
| motion | 9.4 |
| clothes | 9.4 |
| face | 9.2 |
| pretty | 9.1 |
| exercise | 9.1 |
| science | 8.9 |
| outfit | 8.7 |
| brunette | 8.7 |
| lifestyle | 8.7 |
| dancing | 8.7 |
| professional | 8.4 |
| action | 8.3 |
| inside | 8.3 |
| sport | 8.2 |
| healthy | 8.2 |
| happy | 8.1 |
| stylish | 8.1 |
| dress | 8.1 |
| recreation | 8.1 |
| businessman | 7.9 |
| women | 7.9 |
| vertical | 7.9 |
| x ray | 7.8 |
| black | 7.8 |
| skeleton | 7.8 |
| arm | 7.6 |
| showing | 7.5 |
| life | 7.5 |
| fun | 7.5 |
| makeup | 7.3 |
| sensual | 7.3 |
| figure | 7.2 |
| suit | 7.2 |
| medicine | 7 |

Google

created on 2022-01-23

| Tag | Confidence (%) |
| --- | --- |
| Musical instrument | 94.5 |
| Brass instrument | 87.4 |
| Wind instrument | 86.6 |
| Musician | 86.3 |
| Style | 84 |
| Black-and-white | 83.6 |
| Suit | 83.2 |
| Trumpeter | 80.9 |
| Music | 78.9 |
| Art | 78.8 |
| Woodwind instrument | 78.5 |
| Classical music | 76.7 |
| Entertainment | 74.9 |
| Monochrome photography | 74 |
| Monochrome | 73.3 |
| Event | 73.1 |
| Performing arts | 69.9 |
| Cello | 66.6 |
| Jazz | 64.8 |
| Concert | 63.5 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

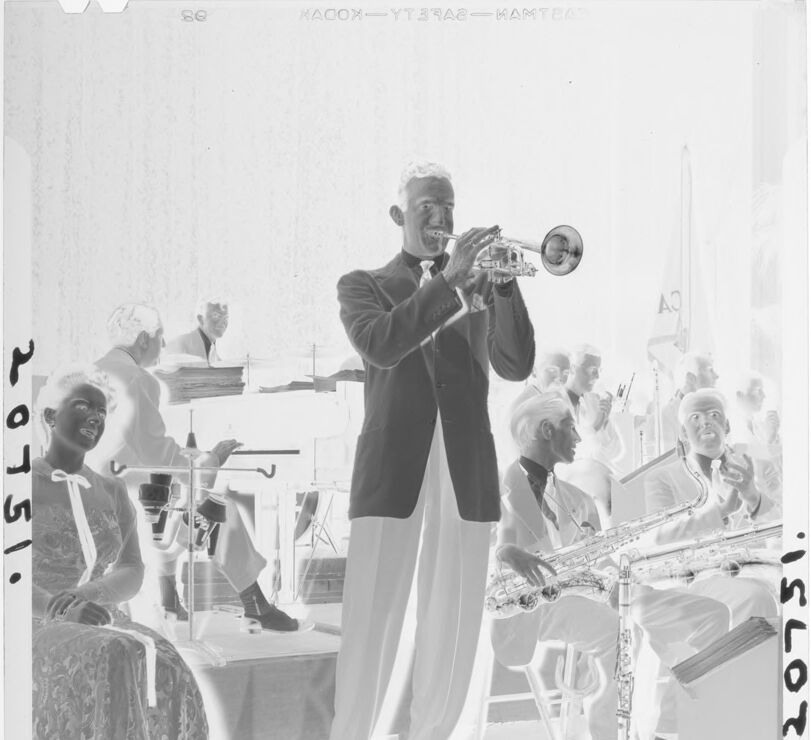

| Attribute | Value |
| --- | --- |
| Age | 31-41 |
| Gender | Male, 99.9% |
| Calm | 32.9% |
| Sad | 32.2% |
| Confused | 20.3% |
| Fear | 8.7% |
| Surprised | 2.5% |
| Disgusted | 2% |
| Angry | 0.8% |
| Happy | 0.6% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 42-50 |
| Gender | Male, 79.8% |
| Calm | 47.1% |
| Happy | 34.4% |
| Surprised | 6.5% |
| Sad | 3.3% |
| Confused | 3% |
| Disgusted | 2.4% |
| Angry | 2.2% |
| Fear | 1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 31-41 |
| Gender | Male, 95.5% |
| Confused | 33.6% |
| Fear | 32.6% |
| Sad | 11.8% |
| Surprised | 10% |
| Calm | 4.1% |
| Disgusted | 3.9% |
| Angry | 2.8% |
| Happy | 1.3% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 24-34 |
| Gender | Male, 75.1% |
| Happy | 90.2% |
| Confused | 3.8% |
| Calm | 2.1% |
| Angry | 1.3% |
| Surprised | 1.1% |
| Disgusted | 0.8% |
| Sad | 0.4% |
| Fear | 0.3% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 25-35 |
| Gender | Female, 78% |
| Calm | 97.9% |
| Sad | 0.7% |
| Fear | 0.3% |
| Surprised | 0.3% |
| Disgusted | 0.3% |
| Angry | 0.2% |
| Confused | 0.2% |
| Happy | 0.1% |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 100% |

Captions

Microsoft

created on 2022-01-23

| Caption | Confidence |
| --- | --- |
| a vintage photo of a person | 75.2% |
| a vintage photo of a man and a woman standing in a room | 64.6% |
| a vintage photo of a person | 64.5% |

Text analysis

Amazon

20751.

so

AO

inron

KACON YTSRA2-MAMTEA

ISLOT

20751•

KACON

YTSRA2-MAMTEA

ISLOT

20751•