Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 29-39 |
| Gender | Female, 64.7% |
| Happy | 97.1% |
| Fear | 1.6% |
| Sad | 0.4% |
| Calm | 0.3% |
| Surprised | 0.3% |
| Confused | 0.1% |
| Disgusted | 0.1% |
| Angry | 0.1% |

Feature analysis

Amazon

AWS Rekognition

| Label | Confidence |
| --- | --- |
| Person | 99.2% |

Categories

Imagga

created on 2022-01-23

| Category | Confidence |
| --- | --- |
| paintings art | 95.7% |
| streetview architecture | 3.7% |

Captions

Microsoft

created by unknown on 2022-01-23

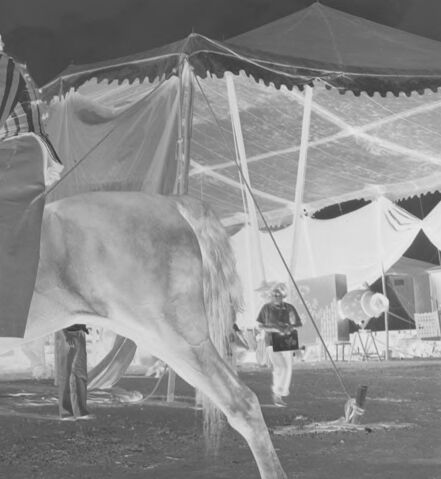

| Caption | Confidence |
| --- | --- |
| a person standing in front of a horse | 89.5% |
| a person standing in front of a horse | 88.6% |
| a person standing next to a horse | 88.3% |

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

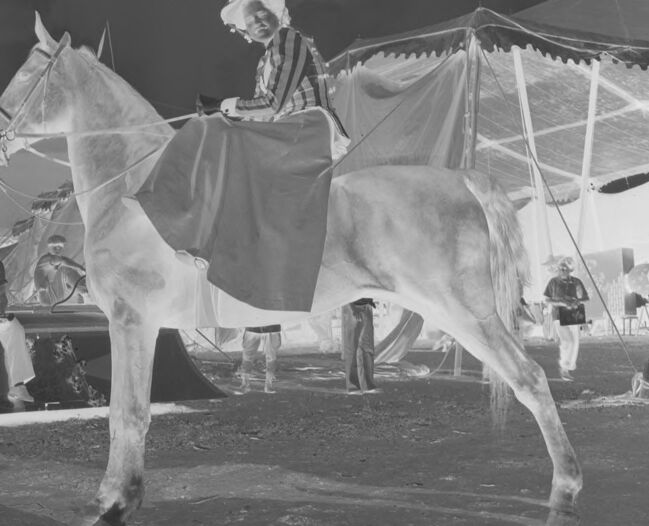

a photograph of a woman in a dress and hat sitting on a horse

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-17

This image is a photographic negative, making the light and dark areas inverted. It depicts a person sitting on a horse in what appears to be an outdoor setting with tents and possibly a fair or festival environment. The person on the horse is wearing a hat, likely a cowboy-style hat, and is dressed in a striped outfit. In the background, we see several people, some seated and others standing, near decorated structures or carts. The negative format renders the image with reversed tones, and the overall scene has a nostalgic or vintage quality.

Created by gpt-4o-2024-08-06 on 2025-06-17

The image appears to be a black-and-white negative photograph. It shows a person sitting on a horse. The person is wearing a cowboy hat and a striped shirt, suggesting a Western or rodeo theme. The horse is standing still with its foreleg slightly raised. In the background, there is a tent, likely indicating a fair or circus setting. Several people are near the tent, with some sitting and others standing, possibly spectators or participants. The overall scene gives a lively outdoor atmosphere. The sky and ground appear inverted due to the negative, with clouds and shadows in the sky standing out prominently in the dark areas.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-16

The image shows a circus scene from the past. In the foreground, there is a person riding a white horse, dressed in a striped costume. The person appears to be a performer or entertainer. Behind them, there are several tents or canopies, suggesting this is a circus or carnival setting. The background is dark, creating a dramatic and atmospheric mood. The overall scene conveys a sense of the spectacle and entertainment associated with a traditional circus performance.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-16

This is a black and white photograph that appears to be from a circus or carnival setting. In the image, a rider sits sidesaddle on a white or light-colored horse. The horse appears to be a show horse, standing elegantly with its legs positioned in a formal stance. The rider is wearing what looks like a striped outfit. In the background, you can see circus tents or canopies and some other people. The lighting and composition create a dramatic effect, with what appears to be a dark sky or backdrop contrasting with the light-colored horse. The scene has a classic, vintage circus atmosphere to it.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-31

The image is a black-and-white photograph of a man riding a horse in a circus setting. The man is dressed in a striped shirt and a hat, and he is holding a flag in his hand. He is sitting on the horse, which is facing to the left. The horse is wearing a blanket or saddle pad on its back.

In the background, there are several people standing around, some of whom appear to be performers or workers at the circus. There are also several tents and other structures visible, suggesting that the scene is taking place in a large outdoor area.

Overall, the image suggests that the man and the horse are part of a circus act, possibly a horse-riding or acrobatic performance. The presence of the flag and the man's attire suggest that they may be performing a patriotic or nationalistic routine.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-31

This image is a black and white photograph of a woman riding a horse, with a circus tent in the background. The woman is wearing a long dress and a hat, and she is holding the reins of the horse. The horse is white and appears to be walking or standing still. In the background, there are several people standing around the circus tent, which has a large entrance and a flag on top. The overall atmosphere of the image suggests that it was taken during a circus performance or event.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-05-30

A black and white photo shows a woman riding a horse. The woman is wearing a hat and a long dress. She is holding the reins of the horse. Behind her, there are people sitting on chairs, and some are standing. There is a large tent with a flag on top of it. There are other tents and some objects on the ground.

Created by amazon.nova-lite-v1:0 on 2025-05-30

The image depicts a black-and-white photograph of a woman riding a horse in an outdoor setting, possibly at a carnival or fair. The woman is wearing a cowboy hat and a long dress, and she appears to be posing for the camera while holding the reins of the horse. The horse is standing on the ground, and its tail is flowing behind it. In the background, there are tents, a flag, and people standing around, suggesting a festive atmosphere. The image has a vintage look, with a slightly blurry effect and a watermark on the bottom right corner.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-18

Certainly! Here's a description of the image:

Overall Impression:

The image appears to be a vintage, negative photograph capturing a scene that looks like a circus or a similar entertainment setting. The lighting and composition suggest it's a snapshot from the early to mid-20th century.

Main Subject:

- The central figure is a person dressed in a striped blouse and a skirt, wearing a hat, riding a white horse. They are posed and looking towards the camera.

Background and Surroundings:

- Behind the horse and rider, there are canvas tents and structures that evoke a circus tent appearance.

- Several people are present, giving a sense of an active event or preparation.

- The sky is overcast, suggesting either dusk or a cloudy day.

Details:

- There's a small flag visible in the background.

- The overall feel of the image is one of classic, historical photography.

- The negative format gives a unique look of the photo.

Created by gemini-2.0-flash on 2025-05-18

Here's a description of the image:

This is a black and white photographic negative. It features a light-colored horse with a woman seated upon it. She is wearing a hat and a striped garment. The horse is saddled with a dark-colored cloth.

In the background, there are several tents, which appear to be part of a circus or fairground setting. People are milling around, with some seated on a trailer. A flag is visible atop one of the tents. The sky is filled with dark, ominous clouds.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-31

The image depicts a scene that appears to be from a fair or circus. In the foreground, there is a person riding a horse. The horse is covered with a decorative cloth or blanket that has a fringe, and the rider is dressed in what looks like a traditional or ceremonial outfit, including a hat with a large feather.

In the background, there are several tents, some of which have flags or banners on top. A group of people is gathered around a table, possibly engaged in conversation or some form of activity. The overall setting suggests a festive or celebratory atmosphere, likely part of a larger event or fair. The photograph is in black and white, indicating it might be from an earlier era.