Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 24-34 |
| Gender | Male, 94.5% |
| Sad | 62.7% |
| Calm | 20.7% |
| Confused | 10.9% |
| Disgusted | 3.7% |
| Angry | 1.1% |
| Fear | 0.4% |
| Happy | 0.3% |
| Surprised | 0.2% |

Feature analysis

Amazon

Clarifai

AWS Rekognition

| Feature | Confidence |
| --- | --- |
| Person | 99% |

Categories

Imagga

Created on 2022-01-23

| Category | Confidence |
| --- | --- |
| paintings art | 97.8% |
| beaches seaside | 1.5% |

Captions

Microsoft

Created by unknown on 2022-01-23

| Caption | Confidence |
| --- | --- |
| a sign on a dirt road | 65.5% |
| an old photo of a person | 39% |
| a sign on the side of a dirt field | 38.9% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-15

photograph of a group of young men running through a forest.

Salesforce

Created by general-english-image-caption-blip on 2025-05-21

a photograph of a group of people standing in a forest

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-10

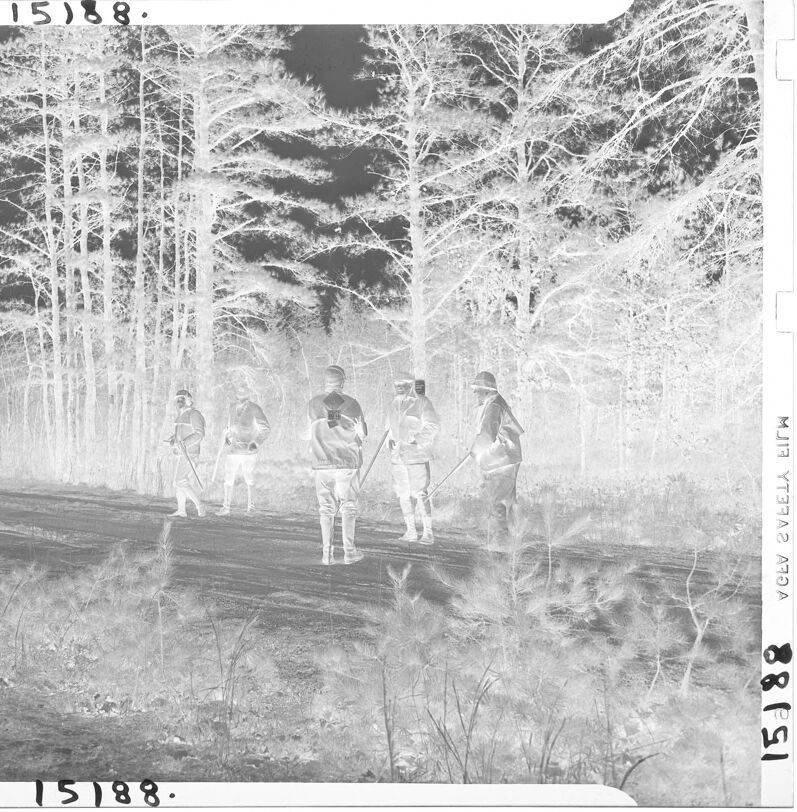

This is a black-and-white negative image showing a group of people outdoors in a forested area. There are six individuals, some holding objects resembling rifles or tools. They appear to be standing on or near a dirt path surrounded by trees and vegetation. The trees have a skeletal look due to the high contrast of the image negative, and the sky is rendered dark due to the inversion of the colors. The numbers "15188" are written at the top and bottom edges of the negative, which may be a reference or cataloging number.

Created by gpt-4o-2024-08-06 on 2025-06-10

This is a negative image depicting a group of people standing on a dirt path in a wooded area. The image appears to be in a forest setting with tall trees in the background. The people in the image are wearing clothing that suggests they might be hunters or outdoors enthusiasts, as they are holding what appear to be rifles or shotguns. The figures are distributed along the path, with some walking and others standing still. Because this is a negative image, the dark areas appear light and vice versa. The texture of the foliage and trees is quite detailed, and the scene captures a sense of rugged outdoor activity. There are numbers written at the top and bottom of the image, reading "15188."

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-16

The image appears to be a black and white photograph depicting a group of people standing on a path in a snowy, forested area. The trees in the background are bare and covered in snow, creating a stark and wintry scene. The people in the image are standing together, but their faces are not clearly visible. The overall atmosphere of the image is one of a quiet, peaceful winter landscape.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-16

This is a black and white historical photograph showing a group of people playing golf. The scene appears to be set on a golf course with tall pine trees in the background, creating a striking contrast with their white branches against a dark sky. The players are standing on what appears to be a fairway or rough area of the course, and they're all holding golf clubs. They're dressed in what looks like early 20th century golf attire, including knickers or short pants and caps. The image has a dreamy, almost ethereal quality due to the contrast and lighting, with the trees appearing particularly luminous against the darker background. The number "15188" appears to be a reference or catalog number for the photograph.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-10

The image is a black and white photograph of a group of people walking along a road in the woods, with trees and grass visible in the background.

The group consists of seven individuals, all dressed in casual attire, including pants, shirts, and hats. They are carrying long sticks or poles, which may be used for walking or possibly for hunting or other activities. The road they are walking on appears to be a dirt or gravel path, and it stretches out into the distance, disappearing behind the trees.

In the foreground, there is a patch of grass and weeds growing alongside the road. The trees in the background are tall and slender, with branches that stretch up towards the sky. The overall atmosphere of the image suggests a peaceful and serene scene, with the group of people enjoying a leisurely walk through the woods.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-10

The image is a black-and-white photograph of a group of people standing on a road in a wooded area. The photo has been edited to appear as if it was taken with an infrared camera, with the trees and vegetation appearing white and the sky appearing dark.

There are eight people visible in the image, all wearing casual clothing and hats. They are standing on a dirt road that runs through the center of the image, surrounded by tall trees and underbrush. Some of the individuals are holding sticks or other objects, but it's unclear what they are doing or what the purpose of the gathering is.

In the foreground, there are several plants and weeds growing along the side of the road. The background of the image shows a dense forest with many trees, some of which have lost their leaves. The overall atmosphere of the image suggests a rural or wilderness setting, possibly during the fall or winter season.

The image has a white border around it, with some handwritten notes and numbers in the corners. These notes appear to be related to the development or processing of the photograph, but they do not provide any additional information about the content or context of the image. Overall, the image presents a serene and peaceful scene, with a sense of mystery surrounding the purpose and identity of the individuals depicted.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-09

The image depicts a monochromatic photograph of a group of people standing in a line along a dirt road. The setting appears to be a forest or a wooded area, with tall, leafless trees lining both sides of the road. The photograph is dated 15188, and there is a watermark on the right side of the image that reads "MJR VELLA AREA." The people in the photograph are dressed in uniforms and are holding long sticks or poles, possibly indicating that they are engaged in some kind of outdoor activity or training. The image has a vintage or historical feel, suggesting that it may be an old photograph or a reproduction of an old photograph.

Created by amazon.nova-pro-v1:0 on 2025-06-09

The image is a black-and-white photograph of a group of people standing on a dirt road surrounded by tall trees. The photograph has a vintage look, and the people are dressed in uniforms, possibly military or police. They are holding sticks or poles, and some of them are wearing hats. The road is narrow and appears to be in a rural area. The trees are tall and have a dense canopy, and the ground is covered in grass and weeds.

Google Gemini

Created by gemini-2.0-flash on 2025-05-17

Here's a description of the image:

The image is a black-and-white photographic negative. It depicts a group of people standing on what appears to be a dirt road or path in a wooded area. The background is filled with tall, thin trees.

There are six people visible in the image. They appear to be wearing jackets or coats and some may have headgear. Several of them are holding long, slender objects, possibly sticks or rods.

The foliage in the foreground and around the path is also visible, appearing lighter in the negative. The overall composition of the image suggests a rural or outdoor setting, possibly a forest or park.

Created by gemini-2.0-flash-lite on 2025-05-17

Certainly! Here's a description of the image:

Overall Impression:

The image is a black-and-white, negative image, possibly from a film negative. It depicts a group of people in a wooded setting.

Key Elements:

- Setting: The scene appears to be a forest or woodland area, with tall, slender trees dominating the background. There's a path or dirt road visible in the foreground.

- People: Several people are present. They seem to be wearing some sort of uniform, perhaps military or work attire. They are positioned along the path, some holding what appear to be walking sticks or similar items.

- Composition: The composition leads the eye from the front, where there is some ground cover, towards the people in the middle ground, and finally to the dense trees in the background.

Additional Notes:

- The image's negative appearance (black areas are light and vice versa) gives a distinct visual quality.

- The focus seems to be on the people and the environment, implying an activity taking place.

Let me know if you would like me to focus on any particular aspect of the image!

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-05

The image is a black-and-white photograph that appears to be taken in a wooded area. The scene shows a group of seven people walking along a dirt path or road, with tall trees in the background. The individuals are dressed in casual clothing, some wearing hats, and a few are carrying items such as bags or tools. The trees are leafless, suggesting it might be late autumn or winter. The lighting in the image is somewhat hazy, which could be due to the photographic quality or atmospheric conditions. The number "15188" is visible at the top and bottom of the image, possibly indicating a catalog or reference number. The right side of the image has some text, but it is not entirely clear. The overall setting suggests a rural or forested environment, and the group appears to be engaged in some outdoor activity or journey.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-09

The image appears to be a black-and-white photograph taken in a forested area. It shows a group of five individuals walking along a dirt path. The individuals are dressed in what looks like early 20th-century attire, including hats and long coats, suggesting a historical context. The path is flanked by trees, and the overall scene has a serene, natural atmosphere. The photograph has a label "15188" at the top and bottom, indicating it might be a document or archival image. The lighting and exposure appear to be adjusted to highlight the outlines of the trees and the figures, which gives the photograph a somewhat ethereal or ghostly quality.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-09

This is a black-and-white photograph, likely a historical or archival image, featuring a group of people in a forested area. The scene appears to be set in a clearing with tall trees in the background. There are five individuals visible in the image. They are dressed in what seems to be early 20th-century attire, including coats, hats, and boots. The individuals appear to be engaged in some form of outdoor activity, possibly related to forestry or land management, as they are holding tools that resemble axes or similar implements. The image has a vintage quality, with a number "15188" printed in the upper left corner and along the right edge, suggesting it is part of a catalog or collection. The overall atmosphere is serene and natural, with the individuals standing on a dirt path surrounded by trees and underbrush.