Machine Generated Data

Tags

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 4-14 |
| Gender | Female, 54.6% |
| Calm | 72.7% |
| Happy | 14.3% |
| Sad | 5.7% |
| Angry | 5.2% |
| Surprised | 0.9% |
| Fear | 0.6% |
| Disgusted | 0.3% |
| Confused | 0.3% |

Feature analysis

Amazon

| Label | Confidence |
|---|---|
| Person | 99.4% |

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 44.1% |
| streetview architecture | 33.1% |
| interior objects | 18.7% |
| pets animals | 1.4% |
| people portraits | 1.1% |

Captions

Microsoft

Created by unknown on 2021-12-15

| Caption | Confidence |
|---|---|
| an old photo of a man | 87.6% |
| a man sitting in front of a building | 70.6% |
| old photo of a man | 70.5% |

Clarifai

Created by general-english-image-caption-blip on 2025-05-18

| Caption | Confidence |
|---|---|
| a photograph of a man in a robe is sitting in a chair | -100% |

Google Gemini

Created by gemini-2.0-flash on 2025-05-16

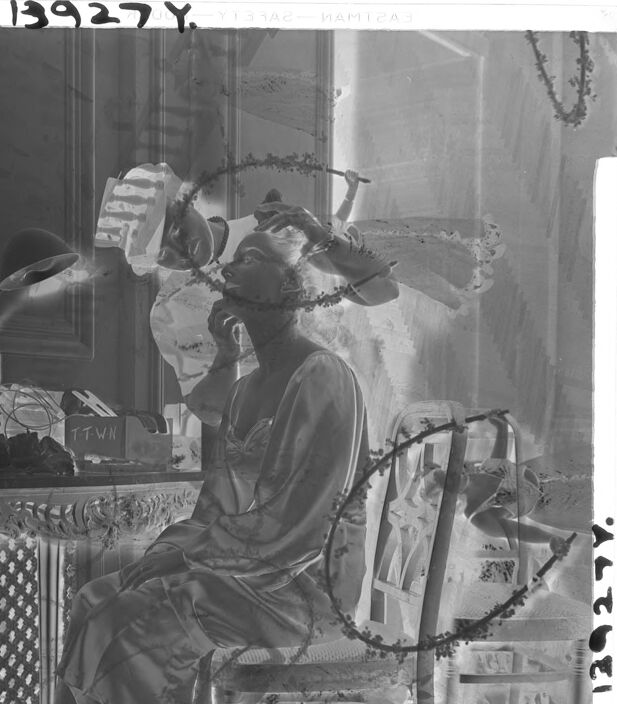

Here is a description of the image:

The image appears to be a black and white negative of a photograph. A woman is seated on a chair in a room, likely getting her makeup done. She is wearing what seems to be a gown or robe. Above her, another person is working on her hair or makeup. There is a vanity or table to the left with a lamp, a box labeled "TTWN," and various other items. The surroundings seem to be an interior space. The negative shows a few numbers printed along the top and side edges. Finally, some sort of decoration that looks like a wreath with berries or thorns is visible in front of everything in the photo.

Created by gemini-2.0-flash-lite on 2025-05-16

Here's a description of the image:

Overall: This appears to be a black and white negative image of a staged scene. The composition is slightly surreal, with a dreamlike quality.

Main Subject: A woman is seated on a wooden chair, possibly wearing a nightgown or a flowing dressing robe. She has a pensive or reflective expression.

Setting and Elements:

- Furniture: A highly ornamented table is in the background, appearing to be behind the woman.

- Other Figures: There appear to be other people interacting around the main figure, perhaps getting ready or serving as attendants.

- Floral or Vine Details: Vine-like elements are overlaid upon the image, which gives the piece a sense of nature's intrusion into the scene.

Technical Aspects:

- Negative: The image is a negative, where light and dark values are inverted.

- Lighting: The lighting is soft, diffused, and appears to come from the left side of the frame.

Atmosphere: The photograph has a quiet, contemplative mood. The composition feels staged and slightly theatrical, suggesting an artistic portrait.