Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 49-67 |
| Gender | Male, 75.1% |
| Calm | 86.9% |
| Fear | 6% |
| Surprised | 2.7% |
| Happy | 1.3% |
| Sad | 1.2% |
| Confused | 0.8% |
| Angry | 0.6% |
| Disgusted | 0.6% |

Feature analysis

Amazon

AWS Rekognition

| Label | Confidence |
| --- | --- |
| Person | 99.1% |

Categories

Imagga

created on 2021-12-15

| Category | Confidence |
| --- | --- |
| paintings art | 87.5% |
| beaches seaside | 10% |

Captions

Microsoft

created by unknown on 2021-12-15

| Caption | Confidence |
| --- | --- |
| a vintage photo of a group of people posing for the camera | 84.9% |
| a vintage photo of a group of people posing for a picture | 84.8% |
| a vintage photo of a man | 84.7% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-12

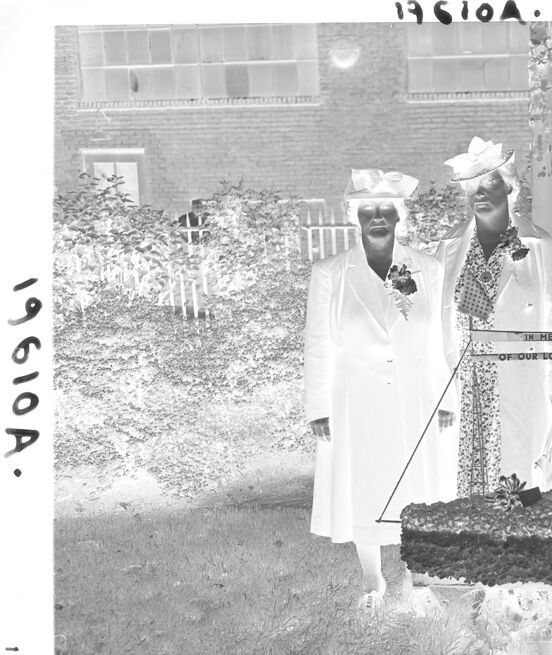

a group of women dressed in white and black pose in front of a fountain.

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

a photograph of a group of people in white dresses and hats

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-18

The image depicts four women standing outdoors, dressed in formal attire that suggests a ceremonial or commemorative occasion. They are positioned around a floral arrangement with a sign that reads "IN MEMORY OF OUR LOVED ONES." The setting appears to be a garden or a well-maintained grassy area, with a building visible in the background. The negative format of the photo inverses the colors, giving it an unusual appearance where light tones appear dark and vice versa. The women wear hats and coats, and their clothing styles reflect a vintage era.

Created by gpt-4o-2024-08-06 on 2025-06-18

The image appears to be a black and white photograph of four women standing outdoors on a grassy area next to a brick path. The women are dressed in formal attire, with hats and coats. They are gathered around a floral display or arrangement that is suspended or resting on a stand. The display includes a banner that reads "IN MEMORY OF OUR LOVED ONES." The background features a brick building with large windows and some foliage, such as bushes and trees. The photograph has a negative effect, meaning the colors are inverted.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-18

The image shows a group of four women standing in a garden or outdoor setting. They are all wearing elaborate, formal attire with hats, fur coats, and decorative accessories. In the center of the image is a large floral arrangement or memorial display on a pedestal. The women appear to be posing for a photograph, though their expressions are not clearly visible. The overall scene suggests some kind of ceremonial or commemorative event, though the specific context is not clear from the image alone.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-18

This appears to be a black and white historical photograph from around the 1920s or 1930s. Several people are standing in a line outdoors, all wearing white dresses and hats in what appears to be summer attire. There's a brick building visible in the background and what looks like a garden or landscaped area with a pathway. The image has "1951OA" written at the top, suggesting this might be related to some kind of organization or event from that time period. There appears to be some kind of memorial or marker in the ground near where they are standing. The style of dress and composition suggests this might be a formal or commemorative occasion.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-09

The image is a black-and-white photograph of four women standing in front of a memorial, with a brick building and garden in the background. The women are dressed in white dresses and hats, and the memorial features a sign that reads "In Memory of Our Loved Ones." The overall atmosphere of the image suggests a somber and respectful occasion, possibly a funeral or memorial service.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-09

The image is a black-and-white photograph of four women standing in front of a memorial, with a brick building visible in the background. The women are dressed in formal attire, including hats and coats, and are positioned around a memorial that features a sign with the inscription "IN MEMORY OF OUR LOVED ONES." The memorial also includes a floral arrangement and a small tree or plant.

The photograph appears to be from the early 20th century, based on the clothing and hairstyles of the women. The image is likely a commemorative or memorial photo, taken to honor the memory of loved ones who have passed away. The presence of the memorial and the formal attire of the women suggest that the occasion may have been a funeral or memorial service.

Overall, the image provides a glimpse into the past, capturing a moment of remembrance and tribute to those who have been lost. It serves as a reminder of the importance of honoring our loved ones and preserving their memories for future generations.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-06

A black and white image of four women dressed in white robes and hats is standing on a brick walkway. They are holding a wooden sign that reads "In Memory of Our Loved Ones." The women are standing next to a large flower arrangement that is on fire. The image has a watermark on the top left corner that reads "196104" and a number "196104" on the bottom left corner.

Created by amazon.nova-pro-v1:0 on 2025-06-06

The black-and-white photo features four women standing in front of a building. They are all wearing hats and white dresses. They are standing next to each other, and they are holding a wooden structure with a sign that reads "In Memory of Our Loved Ones." They are standing on a brick pathway in front of a grassy area. Behind them are plants and a building with glass windows.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-16

Here is a description of the image:

This is a black and white image, likely a negative of a photograph. It appears to depict four women standing together, likely posed for a picture. They are dressed in what seems like formal attire, possibly for a memorial or similar occasion.

Here are some key observations:

- Attire: All the women are wearing long, white coats or dresses, and hats. Some are also wearing fur stoles or accessories. The style suggests a mid-20th century era.

- Memorial: In the foreground, a floral arrangement or a wreath is present. There's a sign that reads "IN MEMORY OF OUR LOVED ONES". There is also a display with an American flag.

- Setting: The scene appears to be outdoors, possibly a yard in front of a brick building with large windows. A picket fence is visible in the background.

- Overall: The image captures a solemn moment, likely a memorial service or a dedication to the deceased.

It's a poignant image, offering a glimpse into a specific moment in time.

Created by gemini-2.0-flash on 2025-05-16

Here is a description of the image:

The image shows a black and white photo of four women posing in front of a memorial. They are wearing formal attire, with long coats and hats. The memorial is decorated with flowers and a sign that reads "In Memory of Our Loved Ones." The women are standing on a brick path, with grass and shrubs in the background. A building with multiple windows can be seen in the background as well. The image seems to have been printed as a photographic negative, as the lighter elements of the scene appear dark, and vice versa. There are two annotations in the image with the alphanumeric code 19610A.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-01

The image is a black-and-white photograph depicting four women standing outdoors in what appears to be a garden or courtyard area. They are gathered around a memorial or monument, which is adorned with flowers and has a plaque that reads "IN MEMORY OF OUR LOVED ONES."

The women are dressed in formal attire, with some wearing hats and fur stoles, suggesting that the occasion is likely a somber or commemorative event. The background includes a brick building and some well-maintained shrubbery, adding to the formal and respectful atmosphere of the scene. The photograph appears to be quite old, possibly from the mid-20th century, based on the clothing styles and the overall quality of the image. The number "19610A" is written on the top and left side of the photograph, which could be an identification or catalog number.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-05

This is a black-and-white photograph dated "19610A." It features four women standing in a garden, dressed in formal attire, suggesting a formal or ceremonial occasion. They are wearing long white dresses with hats, and their outfits and hairstyles indicate a specific cultural or traditional context. The women are positioned around a floral arrangement that is placed on a stand, which has a plaque that reads "IN MEMORY OF OUR LOVED ONES." The background includes a brick building, a fence, and some greenery. The setting appears to be a tribute or memorial event, likely honoring someone significant to the group.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-05

This black-and-white image shows four women dressed in formal white attire, including hats and some with fur accents, standing outdoors in a garden setting. They are positioned around a small memorial or flower arrangement placed on a stand, which has a sign that reads "IN MEMORY OF OUR LOVED ONES." The background features a brick building with windows, a hedge, and a metal fence. The photograph has a vintage quality, suggesting it was taken in the early 20th century. The image also has a watermark with the text "19610A."