Machine Generated Data

Tags

Amazon

created on 2022-01-09

| Tag | Confidence (%) |
|---|---|
| Person | 99.1 |
| Human | 99.1 |
| Person | 98.9 |
| Person | 98.8 |
| Hat | 97.6 |
| Clothing | 97.6 |
| Apparel | 97.6 |
| Person | 96.6 |
| Person | 91.8 |
| Hat | 84 |
| Sunglasses | 81.6 |
| Accessories | 81.6 |
| Accessory | 81.6 |
| Tie | 77.3 |
| Face | 77.2 |
| Finger | 76.4 |
| Crowd | 74.4 |
| People | 73.1 |
| Text | 69.6 |
| Person | 64.6 |
| Head | 63.8 |
| Overcoat | 60.6 |
| Coat | 60.6 |
| Suit | 60.1 |
| Musician | 56.6 |
| Musical Instrument | 56.6 |
| Sleeve | 56.3 |
| Person | 49.7 |

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-09

| Tag | Confidence (%) |
|---|---|
| man | 48.4 |
| surgeon | 37.7 |
| person | 36.5 |
| male | 36.2 |
| people | 29.5 |
| senior | 23.4 |
| patient | 22.2 |
| adult | 21.9 |
| portrait | 19.4 |
| men | 18.9 |
| professional | 18.3 |
| work | 18 |
| medical | 17.7 |
| worker | 16.9 |
| elderly | 16.3 |
| doctor | 16 |
| job | 15.9 |
| nurse | 15.9 |
| happy | 15 |
| hospital | 14.8 |
| occupation | 14.7 |
| working | 14.1 |
| old | 13.9 |
| equipment | 12.8 |
| home | 12.8 |
| business | 12.7 |
| retired | 12.6 |
| health | 12.5 |
| indoors | 12.3 |
| mask | 11.9 |
| surgery | 11.7 |
| room | 11.5 |
| hand | 11.4 |
| case | 11.4 |
| sitting | 11.2 |
| glasses | 11.1 |
| medicine | 10.6 |
| retirement | 10.6 |
| grandfather | 10.5 |
| illness | 10.5 |
| mature | 10.2 |
| smiling | 10.1 |
| face | 9.9 |
| clinic | 9.9 |
| handsome | 9.8 |
| businessman | 9.7 |
| looking | 9.6 |
| specialist | 9.5 |
| sick person | 9.5 |
| happiness | 9.4 |
| casual | 9.3 |
| care | 9.1 |
| human | 9 |
| pensioner | 9 |
| profession | 8.6 |
| industry | 8.5 |
| hat | 8.5 |
| teacher | 8.3 |
| office | 8.2 |
| team | 8.1 |
| to | 8 |
| operation | 7.9 |
| uniform | 7.8 |
| education | 7.8 |
| emergency | 7.7 |
| clothing | 7.6 |
| adults | 7.6 |
| camera | 7.4 |
| industrial | 7.3 |
| lifestyle | 7.2 |
| helmet | 7 |

Google

created on 2022-01-09

| Tag | Confidence (%) |
|---|---|
| Hat | 89 |
| Gesture | 85.3 |
| Style | 83.9 |
| Black-and-white | 83.6 |
| Sleeve | 83 |
| Cap | 76.6 |
| Art | 76.3 |
| Monochrome | 75.8 |
| Monochrome photography | 75.7 |
| Snapshot | 74.3 |
| Sun hat | 73.6 |
| Baseball cap | 70 |
| Font | 66.6 |
| Event | 65.9 |
| Vintage clothing | 65.5 |
| Stock photography | 64.3 |
| Photo caption | 62 |
| Uniform | 58.2 |
| Illustration | 56.1 |
| Crew | 55.8 |

Microsoft

created on 2022-01-09

| Tag | Confidence (%) |
|---|---|
| person | 98.9 |
| text | 98.5 |
| clothing | 91.9 |
| man | 85.8 |
| hat | 79.1 |
| helmet | 73.5 |
| human face | 71.1 |
| black and white | 68 |
| fashion accessory | 64.5 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 45-51 |
| Gender | Male, 99.2% |
| Happy | 63.9% |
| Confused | 12.5% |
| Surprised | 12.4% |
| Sad | 4.3% |
| Disgusted | 2.9% |
| Fear | 2.6% |
| Angry | 1% |
| Calm | 0.5% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 42-50 |
| Gender | Male, 87.6% |
| Calm | 96.3% |
| Confused | 1.2% |
| Happy | 0.7% |
| Sad | 0.6% |
| Surprised | 0.5% |
| Disgusted | 0.5% |
| Angry | 0.2% |
| Fear | 0.2% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 49-57 |
| Gender | Male, 74.4% |
| Surprised | 65.4% |
| Angry | 19.6% |
| Confused | 5% |
| Happy | 3.3% |
| Calm | 2.3% |
| Sad | 2% |
| Fear | 1.2% |
| Disgusted | 1.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 48-54 |
| Gender | Male, 98.9% |
| Calm | 79.4% |
| Happy | 6.1% |
| Sad | 4.3% |
| Surprised | 3.4% |
| Confused | 2.3% |
| Disgusted | 2.1% |
| Fear | 1.4% |
| Angry | 1.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 47-53 |
| Gender | Male, 98.8% |
| Calm | 99.8% |
| Sad | 0.1% |
| Happy | 0% |
| Angry | 0% |
| Surprised | 0% |
| Disgusted | 0% |
| Confused | 0% |
| Fear | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-36 |
| Gender | Female, 87.1% |
| Calm | 50.4% |
| Fear | 37.7% |
| Sad | 4.3% |
| Happy | 3% |
| Surprised | 1.6% |
| Confused | 1.2% |
| Disgusted | 1.1% |
| Angry | 0.6% |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Possible |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| events parties | 70.3% |
| people portraits | 27.3% |
| streetview architecture | 1.3% |

Captions

Microsoft

created by unknown on 2022-01-09

| Caption | Confidence |
|---|---|
| a group of people standing around each other | 83.2% |
| a group of people posing for a photo | 83.1% |
| a group of people posing for the camera | 83% |

Clarifai

created by general-english-image-caption-blip on 2025-05-22

| Caption | Confidence |
|---|---|
| a photograph of a group of men in white robes and hats | -100% |

Google Gemini

Created by gemini-2.0-flash on 2025-05-17

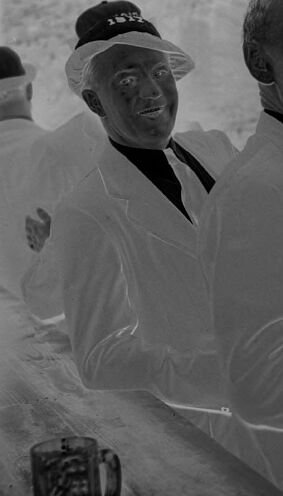

Certainly! Here's a description of the image:

The image is a black and white photograph, appearing to be an older print. It shows a group of men gathered together, likely at an event or gathering.

Key details include:

- Setting: The scene appears to be either indoors or under a tent, given the light and possible tent structure in the background.

- Men: The group primarily consists of men. They are dressed in similar clothing, possibly a uniform of some kind. Many of them are wearing hats.

- Expressions: The men in the foreground are smiling.

- Hats: The hats are distinctive. One hat is labeled "1917", while others have unique shapes and brims.

- Object: There is also what looks like a glass or beer stein visible on a surface.

The photograph has an aged feel, evident in its monochrome presentation and what appears to be a slightly degraded quality, making it a historical image.

Created by gemini-2.0-flash-lite on 2025-05-17

Certainly! Here's a description of the image:

Overall Impression:

The image is a black and white, negative photograph of what appears to be a gathering of men. The scene is likely outdoors or in a tent, given the light filtering through and the backdrop. The men are dressed in suits and hats, suggesting a formal or celebratory occasion.

Key Elements:

- The Men: Several men are clustered together, smiling and engaging with each other. Some are holding glasses or mugs.

- Clothing: The men are primarily wearing white suits, black ties, and hats. Some hats appear to have specific inscriptions, like "1917".

- Atmosphere: The photograph conveys a sense of camaraderie and enjoyment. The smiles and interactions among the men suggest a relaxed and social environment.

- Background: The background is blurred, hinting at a location, possibly a tent or a shaded area with light coming through.

- Composition: The men are in a close group, with some arms around each other, creating a sense of intimacy.

Overall, the photograph captures a moment of celebration or social gathering among a group of men, likely in the early 20th century.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-02

The image is a black-and-white photograph depicting a group of men gathered in what appears to be a social or celebratory setting. The men are dressed in formal attire, including suits, ties, and hats. Some of the men are holding beer mugs, suggesting that they are enjoying a drink together. The expressions on their faces indicate that they are engaged in conversation and are in good spirits.

The setting appears to be indoors, possibly in a tent or a large hall, as suggested by the background which includes what looks like a tent wall or a large fabric backdrop. The atmosphere seems jovial and relaxed, with the men appearing to be enjoying each other's company.

The photograph has a vintage feel, likely taken in the mid-20th century, given the style of clothing and the overall composition. The image also has some markings or text at the bottom, which might be related to the photograph's identification or cataloging.

Text analysis

Amazon