Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 23-31 |
| Gender | Female, 95.3% |
| Calm | 65.8% |
| Sad | 13.4% |
| Happy | 8.6% |
| Fear | 7.7% |
| Confused | 1.6% |
| Disgusted | 1.2% |
| Surprised | 1% |
| Angry | 0.6% |

Feature analysis

AWS Rekognition

| Feature | Confidence |
| --- | --- |
| Person | 99% |

Categories

Imagga

Created on 2022-01-08

| Category | Confidence |
| --- | --- |
| beaches seaside | 47.4% |
| paintings art | 37.9% |
| nature landscape | 8.4% |
| text visuals | 2.8% |
| streetview architecture | 1.7% |

Captions

Microsoft

Created by unknown on 2022-01-08

| Caption | Confidence |
| --- | --- |
| a group of people sitting on a bench | 41.9% |
| a group of people sitting at a table | 41.8% |
| a group of people posing for a photo | 41.7% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-15

a photograph of a train.

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

a photograph of a group of people standing in front of a bus

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-16

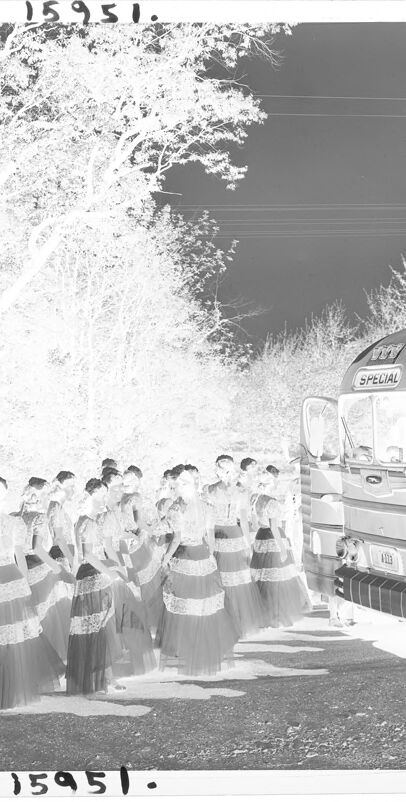

This image is a photographic negative showing a group of women dressed in identical outfits, likely formal or performance gowns with lacy layers and full skirts, standing near a vintage bus with a sign that reads "SPECIAL." The bus appears to be parked on a road or pathway surrounded by trees in a wooded area. There is a cart or wagon visible to the left. The negative effect creates a stark contrast, inverting the colors, making it a monochromatic, high-contrast image.

Created by gpt-4o-2024-08-06 on 2025-06-16

The image is a photographic negative depicting a group of women dressed in matching outfits, standing in formation. They are wearing long, flowing dresses with horizontal stripes. The setting appears to be outdoors, as there are a number of trees visible in the background. To the right of the group, there is a large bus with the word "SPECIAL" displayed on a sign above its windshield. The bus is a vintage style with distinct horizontal lines and rounded shapes. The women are standing on a path or road next to the bus. The negative effect makes the sky appear dark and the trees and ground light, with the colors inverted. The use of negative film suggests this image is from an earlier time when film photography was common.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-19

The image shows a group of people in traditional dress, possibly a religious or cultural procession, walking along a road near a wooded area. There appears to be a special vehicle, likely a bus or van, parked on the side of the road. The image has a black and white, historical aesthetic, suggesting this is an older photograph.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-19

This appears to be a black and white photograph from 1951 (as indicated by the number on the image) showing a group of people lined up near a bus. The group is wearing what appears to be matching outfits with tiered or layered skirts, suggesting this might be a choir, dance group, or performance ensemble. The scene is set outdoors with trees visible in the background. A vintage-style bus is visible on the right side of the image. The lighting creates a bright, almost ethereal quality to the photograph. There's also what appears to be a bicycle visible on the left side of the image.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-09

The image depicts a group of women in traditional attire standing alongside a bus, with trees and foliage visible in the background. The women are dressed in long skirts and tops, possibly for a cultural or ceremonial event. They appear to be gathered near the bus, which is parked on the side of the road or in a designated area. The bus features a sign on the front that reads "SPECIAL," suggesting it may be a charter or tour bus. The overall atmosphere of the image suggests a sense of community and celebration, with the women likely participating in a cultural or social gathering.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-09

The image depicts a group of women in traditional Hawaiian attire standing on the side of a road, with a bus parked nearby. The women are dressed in long, dark skirts and tops adorned with lace or floral patterns, and they appear to be waiting for something or someone. The bus, which is parked on the right side of the image, has a sign on the front that reads "SPECIAL" in bold letters. In the background, there are trees and bushes, suggesting that the scene is set in a rural or natural area. Overall, the image conveys a sense of anticipation and expectation, as if the women are waiting for an event or activity to begin.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-07

The black-and-white photograph shows a group of women standing in a line, possibly on the side of the road. They are wearing dresses with lace trims, and their hair is tied back. They are looking in the same direction, possibly towards the bus. The bus is parked on the side of the road, and its front is facing the women. The bus has a sign that reads "SPECIAL" on the front. The area is surrounded by trees, and there are power lines in the distance.

Created by amazon.nova-pro-v1:0 on 2025-06-07

The image is a black-and-white photograph of a group of people standing in a row, possibly on a road. The people are wearing long dresses with lace and have their hair tied up. The dresses have a white and black pattern. There is a bus parked on the right side of the road. A motorcycle is parked on the left side of the road. The road is surrounded by trees.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-16

Here's a description of the image:

Overall Impression:

The image appears to be an inverted, or negative, photograph. This means that light and dark areas are reversed.

Foreground:

- A group of people, likely women, are standing in a line. They are dressed in what seem to be gowns or dresses, possibly formal attire.

- A large bus is present, with "SPECIAL" labeled above the front windshield.

Background:

- The scene is set outdoors, likely in a wooded area.

- Tall trees are visible.

- The sky is dark.

Additional Details:

- A vehicle or object resembling a wagon is visible on the left side.

- Someone appears to be standing at the side, near the wagon.

- The photograph's number "15951." is written at the top and bottom.

Possible Interpretations:

The image could be a snapshot of a special event, perhaps a performance, or gathering, involving the group of women and the bus. The setting suggests the event is taking place outdoors. The inverted nature of the photo may complicate immediate details.

Created by gemini-2.0-flash on 2025-05-16

The image is an inverted, black and white photograph of a group of young women in long dresses lined up alongside a large bus, possibly for a special event or performance. The women are wearing similar gowns with a patterned design on the bodice and horizontal stripes across the skirt. The bus has a rounded shape and the word "SPECIAL" displayed above the windshield. The scene takes place outdoors, with trees lining the background. In the distance, there is a small vehicle or piece of equipment, and a figure can be seen standing near it. The overall tone of the photo suggests a formal or ceremonial occasion. The numbers "15951" appear at the top and bottom of the image, indicating a catalog or reference number.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-01

The image is a black-and-white photograph showing a group of people dressed in traditional attire, possibly participating in a cultural or ceremonial event. The individuals are wearing long, patterned skirts and blouses, and some appear to be holding objects or performing a dance. They are standing in a line, facing forward, and are positioned near a modern bus that has the word "SPECIAL" written on it. The scene is set outdoors, with trees and foliage visible in the background, suggesting a natural or rural setting. There is also a bicycle leaning against a tree on the left side of the image. The photograph has a somewhat grainy quality and includes a number "15951" printed at the top and bottom, which might be a reference or catalog number.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-05

The image is a black-and-white photograph that appears to be from an older era, judging by the style of the bus and the clothing worn by the individuals. The bus is large and retro, with a streamlined design that suggests it could be from the mid-20th century. It is labeled "SPECIAL," and its front features a logo, though the details of the logo are not clear.

In the foreground, a group of individuals dressed in traditional attire stands in a line, facing away from the camera. Their clothing consists of long, flowing dresses with intricate patterns, indicating a cultural or ceremonial significance. The individuals are positioned in a line, suggesting they might be participating in a procession or performance.

The background includes trees and some natural elements, indicating that the photograph was taken outdoors in a wooded or rural area. The lighting in the image is bright, possibly suggesting a daytime scene with sunlight filtering through the trees. The photo has a number, "15951," imprinted on it, which could be an identifier or catalog number.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-05

This black-and-white image, marked with the number "15951" at the top and bottom, appears to be an infrared photograph. It shows a group of people dressed in traditional attire, possibly for a cultural or ceremonial event. The individuals are gathered near a large bus labeled "SPECIAL." The background features trees with a distinctive white appearance, characteristic of infrared photography. The overall scene suggests a rural or natural setting.