Machine Generated Data

Tags

Amazon

created on 2022-01-09

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-09

Google

created on 2022-01-09

| Tag | Confidence |
|---|---|
| Photograph | 94.1 |
| Smile | 93.4 |
| Adaptation | 79.2 |
| Eyewear | 77.1 |
| Chair | 75.7 |
| Snapshot | 74.3 |
| Vintage clothing | 73.5 |
| Art | 71.9 |
| Monochrome photography | 70.7 |
| Monochrome | 69.9 |
| Room | 66.1 |
| Stock photography | 64.8 |
| Fashion design | 64.1 |
| Sitting | 62.1 |
| Necklace | 61.8 |
| Photo caption | 59.2 |
| Knee | 58.3 |
| Retro style | 55.5 |
| History | 54.8 |
| Publication | 53.2 |

Microsoft

created on 2022-01-09

| Tag | Confidence |
|---|---|
| text | 99.6 |
| person | 98.1 |
| human face | 95.3 |
| black and white | 94.7 |
| clothing | 90.4 |
| smile | 76.1 |
| white | 70.5 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

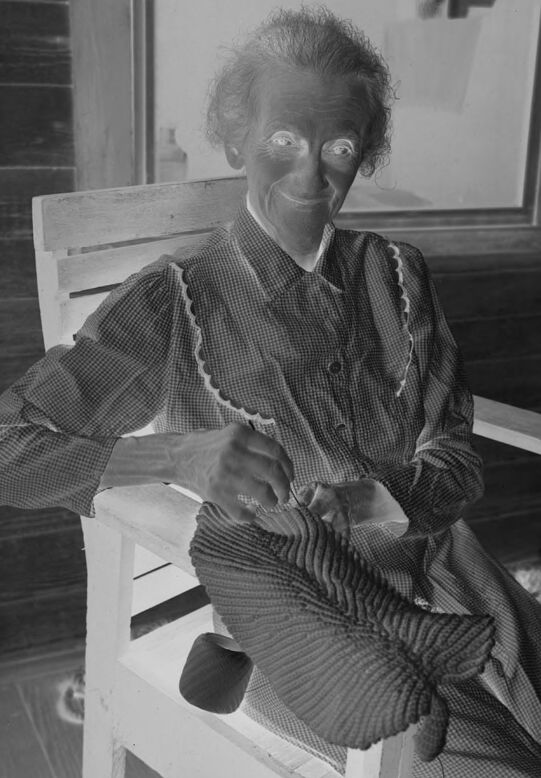

| Attribute | Value |
|---|---|
| Age | 54-62 |
| Gender | Female, 89.8% |
| Happy | 59.3% |
| Confused | 10.4% |
| Calm | 9.2% |
| Surprised | 7.2% |
| Sad | 4.8% |
| Fear | 3.6% |
| Disgusted | 3.2% |
| Angry | 2.3% |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 92.4% |
| people portraits | 4.3% |
| streetview architecture | 2.2% |

Captions

Microsoft

created on 2022-01-09

| Caption | Confidence |
|---|---|
| an old photo of a person | 78.9% |
| old photo of a person | 74.8% |
| an old photo of a person | 74.7% |

Text analysis

Amazon

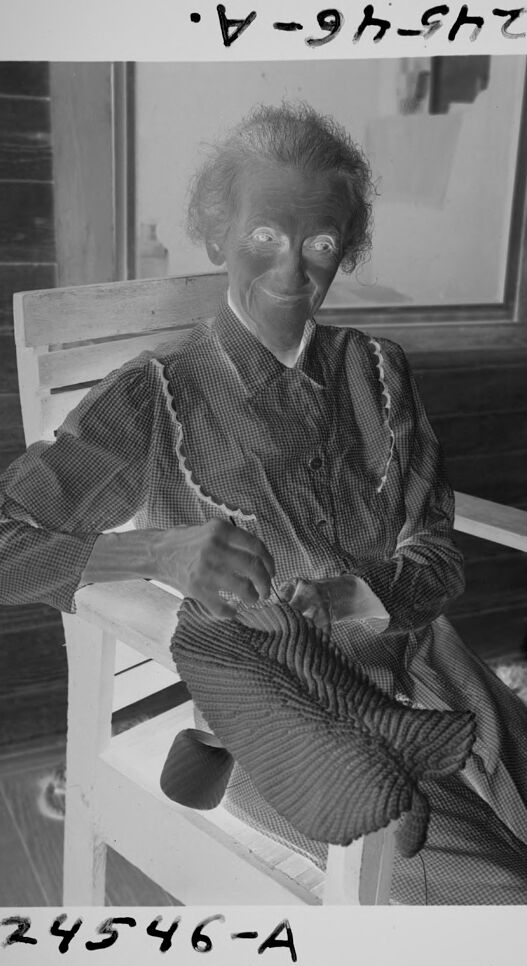

24546-A

24546-A.
