Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 36-44 |
| Gender | Male, 97.3% |
| Calm | 42% |
| Fear | 18.7% |
| Disgusted | 18.2% |
| Surprised | 12.4% |
| Happy | 3.7% |
| Confused | 2.1% |
| Sad | 1.5% |
| Angry | 1.3% |

Feature analysis

Amazon

AWS Rekognition

| Person | 98.5% |

Categories

Imagga

Created on 2022-01-08

| Category | Confidence |
|---|---|
| paintings art | 34.8% |
| streetview architecture | 22.8% |
| interior objects | 15% |
| people portraits | 14.7% |
| beaches seaside | 8.9% |
| text visuals | 1.7% |

Captions

Microsoft

Created by unknown on 2022-01-08

| Caption | Confidence |
|---|---|
| a group of people posing for a photo | 95.5% |
| a group of people posing for the camera | 95.4% |
| a group of people posing for a picture | 95.3% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-13

a group of children in costume.

Salesforce

Created by general-english-image-caption-blip on 2025-05-22

a photograph of a group of people in costumes and masks

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-16

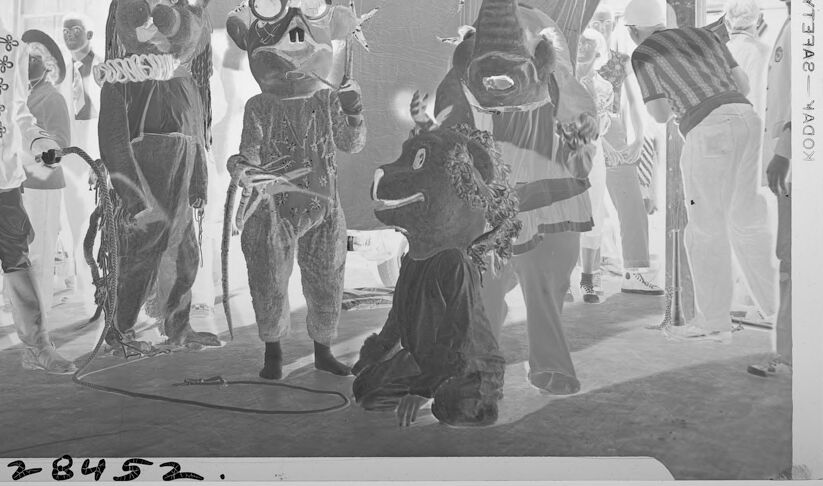

This image appears to be a photographic negative, which displays inverted colors and tones. It depicts a group of people dressed in costumes, including several wearing large animal-themed masks or full-body suits. Among the costumes are characters resembling bears, mice, and an elephant. There is also a person dressed in a uniform with decorative buttons and a bowler hat, possibly resembling a circus ringmaster or performer. The scene seems to take place indoors, possibly in a tent or large hall, with star-shaped decorations hanging as part of the background. The atmosphere is playful and theatrical.

Created by gpt-4o-2024-08-06 on 2025-06-16

The image appears to be a black-and-white photographic negative of a group of people dressed in various costumes, likely for a performance or event. The costumes include animal suits and theatrical clothing, which include a bear, a mouse with large glasses, and an elephant with a long trunk. There is also a person dressed in a military-style outfit with a bowler hat and what appears to be a fake mustache. The group is posed under a large curtain backdrop decorated with star shapes in what looks like a stage or theater setting. The inclusion of people in more ordinary clothing mingling around suggests it might be a behind-the-scenes moment or preparation for a show. The handwritten numbers and "Kodak Safety" on the edge of the negative indicate it is a vintage photograph.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-18

The image appears to depict a group of people dressed in elaborate, whimsical costumes. The costumes include animal-like masks, oversized hats, and other fantastical elements. The setting seems to be a stage or performance space, with decorative backdrops and lighting. The overall scene has a festive, theatrical quality, suggesting some kind of costume party or performance.

Created by us.anthropic.claude-3-opus-20240229-v1:0 on 2025-06-18

The black and white photograph depicts a group of people in elaborate costumes posing together on a stage or set. The costumes appear to be animal-themed, with some individuals dressed as monkeys or apes, while others seem to be wearing more abstract or fantastical outfits. There are star-shaped decorations hanging above the group.

The costumes incorporate various materials like fur, fabric, and possibly paper mache or other sculpted elements for the headpieces and masks. The overall aesthetic has a theatrical, whimsical quality, perhaps for a stage production or themed event.

The image captures an interesting moment with the costumed performers gathered together, some with exaggerated poses and expressions conveyed through their body language, adding to the playful and imaginative atmosphere of the scene. The grainy quality and style of the photograph suggests it may be from the early to mid 20th century.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-18

This appears to be a historical black and white photograph showing a group of people in what looks like a celebratory or festive gathering. The individuals are wearing various styles of clothing including hats and casual wear typical of an earlier era. The image has a somewhat grainy quality characteristic of older photography. There are some decorative star-like shapes visible in the background, suggesting this might be from a party or celebration. The photograph appears to be taken indoors, with white pillars or posts visible in the frame. The lighting creates interesting shadows and contrasts throughout the scene. At the bottom of the image, there appears to be a number (28932), suggesting this might be from an archived collection or catalog.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-10

The image is a black and white photograph of a group of people in costumes, likely taken at a carnival or circus event. The photo is labeled "KODAK SAFETY" on the right side, indicating that it was taken using a safety film process.

In the foreground, there are several individuals dressed in elaborate costumes, including a man in a top hat and a woman in a long dress. Some of the people are wearing masks or headpieces, adding to the festive atmosphere. A few of the individuals appear to be holding props or instruments, such as a cane or a trumpet.

In the background, there are more people visible, although they are less clearly defined due to the low contrast and graininess of the image. There appears to be a large banner or backdrop behind the group, which may be part of the carnival or circus setting.

Overall, the image captures a lively and colorful scene, with a sense of excitement and energy emanating from the people and their costumes. The use of black and white photography adds a sense of nostalgia and timelessness to the image, making it feel like a relic from a bygone era.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-10

This black-and-white photograph captures a lively scene of individuals dressed in various costumes, including animal masks and outfits. The group is gathered in front of a large, dark-colored backdrop adorned with star-shaped decorations.

In the foreground, a person wearing a bear costume stands alongside another individual dressed as a mouse, while a third person is attired in a bull costume. To the left of the image, a man is dressed in a military-style uniform, complete with a hat and gloves.

The background of the image features a group of people, some of whom are also wearing costumes. The overall atmosphere of the photograph suggests that it was taken at a festive event or celebration, possibly a carnival or circus.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-08

The image shows a group of people dressed in animal costumes, performing in a circus-like setting. They are standing in a row, with some of them holding props or instruments. The costumes include a bear, a monkey, a lion, and a dog. The image has a vintage look, with a black-and-white filter and a slightly blurry effect. In the background, there is a curtain with stars and other decorations. The image also has a watermark with the number "28452" and some text.

Created by amazon.nova-pro-v1:0 on 2025-06-08

A black-and-white photo of people in costumes standing in front of a large canvas with starfish and an elephant. They are probably performing a show, as they are wearing costumes. The elephant is kneeling on the ground, while the others are standing. There are people standing behind them, watching the show. The photo has a watermark on the right side that reads "YVETTE YAKOV."

Google Gemini

Created by gemini-2.0-flash-lite on 2025-05-17

Here's a description of the image:

Overall Impression:

The image is a vintage photograph, appearing as a negative print (where light and dark values are reversed). It depicts a group of people, some in costumes, likely at an event or performance. The setting seems to be outdoors, possibly under a makeshift tent or awning.

Key Elements:

- Costumed Figures: Several individuals are wearing elaborate costumes, resembling animal-like characters or perhaps fantasy creatures. There's a bear-like figure, a mouse or rat-like figure with goggles, and another with an elephant head. One appears to be wearing a lion costume.

- Dressed Man: A man in a military-style jacket and a bowler hat is the most prominent figure. He appears to be a central element.

- Background: Other people are visible in the background, some seemingly watching or participating in the event. There's also a hint of a basketball hoop on a wall.

- Decorations: There are star-shaped decorations suspended, along with draped fabric.

- General atmosphere: The photograph has a slightly eerie or whimsical feel, reflecting the era in which it was taken.

Created by gemini-2.0-flash on 2025-05-17

The image appears to be a black and white photograph, perhaps from an old film negative, depicting a scene of costumed individuals and onlookers, possibly at a parade or festival.

In the foreground, there are several figures dressed in elaborate costumes. These costumes include various animal-like masks or full-body outfits. One figure is wearing a bowler hat and a distinctive jacket with many button-like closures. He seems to be holding a leash or rope. Other costumes resemble a bear-like creature, a rodent-like figure with goggles, and an elephant head. There's also a figure in a costume that appears to be kneeling, with a dog-like head.

Behind the costumed figures, there's a crowd of people, some of whom seem to be watching the spectacle. The backdrop includes a large piece of fabric or a banner with star-like decorations. The environment appears to be outdoors, possibly near a building with a basketball hoop visible in the background. The overall impression is that of a festive and somewhat surreal event.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-01

The image depicts a group of individuals dressed in elaborate costumes, likely participating in a theatrical performance or a masquerade event. The costumes are diverse and detailed, suggesting a theme that might be fantastical or whimsical. Here is a detailed breakdown of the scene:

Characters and Costumes:

- Leftmost Figure: Dressed in a military-style uniform with a hat, featuring decorative elements and possibly epaulettes.

- Second Figure: Wearing a bear costume with a ruffled collar and large, round ears.

- Third Figure: Dressed in a costume resembling an animal, possibly a mouse or rat, with large round ears and goggles.

- Fourth Figure: Wearing a costume that includes a tall, conical hat with star-like decorations and a mask.

- Fifth Figure: Dressed in a costume resembling an animal, possibly a horse or donkey, with a large headpiece and decorative elements.

Setting:

- The scene appears to be indoors, possibly in a gymnasium or a large hall, as indicated by the basketball hoop in the background.

- The backdrop includes a curtain or stage setup, suggesting a performance or show.

Activity:

- The individuals are standing in a line, possibly preparing for a performance or posing for the photograph.

- Some figures are interacting with each other, adding to the lively atmosphere of the event.

Photographic Details:

- The image is in black and white, giving it a vintage or historical feel.

- There is a watermark or stamp on the right side of the image, indicating it might be from a professional photography service.

Overall, the image captures a moment of festivity and creativity, with participants fully engaged in their costumed roles.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-03

This black-and-white photograph depicts a lively scene, likely from a cultural or community event. The setting appears to be indoors, possibly a tent or a large hall, given the visible structure and decor.

In the foreground, there are four individuals dressed in costumes that resemble animals, possibly as part of a performance or parade. The costumes include large, animal-like heads, and the individuals are wearing costumes that could be associated with a specific theme or character, such as a rat, a dog, and possibly other animals. One person is kneeling, while the others stand, and they are interacting with people in the background.

The individuals in the background are dressed more casually and seem to be observing or participating in the event. Some appear to be wearing hats, and there is a sense of movement and activity, suggesting a festive or celebratory atmosphere.

The image has a vintage feel, indicated by the photographic style and the overall composition. The lower part of the image has a number "28452" written on it, which might be an identification or catalog number for the photograph.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-03

This black-and-white image depicts a lively scene, likely from a cultural or theatrical performance. Several individuals are dressed in elaborate animal costumes, possibly representing bears, elephants, and other animals, complete with masks and detailed outfits. Some of the performers are wearing suits and hats, suggesting a mix of formal and whimsical attire. The setting appears to be under a tent or canopy, with a backdrop featuring decorative elements. The overall atmosphere is festive and engaging, with participants actively involved in the performance. The image has a vintage quality, indicating it may be from an earlier time period.