Machine Generated Data

Tags

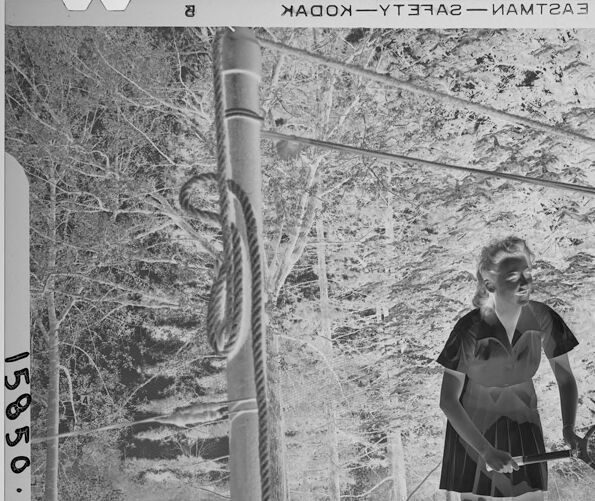

Amazon

created on 2022-01-08

Clarifai

created on 2023-10-25

| Tag | Confidence (%) |
| --- | --- |
| people | 99.8 |
| tennis racket | 99.2 |
| racket | 99.2 |
| adult | 98.9 |
| sports equipment | 98.8 |
| recreation | 98.5 |
| tennis | 98.4 |
| tennis player | 98 |
| one | 98 |
| two | 97.9 |
| woman | 97.1 |
| group together | 97 |
| man | 96.4 |
| wear | 96.3 |
| athlete | 95.8 |
| three | 92.9 |
| Wimbledon | 92.9 |
| competition | 92.8 |
| web | 92.6 |
| court | 91.2 |

Imagga

created on 2022-01-08

| Tag | Confidence (%) |
| --- | --- |
| man | 32.2 |
| barrier | 31.6 |
| obstruction | 23.5 |
| people | 22.9 |
| male | 22.1 |
| crutch | 21.7 |
| person | 20.4 |
| adult | 20.1 |
| business | 19.4 |
| staff | 17.9 |
| sport | 16.9 |
| stick | 16.4 |
| structure | 16 |
| businessman | 15.9 |
| outdoors | 15.7 |
| attractive | 15.4 |
| portrait | 14.2 |
| suit | 13.8 |
| city | 12.5 |
| urban | 12.2 |
| men | 12 |
| happy | 11.9 |
| professional | 11.9 |
| work | 11.8 |
| architecture | 11.7 |
| black | 11.6 |
| lifestyle | 11.6 |
| job | 11.5 |
| sexy | 11.2 |
| corporate | 11.2 |
| outside | 11.1 |
| day | 11 |
| outdoor | 10.7 |
| fashion | 10.5 |
| pretty | 10.5 |
| women | 10.3 |
| company | 10.2 |
| active | 10 |
| exercise | 10 |
| building | 9.9 |
| worker | 9.8 |
| ball | 9.8 |
| human | 9.7 |
| tennis | 9.7 |
| fun | 9.7 |
| success | 9.7 |
| wall | 9.6 |
| street | 9.2 |
| office | 8.9 |
| court | 8.8 |
| athlete | 8.7 |
| walk | 8.6 |
| competition | 8.2 |
| student | 8.1 |
| stylish | 8.1 |
| fitness | 8.1 |
| posing | 8 |
| cute | 7.9 |
| standing | 7.8 |
| modern | 7.7 |
| hand | 7.6 |
| career | 7.6 |
| one | 7.5 |
| silhouette | 7.4 |
| holding | 7.4 |
| style | 7.4 |
| action | 7.4 |
| sidewalk | 7.4 |
| alone | 7.3 |
| playing | 7.3 |
| businesswoman | 7.3 |
| smiling | 7.2 |
| recreation | 7.2 |
| handsome | 7.1 |

Google

created on 2022-01-08

| Tag | Confidence (%) |
| --- | --- |
| Gesture | 85.3 |
| Black-and-white | 83.6 |
| Art | 80.3 |
| Tints and shades | 77.4 |
| Monochrome photography | 75.4 |
| Monochrome | 73.2 |
| Visual arts | 67.9 |
| Rectangle | 67.8 |
| Recreation | 67.5 |
| Tree | 65.2 |
| Vintage clothing | 65 |
| Street | 64 |
| Net | 62.9 |
| Shadow | 61.5 |
| Circle | 58.3 |
| Racquet sport | 57.3 |
| Racket | 56.8 |
| Human leg | 54.2 |

Microsoft

created on 2022-01-08

| Tag | Confidence (%) |
| --- | --- |
| outdoor | 99 |
| text | 98.7 |
| street | 90.5 |
| black and white | 87.7 |
| tennis | 86.1 |
| athletic game | 85.6 |
| sport | 83.7 |
| footwear | 83.6 |
| white | 67.5 |
| monochrome | 61.5 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 35-43 |
| Gender | Male, 77.1% |
| Calm | 99.3% |
| Sad | 0.3% |
| Happy | 0.1% |
| Surprised | 0.1% |
| Confused | 0.1% |
| Angry | 0.1% |
| Disgusted | 0% |
| Fear | 0% |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Amazon

Person

Shoe

Tennis Racket

Categories

Imagga

| Category | Confidence |
| --- | --- |
| streetview architecture | 99.1% |

Captions

Microsoft

created on 2022-01-08

| Caption | Confidence |
| --- | --- |
| a person with a racket | 82.9% |
| a person is holding a racket | 74.2% |
| a person holding a sign | 74.1% |

Text analysis

Amazon

a

15850.

HAGON-YT3RA2- MAMTZA 3

15850.

HAGON-YT3RA2-

MAMTZA

3

15850.