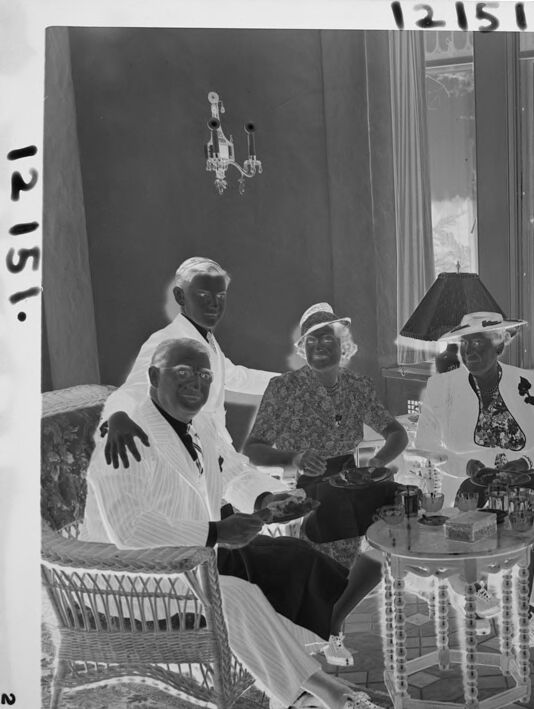

Machine Generated Data

Tags

Amazon

created on 2022-01-09

| Tag | Confidence |
|---|---|
| Furniture | 99.7 |
| Person | 98.6 |
| Human | 98.6 |
| Couch | 98.4 |
| Clothing | 97.8 |
| Apparel | 97.8 |
| Person | 96.8 |
| Person | 96.3 |
| Person | 93.1 |
| Person | 92.5 |
| Person | 92.1 |
| Person | 91 |
| Chair | 91 |
| Living Room | 88.3 |
| Room | 88.3 |
| Indoors | 88.3 |
| Face | 80 |
| Sitting | 78 |
| People | 77.2 |
| Wood | 66 |
| Photography | 64.2 |
| Photo | 64.2 |
| Portrait | 64.2 |
| Plant | 63.4 |
| Baby | 58.2 |
| Hat | 55.6 |
| Flower | 55.4 |
| Blossom | 55.4 |

Clarifai

created on 2023-10-26

| Tag | Confidence |
|---|---|
| people | 99.9 |
| group | 99.4 |
| adult | 97.8 |
| music | 97 |
| group together | 96.9 |
| man | 96.6 |
| woman | 96.3 |
| musician | 94.7 |
| child | 90.4 |
| several | 90.2 |
| education | 90 |
| many | 90 |
| leader | 89.2 |
| instrument | 87.8 |
| actress | 87.7 |
| stringed instrument | 87.6 |
| furniture | 86.1 |
| wear | 85.9 |
| administration | 85.4 |
| recreation | 83.4 |

Imagga

created on 2022-01-09

| Tag | Confidence |
|---|---|
| brass | 100 |
| wind instrument | 100 |
| musical instrument | 74.7 |
| man | 26.9 |
| people | 25.1 |
| person | 23.7 |
| male | 23.4 |
| adult | 21.5 |
| black | 19.8 |
| men | 18 |
| sport | 14.8 |
| boy | 14.8 |
| silhouette | 12.4 |
| couple | 12.2 |
| youth | 11.9 |
| active | 11.7 |
| group | 11.3 |
| to | 10.6 |
| play | 10.3 |
| business | 10.3 |
| music | 10.1 |
| dark | 10 |
| handsome | 9.8 |
| kid | 9.7 |
| businessman | 9.7 |
| professional | 9.6 |
| love | 9.5 |
| happy | 9.4 |
| holding | 9.1 |
| human | 9 |
| style | 8.9 |
| together | 8.8 |
| lifestyle | 8.7 |
| chair | 8.5 |
| portrait | 8.4 |
| child | 8.3 |
| fashion | 8.3 |
| teen | 8.3 |
| cornet | 8.2 |
| one | 8.2 |
| exercise | 8.2 |
| musician | 8 |
| family | 8 |
| happiness | 7.8 |
| modern | 7.7 |
| dance | 7.6 |
| player | 7.5 |
| fun | 7.5 |
| alone | 7.3 |
| teenager | 7.3 |
| girls | 7.3 |
| success | 7.2 |
| smiling | 7.2 |
| looking | 7.2 |
| body | 7.2 |
| home | 7.2 |
| art | 7.2 |
| posing | 7.1 |
| interior | 7.1 |

Google

created on 2022-01-09

| Tag | Confidence |
|---|---|
| Furniture | 93 |
| Black | 89.5 |
| Chair | 89.4 |
| Black-and-white | 84.3 |
| Style | 83.8 |
| Adaptation | 79.2 |
| Snapshot | 74.3 |
| Monochrome | 73.5 |
| Monochrome photography | 73.4 |
| Event | 73 |
| Suit | 72.4 |
| Vintage clothing | 71.9 |
| Classic | 70.2 |
| Room | 68.9 |
| Sitting | 68.4 |
| Font | 65.2 |
| Team | 63.2 |
| History | 62.7 |
| Photo caption | 60.3 |
| Curtain | 56.2 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 34-42 |
| Gender | Male, 99.9% |
| Sad | 88.4% |
| Calm | 9.4% |
| Happy | 0.7% |
| Confused | 0.6% |
| Fear | 0.4% |
| Surprised | 0.3% |
| Angry | 0.1% |
| Disgusted | 0.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 50-58 |
| Gender | Male, 93% |
| Calm | 99.9% |
| Sad | 0.1% |
| Disgusted | 0% |
| Angry | 0% |
| Confused | 0% |
| Happy | 0% |
| Surprised | 0% |
| Fear | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 21-29 |
| Gender | Female, 96.7% |
| Calm | 96.9% |
| Happy | 1.7% |
| Confused | 0.7% |
| Surprised | 0.3% |
| Angry | 0.1% |
| Disgusted | 0.1% |
| Sad | 0.1% |
| Fear | 0.1% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 37-45 |
| Gender | Male, 98.6% |
| Calm | 76.9% |
| Sad | 15.2% |
| Confused | 3.7% |
| Disgusted | 1.4% |
| Surprised | 1.4% |
| Angry | 0.7% |
| Fear | 0.5% |
| Happy | 0.3% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 47-53 |
| Gender | Male, 76.7% |
| Surprised | 30.7% |
| Sad | 14.6% |
| Happy | 13.9% |
| Disgusted | 12.9% |
| Calm | 9.4% |
| Fear | 7.3% |
| Confused | 6.2% |
| Angry | 5% |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Score |
|---|---|
| people portraits | 87.7% |
| events parties | 6.2% |
| food drinks | 2.7% |
| paintings art | 1.5% |

Captions

Microsoft

created on 2022-01-09

| Caption | Confidence |
|---|---|
| a group of people sitting at a table | 78.9% |
| a group of people sitting around a table | 78.8% |
| a group of people in a room | 78.7% |

Text analysis

Amazon

2

12151.

12155

12151

12151.

2

12151

12151.

2