Machine Generated Data

Tags

Amazon

created on 2022-01-08

Clarifai

created on 2023-10-25

Imagga

created on 2022-01-08

Google

created on 2022-01-08

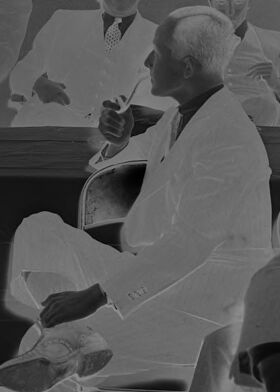

| Tag | Confidence |
|---|---|
| Black | 89.6 |
| Window | 79.8 |
| Font | 74.7 |
| Monochrome photography | 68.9 |
| Monochrome | 68.5 |
| Team | 68.2 |
| Art | 68 |
| Event | 68 |
| Room | 65.4 |
| Sitting | 64.3 |
| History | 63.5 |
| Photo caption | 59.9 |
| Collaboration | 50.1 |


Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 49-57 |
| Gender | Male, 93.5% |
| Sad | 42.8% |
| Calm | 20.4% |
| Happy | 19.3% |
| Angry | 5.1% |
| Confused | 4% |
| Disgusted | 3.9% |
| Surprised | 2.5% |
| Fear | 1.9% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 45-53 |
| Gender | Male, 99.8% |
| Sad | 98.7% |
| Calm | 0.5% |
| Confused | 0.3% |
| Happy | 0.2% |
| Disgusted | 0.1% |
| Fear | 0.1% |
| Angry | 0.1% |
| Surprised | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 24-34 |
| Gender | Male, 98.2% |
| Calm | 95.6% |
| Sad | 3.8% |
| Confused | 0.2% |
| Angry | 0.1% |
| Surprised | 0.1% |
| Disgusted | 0.1% |
| Happy | 0% |
| Fear | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 29-39 |
| Gender | Male, 100% |
| Calm | 97.8% |
| Happy | 1% |
| Sad | 0.5% |
| Confused | 0.3% |
| Angry | 0.2% |
| Disgusted | 0.1% |
| Surprised | 0.1% |
| Fear | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 24-34 |
| Gender | Male, 95.3% |
| Calm | 99.7% |
| Sad | 0.1% |
| Confused | 0.1% |
| Surprised | 0% |
| Angry | 0% |
| Disgusted | 0% |
| Happy | 0% |
| Fear | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 47-53 |
| Gender | Female, 78.2% |
| Sad | 68.1% |
| Happy | 8.5% |
| Calm | 8.5% |
| Confused | 8.3% |
| Surprised | 2.5% |
| Disgusted | 1.7% |
| Fear | 1.5% |
| Angry | 1% |


Categories

Imagga

| Category | Confidence |
|---|---|
| people portraits | 33.5% |
| events parties | 32.6% |
| streetview architecture | 17% |
| beaches seaside | 8.5% |
| text visuals | 4.1% |
| cars vehicles | 1.4% |
| food drinks | 1.1% |

Captions

Microsoft

created on 2022-01-08

| Caption | Confidence |
|---|---|
| a group of people sitting at a table in front of a window | 86.2% |
| a group of people sitting in front of a window | 83.4% |
| a group of people standing in front of a window | 83.3% |

Text analysis

Amazon

3/29/39

9530.

9530

-

AZOA

9530.

9530.

3|29|39.

9530.

3|29|39.