Machine Generated Data

Tags

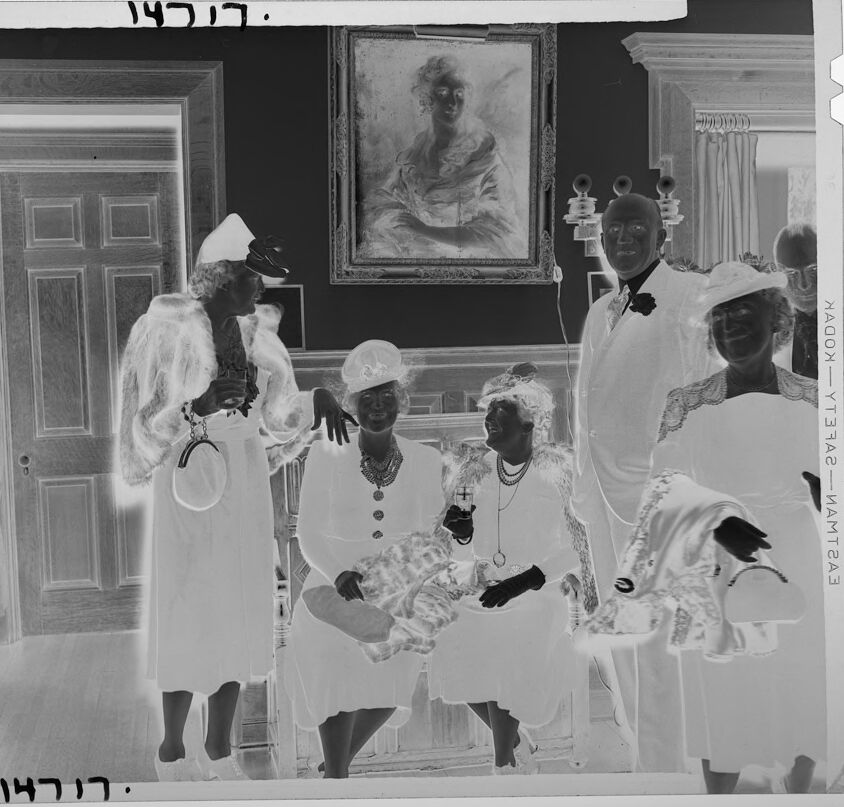

Amazon

created on 2022-01-15

| Tag | Confidence |
|---|---|
| Person | 99.5 |
| Human | 99.5 |
| Person | 98.8 |
| Person | 98.7 |
| Person | 98.2 |
| Person | 97.8 |
| Clothing | 97.1 |
| Apparel | 97.1 |
| Person | 92.9 |
| Person | 92.8 |
| Person | 91.6 |
| Face | 89.6 |
| Person | 85.6 |
| Person | 78.3 |
| Person | 71.5 |
| Shirt | 68.9 |
| Floor | 63.7 |
| Portrait | 63.5 |
| Photography | 63.5 |
| Photo | 63.5 |
| Overcoat | 61.6 |
| Coat | 61.6 |
| Person | 61.6 |
| Flooring | 56.9 |
| People | 56.5 |
| Indoors | 56 |

Clarifai

created on 2023-10-26

Imagga

created on 2022-01-15

| Tag | Confidence |
|---|---|
| nurse | 34.1 |
| person | 28.1 |
| man | 26.9 |
| people | 26.2 |
| adult | 22.4 |
| male | 22 |
| professional | 21.6 |
| couple | 18.3 |
| men | 17.2 |
| two | 16.1 |
| teacher | 16 |
| businessman | 15.9 |
| business | 15.2 |
| portrait | 14.9 |
| clothing | 14.8 |
| lab coat | 14.5 |
| happy | 14.4 |
| patient | 14.2 |
| coat | 13.8 |
| indoors | 13.2 |
| women | 12.7 |
| old | 12.5 |
| medical | 12.4 |
| corporate | 12 |
| team | 11.6 |
| room | 11.6 |
| worker | 11.4 |
| brass | 11.4 |
| home | 11.2 |
| work | 11 |
| day | 11 |
| happiness | 11 |
| educator | 10.8 |
| family | 10.7 |
| wind instrument | 10.6 |
| job | 10.6 |
| group | 10.5 |
| adults | 10.4 |
| looking | 10.4 |
| case | 10.2 |
| smiling | 10.1 |
| smile | 10 |
| dress | 9.9 |
| office | 9.8 |
| black | 9.6 |
| bride | 9.6 |
| lifestyle | 9.4 |
| life | 9.1 |
| musical instrument | 8.9 |
| groom | 8.8 |
| colleagues | 8.7 |
| love | 8.7 |
| bright | 8.6 |
| doctor | 8.5 |
| senior | 8.4 |
| color | 8.3 |
| wedding | 8.3 |
| occupation | 8.2 |
| human | 8.2 |
| cornet | 8.1 |
| suit | 8.1 |
| hospital | 8.1 |
| history | 8 |
| building | 8 |
| scientist | 7.8 |
| standing | 7.8 |
| architecture | 7.8 |
| education | 7.8 |
| lab | 7.8 |
| sitting | 7.7 |
| laboratory | 7.7 |
| bouquet | 7.7 |
| sick person | 7.7 |
| health | 7.6 |
| casual | 7.6 |
| fashion | 7.5 |
| clothes | 7.5 |
| new | 7.3 |
| businesswoman | 7.3 |
| aged | 7.2 |
| interior | 7.1 |
| medicine | 7 |

Google

created on 2022-01-15

| Tag | Confidence |
|---|---|
| Photograph | 94.3 |
| Picture frame | 86.7 |
| Hat | 82.4 |
| Art | 81.9 |
| Monochrome | 77.2 |
| Monochrome photography | 75 |
| Snapshot | 74.3 |
| Vintage clothing | 70.9 |
| Uniform | 70.1 |
| Room | 69.2 |
| Event | 68.8 |
| Stock photography | 64.9 |
| Door | 64.3 |
| Painting | 63.3 |
| History | 63.2 |
| Illustration | 60.3 |
| Font | 57.2 |
| Presbyter | 52.2 |
| Photography | 51.7 |
| Photo caption | 51.6 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 48-54 |
| Gender | Male, 99.8% |
| Calm | 98.4% |
| Happy | 0.8% |
| Surprised | 0.4% |
| Confused | 0.2% |
| Sad | 0.1% |
| Disgusted | 0.1% |
| Angry | 0.1% |
| Fear | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 39-47 |
| Gender | Male, 99.9% |
| Sad | 80.7% |
| Happy | 6.8% |
| Fear | 5.3% |
| Calm | 3.8% |
| Surprised | 1.5% |
| Confused | 1.4% |
| Disgusted | 0.3% |
| Angry | 0.2% |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Possible |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 56.2% |
| people portraits | 27.5% |
| text visuals | 5.9% |
| interior objects | 3.7% |
| events parties | 3% |
| streetview architecture | 2.2% |

Captions

Microsoft

created on 2022-01-15

| Caption | Confidence |
|---|---|
| a group of people standing next to a window | 87.2% |
| a group of people standing in front of a window | 86.7% |
| a group of people standing in front of a mirror | 82.1% |

Text analysis

Amazon

14717.

14717

14J17.

14717.

NAGON-YT3RA2-MAMTZA3

14J17.

14717.

NAGON-YT3RA2-MAMTZA3