Machine Generated Data

Tags

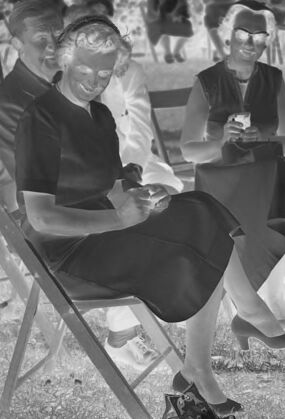

Amazon

created on 2022-01-09

| Tag | Confidence |
| --- | --- |
| Furniture | 100 |
| Person | 99.4 |
| Human | 99.4 |
| Person | 98.3 |
| Person | 98.1 |
| Person | 96.2 |
| Clothing | 93.4 |
| Apparel | 93.4 |
| Dog | 86.4 |
| Mammal | 86.4 |
| Animal | 86.4 |
| Canine | 86.4 |
| Pet | 86.4 |
| Suit | 86.1 |
| Coat | 86.1 |
| Overcoat | 86.1 |
| Meal | 85.5 |
| Food | 85.5 |
| Person | 84.9 |
| Person | 84.2 |
| Dress | 83.1 |
| Sitting | 82.8 |
| Face | 81.9 |
| Chair | 79.2 |
| People | 78.7 |
| Female | 78.7 |
| Outdoors | 78.6 |
| Crowd | 76.1 |
| Blonde | 75 |
| Teen | 75 |
| Girl | 75 |
| Woman | 75 |
| Kid | 75 |
| Child | 75 |
| Person | 74.8 |
| Text | 69.6 |
| Nature | 67.7 |
| Grass | 66.2 |
| Plant | 66.2 |
| Photography | 64.1 |
| Photo | 64.1 |
| Yard | 62.8 |
| Audience | 56.2 |
| Sand | 56.1 |
| Leisure Activities | 55.5 |

Clarifai

created on 2023-10-25

| Tag | Confidence |
| --- | --- |
| people | 99.9 |
| group together | 99.3 |
| group | 98.3 |
| chair | 97.8 |
| furniture | 97.7 |
| adult | 97.4 |
| administration | 96.8 |
| recreation | 96.4 |
| many | 96.2 |
| man | 94.3 |
| woman | 93.9 |
| seat | 93.8 |
| leader | 90.7 |
| sitting | 89.3 |
| child | 88.8 |
| monochrome | 87.8 |
| war | 85.6 |
| two | 85.4 |
| sit | 85 |
| wear | 84.4 |

Imagga

created on 2022-01-09

| Tag | Confidence |
| --- | --- |
| folding chair | 100 |
| chair | 100 |
| seat | 100 |
| furniture | 77.5 |
| furnishing | 38.2 |
| beach | 27.8 |
| sea | 21.9 |
| summer | 21.9 |
| sky | 21.7 |
| sun | 20.1 |
| water | 19.3 |
| vacation | 18 |
| sand | 17.4 |
| people | 16.7 |
| outdoor | 15.3 |
| ocean | 14.9 |
| day | 14.9 |
| travel | 14.8 |
| sunny | 14.6 |
| relaxation | 14.2 |
| landscape | 14.1 |
| leisure | 14.1 |
| chairs | 13.7 |
| man | 12.8 |
| building | 12.7 |
| empty | 12 |
| relax | 11.8 |
| horizon | 11.7 |
| coast | 11.7 |
| holiday | 11.5 |
| outdoors | 11.2 |
| tourism | 10.7 |
| sitting | 10.3 |
| resort | 10.3 |
| lifestyle | 10.1 |
| tree | 10 |
| happy | 9.4 |
| tropical | 9.4 |
| male | 9.2 |
| adult | 9 |
| fun | 9 |
| snow | 8.9 |
| urban | 8.7 |
| architecture | 8.6 |
| clouds | 8.4 |
| tranquil | 8.1 |
| sunset | 8.1 |
| light | 8 |
| business | 7.9 |
| table | 7.8 |
| idyllic | 7.5 |
| paradise | 7.5 |
| dark | 7.5 |
| silhouette | 7.4 |
| shore | 7.4 |
| rest | 7.4 |
| island | 7.3 |
| peaceful | 7.3 |
| grass | 7.1 |
| person | 7.1 |
| work | 7.1 |
| happiness | 7 |

Google

created on 2022-01-09

| Tag | Confidence |
| --- | --- |
| Product | 90.8 |
| Black | 89.5 |
| Black-and-white | 85.9 |
| Grass | 85.6 |
| Style | 83.9 |
| Folding chair | 80.4 |
| People | 79.7 |
| Adaptation | 79.3 |
| Chair | 77.6 |
| Monochrome | 76.6 |
| Snapshot | 74.3 |
| Monochrome photography | 73.9 |
| Vintage clothing | 73.1 |
| Recreation | 72.2 |
| Leisure | 71.4 |
| Event | 71 |
| Sitting | 68.4 |
| Stock photography | 63.8 |
| Room | 62.3 |
| Fun | 61.7 |

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 31-41 |
| Gender | Female, 99.2% |
| Happy | 96.4% |
| Sad | 1.2% |
| Fear | 0.9% |
| Surprised | 0.6% |
| Calm | 0.4% |
| Confused | 0.2% |
| Disgusted | 0.1% |
| Angry | 0.1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 31-41 |
| Gender | Male, 93.8% |
| Happy | 53.9% |
| Calm | 31.7% |
| Confused | 5.7% |
| Sad | 2.9% |
| Surprised | 2.7% |
| Angry | 1.5% |
| Disgusted | 1.3% |
| Fear | 0.3% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 42-50 |
| Gender | Male, 92% |
| Happy | 89.8% |
| Calm | 8.6% |
| Sad | 0.5% |
| Disgusted | 0.3% |
| Angry | 0.3% |
| Confused | 0.2% |
| Surprised | 0.1% |
| Fear | 0.1% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 53-61 |
| Gender | Male, 99.9% |
| Calm | 95.3% |
| Sad | 1.5% |
| Surprised | 0.9% |
| Disgusted | 0.8% |
| Happy | 0.6% |
| Angry | 0.4% |
| Confused | 0.4% |
| Fear | 0.2% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 16-22 |
| Gender | Male, 76.1% |
| Disgusted | 26.4% |
| Sad | 18.4% |
| Calm | 15.5% |
| Happy | 11.6% |
| Angry | 10.4% |
| Confused | 8.5% |
| Surprised | 6.4% |
| Fear | 2.7% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 21-29 |
| Gender | Male, 97% |
| Calm | 90.7% |
| Sad | 5.6% |
| Happy | 1.1% |
| Fear | 1% |
| Angry | 0.9% |
| Confused | 0.3% |
| Disgusted | 0.2% |
| Surprised | 0.1% |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| paintings art | 49.4% |
| streetview architecture | 18.1% |
| nature landscape | 11.5% |
| beaches seaside | 10.3% |
| people portraits | 5% |
| pets animals | 4.2% |

Captions

Microsoft

created by unknown on 2022-01-09

| Caption | Confidence |
| --- | --- |
| a group of people sitting in front of a crowd | 70.1% |
| a group of people sitting in a chair | 70% |
| a group of people sitting in a chair in front of a crowd | 69.9% |

Text analysis

Amazon

38603
