Machine Generated Data

Tags

Amazon

created on 2022-01-23

Clarifai

created on 2023-10-26

| Tag | Confidence |
|---|---|
| people | 99.9 |
| adult | 98.8 |
| man | 97.9 |
| two | 97.6 |
| group | 96.9 |
| cooking | 96.8 |
| monochrome | 96.2 |
| food | 94.2 |
| group together | 92.8 |
| meal | 92 |
| several | 91.1 |
| many | 90.8 |
| one | 90.7 |
| restaurant | 90.3 |
| administration | 90.1 |
| dinner | 90.1 |
| three | 89.8 |
| lunch | 89.1 |
| four | 87.8 |
| furniture | 87.3 |

Imagga

created on 2022-01-23

Google

created on 2022-01-23

| Tag | Confidence |
|---|---|
| Black | 89.9 |
| Black-and-white | 87.4 |
| Style | 84.1 |
| Hat | 80.2 |
| Adaptation | 79.3 |
| Monochrome photography | 79.3 |
| Monochrome | 78.7 |
| Art | 78.2 |
| Snapshot | 74.3 |
| Cooking | 71.9 |
| Event | 69.3 |
| Room | 68.1 |
| Visual arts | 65.1 |
| Stock photography | 64.9 |
| Picture frame | 64.5 |
| Photographic paper | 61.5 |
| Vintage clothing | 60.1 |
| Still life photography | 59.9 |
| Conversation | 55.6 |
| Service | 53.9 |

Microsoft

created on 2022-01-23

| Tag | Confidence |
|---|---|
| person | 98 |
| text | 97.7 |
| food | 94.2 |
| black and white | 93.8 |
| clothing | 87.2 |
| monochrome | 57.3 |
| preparing | 40.6 |
| cooking | 22.2 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Estimate |
|---|---|
| Age | 23-33 |
| Gender | Female, 80.8% |
| Calm | 99.9% |
| Sad | 0% |
| Confused | 0% |
| Surprised | 0% |
| Happy | 0% |
| Disgusted | 0% |
| Angry | 0% |
| Fear | 0% |

AWS Rekognition

| Attribute | Estimate |
|---|---|
| Age | 48-56 |
| Gender | Female, 94.1% |
| Calm | 87.7% |
| Sad | 9.6% |
| Confused | 1.4% |
| Disgusted | 0.4% |
| Surprised | 0.3% |
| Angry | 0.3% |
| Happy | 0.2% |
| Fear | 0.1% |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Likely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 99.7% |

Captions

Microsoft

created by unknown on 2022-01-23

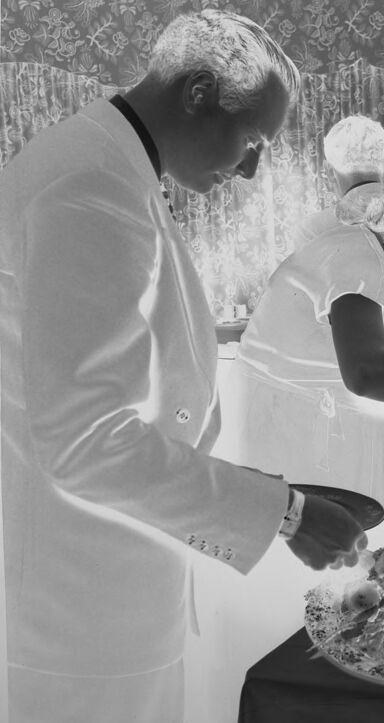

| Caption | Confidence |
|---|---|
| a person standing in front of a cake | 33.6% |

Clarifai

created by general-english-image-caption-blip on 2025-05-17

| Caption | Confidence |
|---|---|
| a photograph of a man and woman are serving themselves at a buffet | -100% |

Google Gemini

Created by gemini-2.0-flash on 2025-05-18

This is a black and white negative image depicting a buffet line or serving area, possibly at a formal event.

In the foreground, there's a large, ornate bowl filled with what appears to be a salad or a similar decorative food arrangement. Next to the bowl is a stack of plates, indicating that this is part of a self-serve setup.

Behind the buffet table, there are people, likely guests or servers. On the left, a man in a suit is holding a plate and looking at the food. To his right, a woman in what seems to be an apron or a serving uniform is also looking at the food. Further in the background, there is another person who seems to be serving or attending to the food.

The backdrop is a decorative curtain, and there's a cabinet filled with decorative plates or china behind the people. The cabinet is next to a side wall.

The overall impression is one of a formal gathering or party where food is being served in an elegant setting. The style and the photographic quality suggest that this image may be from an earlier period.

Created by gemini-2.0-flash-lite on 2025-05-18

Here's a description of the image:

The image is a black and white negative depicting what appears to be a formal gathering or meal. Several people are involved in what looks like serving food.

Key elements:

- People: The main focus is on a group of people. There's a man in a white suit in the foreground, looking down at a plate. Behind him, a woman and other individuals are tending to a table laden with food. One of the men is wearing a dark suit, and one of the women has her hair set in a bob with a very slight curl to it.

- Food and Table Setting: There are several serving dishes filled with food, likely a salad or a similar dish, in the center of the composition. There is a stack of plates, as well as some floral elements and plants.

- Background: In the background, there's a white cabinet with visible dishes. There are also floral curtains.

Overall impression:

The scene suggests a formal event, likely a party or a high-end dinner. The attire, the serving style, and the overall ambiance imply an upper-class setting from the mid-20th century.

Text analysis

Amazon