Machine Generated Data

Tags

Amazon

created on 2022-01-09

| Tag | Confidence |
| --- | --- |
| Person | 99.8 |
| Human | 99.8 |
| Person | 99.4 |
| Person | 99 |
| Person | 89.9 |
| Helicopter | 87.1 |
| Transportation | 87.1 |
| Vehicle | 87.1 |
| Aircraft | 87.1 |
| Person | 86.9 |
| Person | 79.2 |
| Clothing | 77.7 |
| Apparel | 77.7 |
| Building | 77.1 |
| Waterfront | 74.7 |
| Water | 74.7 |
| Machine | 74.2 |
| Shorts | 74.1 |
| Harbor | 69.7 |
| Pier | 69.7 |
| Dock | 69.7 |
| Port | 69.7 |
| Airplane | 66.3 |
| Military | 60.7 |
| Cruiser | 57.3 |
| Ship | 57.3 |
| Navy | 57.3 |
| Biplane | 56.3 |
| Sailor Suit | 55.8 |

Clarifai

created on 2023-10-25

| Tag | Confidence |
| --- | --- |
| people | 99.8 |
| vehicle | 98.6 |
| group together | 98.4 |
| group | 97.7 |
| transportation system | 96.8 |
| many | 95.8 |
| adult | 95.5 |
| military | 94.9 |
| administration | 94.4 |
| man | 94 |
| woman | 92.4 |
| wear | 91.7 |
| war | 91.2 |
| several | 91 |
| aircraft | 90.7 |
| watercraft | 89.7 |
| monochrome | 89.6 |
| airplane | 83.2 |
| three | 81.2 |
| leader | 77.8 |

Imagga

created on 2022-01-09

| Tag | Confidence |
| --- | --- |
| cockpit | 39.1 |
| man | 24.2 |
| war | 21.8 |
| male | 21.3 |
| equipment | 20.3 |
| vehicle | 19.6 |
| military | 19.3 |
| uniform | 16.6 |
| stage | 16.6 |
| industrial | 16.3 |
| engine | 15.4 |
| work | 14.9 |
| weapon | 14.6 |
| television camera | 14.3 |
| person | 14.3 |
| power | 14.3 |
| metal | 13.7 |
| industry | 13.6 |
| mechanical | 13.6 |
| factory | 13.5 |
| transportation | 13.4 |
| machine | 13.3 |
| engineer | 13.3 |
| steel | 13.2 |
| battle | 12.7 |
| army | 12.7 |
| technology | 12.6 |
| protection | 11.8 |
| gun | 11.8 |
| soldier | 11.7 |
| people | 11.7 |
| car | 11.6 |
| business | 11.5 |
| television equipment | 11.5 |
| device | 11.5 |
| engineering | 11.4 |
| platform | 11.3 |
| aviator | 11.3 |
| adult | 11 |
| danger | 10.9 |
| tank | 10.7 |
| automobile | 10.5 |
| transport | 10 |
| camouflage | 9.8 |
| outdoors | 9.7 |
| men | 9.4 |
| professional | 9.3 |
| training | 9.2 |
| occupation | 9.2 |
| worker | 8.9 |
| warfare | 8.9 |
| electronic equipment | 8.8 |
| mechanic | 8.8 |
| military vehicle | 8.6 |
| construction | 8.5 |
| wheel | 8.5 |
| leisure | 8.3 |
| playing | 8.2 |
| job | 8 |
| manufacturing | 7.8 |
| conflict | 7.8 |
| color | 7.8 |
| clothing | 7.8 |
| emergency | 7.7 |
| mask | 7.7 |
| ship | 7.6 |
| safety | 7.4 |
| building | 7.2 |
| recreation | 7.2 |
| history | 7.1 |
| game | 7.1 |
| to | 7.1 |
| working | 7.1 |
| sky | 7 |

Google

created on 2022-01-09

| Tag | Confidence |
| --- | --- |
| Motor vehicle | 90 |
| Vehicle | 89.9 |
| Black | 89.7 |
| Black-and-white | 86 |
| Style | 83.9 |
| Naval architecture | 83 |
| Monochrome photography | 75.1 |
| Monochrome | 74.6 |
| Crew | 68.2 |
| Boat | 66.8 |
| Watercraft | 65.8 |
| Room | 64.7 |
| Stock photography | 62.4 |
| Illustration | 61.7 |
| Vintage clothing | 61.1 |
| Photographic paper | 60.3 |
| History | 59.8 |
| Font | 59.1 |
| Tent | 58.9 |
| Ship | 58.2 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 49-57 |
| Gender | Male, 52.9% |
| Calm | 96.9% |
| Surprised | 1.5% |
| Confused | 0.4% |
| Sad | 0.3% |
| Angry | 0.3% |
| Disgusted | 0.2% |
| Happy | 0.2% |
| Fear | 0.2% |

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 24-34 |
| Gender | Female, 63% |
| Happy | 81% |
| Sad | 8.7% |
| Calm | 2.8% |
| Surprised | 2.1% |
| Fear | 2.1% |
| Confused | 1.6% |
| Angry | 0.9% |
| Disgusted | 0.7% |

Google Vision

| Attribute | Likelihood |
| --- | --- |
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
| --- | --- |
| streetview architecture | 87.2% |
| beaches seaside | 9.9% |

Captions

Microsoft

created on 2022-01-09

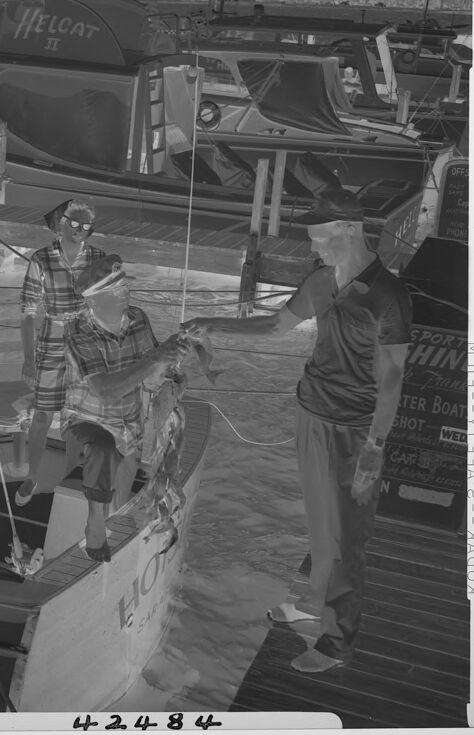

| Caption | Confidence |
| --- | --- |
| a group of people on a boat | 39.8% |
| a group of people standing on a boat | 36.9% |
| a group of people in a boat | 36.8% |

Text analysis

Amazon

TER

42484

SHOT

N

WED

BOAT

TER BOAT YT

HELCAT

II

E

PHONE

HO

OFFS

YT

FAST

HINE

CAR

SHOT and WED

CAR 50

50

FA

KODAK

k

2

SPORT3

roe

k Pranett

-

SAME

Ca

Pranett

not

and

ASSOCIAT

HELCAT

SPORT

HIN

TER BOAT

SHOT

WED

CA

4248 f

HOP

HELCAT

SPORT

HIN

TER

BOAT

SHOT

WED

CA

4248

f

HOP