Machine Generated Data

Tags

Amazon

created on 2022-01-22

Clarifai

created on 2023-10-26

Imagga

created on 2022-01-22

Google

created on 2022-01-22

| Face | 98.4 |
| Smile | 89 |
| Baby & toddler clothing | 85.2 |
| Happy | 84.9 |
| Baby | 83.1 |
| Toddler | 81.6 |
| Playing with kids | 80.5 |
| People | 78.5 |
| Art | 74.9 |
| Monochrome | 74.5 |
| Vintage clothing | 74.1 |
| Child | 73.8 |
| Monochrome photography | 73.3 |
| Stock photography | 65.4 |
| Rectangle | 65.3 |
| Sitting | 63.3 |
| Pattern | 62.4 |
| Room | 60.9 |
| Sleeve | 59.3 |
| Picture frame | 59 |

Color Analysis

Face analysis


AWS Rekognition

| Age | 0-3 |
| Gender | Female, 100% |
| Happy | 99.9% |
| Calm | 0% |
| Surprised | 0% |
| Sad | 0% |
| Angry | 0% |
| Disgusted | 0% |
| Fear | 0% |
| Confused | 0% |

AWS Rekognition

| Age | 0-3 |
| Gender | Female, 100% |
| Surprised | 99.7% |
| Calm | 0.1% |
| Happy | 0.1% |
| Fear | 0.1% |
| Confused | 0% |
| Disgusted | 0% |
| Angry | 0% |
| Sad | 0% |

Microsoft Cognitive Services

| Age | 0 |
| Gender | Female |

Microsoft Cognitive Services

| Age | 0 |
| Gender | Female |

Google Vision

| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very likely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Surprise | Possible |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| people portraits | 97.1% |
| paintings art | 2.6% |

Captions

Microsoft

created on 2022-01-22

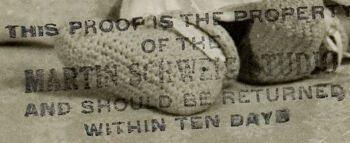

| a vintage photo of a baby | 95.7% |
| a vintage photo of a person holding a baby | 89.8% |
| a vintage photo of a baby holding a stuffed animal | 77.1% |

Text analysis

Amazon

MARTIN

AND

TEN

OF

BE

WITHIN

THIS

THE

WITHIN TEN DAYS

OF THE

RETURNED

DAYS

AND SHOULD BE RETURNED

PROPERTY

SHOULD

STUDIO

THIS PROOPOS THE PROPERTY

PROOPOS

MARTIN SCHWEIZ STUDIO

SCHWEIZ

THIS PROOF IS THE PROPER

OF TE

MARTIS SCHWE UD

AND SHOULD BE RETURNED

LAITHIN TEN DAYS

THIS

PROOF

IS

THE

PROPER

OF

TE

MARTIS

SCHWE

UD

AND

SHOULD

BE

RETURNED

LAITHIN

TEN

DAYS