Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 50-58 |
| Gender | Male, 85.4% |
| Calm | 99.7% |
| Sad | 0.1% |
| Happy | 0.1% |
| Surprised | 0% |
| Angry | 0% |
| Disgusted | 0% |
| Confused | 0% |
| Fear | 0% |

Feature analysis

Amazon

Clarifai

AWS Rekognition

| Label | Confidence |
| --- | --- |
| Person | 99.7% |

Categories

Imagga

Created on 2022-01-09

| Category | Confidence |
| --- | --- |
| paintings art | 100% |

Captions

Microsoft

Created by unknown on 2022-01-09

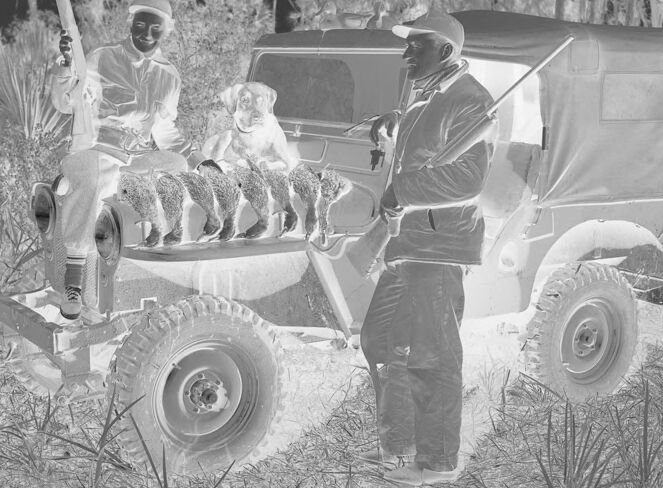

| Caption | Confidence |
| --- | --- |
| a group of people riding on the back of a truck | 55.2% |
| a group of men riding on the back of a truck | 45.3% |
| a group of people standing in front of a truck | 45.2% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-15

a family of four in a tractor.

Salesforce

Created by general-english-image-caption-blip on 2025-05-16

a photograph of a man in uniform standing next to a truck

OpenAI GPT

Created by gpt-4o-2024-08-06 on 2025-06-23

The response was filtered due to the prompt triggering Azure OpenAI's content management policy. The content filter results follow.

hate: safe

self-harm: safe

sexual: safe

violence: medium
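
The filter results above are plain `category: severity` pairs. As a minimal sketch, assuming exactly that line format, the following Python shows one way to read them and list the categories that were not rated `safe`. The function names `parse_filter_results` and `flagged_categories` are hypothetical helpers for illustration and are not part of any Azure OpenAI SDK.

```python
def parse_filter_results(text: str) -> dict[str, str]:
    """Parse 'category: severity' lines into a dict (assumed format)."""
    results: dict[str, str] = {}
    for line in text.splitlines():
        if ":" not in line:
            continue  # skip blank or unrelated lines
        category, severity = line.split(":", 1)
        results[category.strip()] = severity.strip()
    return results


def flagged_categories(results: dict[str, str]) -> list[str]:
    """Return the categories whose severity is anything other than 'safe'."""
    return [category for category, severity in results.items() if severity != "safe"]


# The four lines recorded for this image, copied from the record above.
RAW = """hate: safe
self-harm: safe
sexual: safe
violence: medium"""

results = parse_filter_results(RAW)
print(flagged_categories(results))
```

For this record the only category not rated `safe` is `violence`, which fits the hunting scene with firearms that the other captions describe.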

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-19

The image shows a group of people in an outdoor setting, surrounded by lush vegetation and trees. There is a vehicle, likely a truck or jeep, in the foreground, and the people appear to be engaged in some kind of outdoor activity or expedition. The people are wearing clothing typical of the time period, and the overall scene has a vintage, historical feel to it.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-19

This appears to be a vintage black and white photograph showing several people gathered around what looks like an early model motorcycle or motorized bicycle. The setting appears to be outdoors with Spanish moss hanging from trees in the background, suggesting this may be somewhere in the American South. The image has a grainy, historical quality typical of photographs from the early to mid-20th century. There's a vehicle or car visible in the background as well. The photograph has a reference number "92708" visible in the bottom corner.

Meta Llama

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-06-10

The image is a black-and-white photograph of two men posing with a jeep and a dog. The man on the left is sitting on the front of the jeep, holding a shotgun and wearing a hat, shirt, pants, and boots. The man on the right is standing next to the jeep, holding a shotgun and wearing a hat, jacket, pants, and boots. There are several dogs sitting on the back of the jeep.

The jeep has a canvas top and large tires. It is parked in a field with tall grass and trees in the background. The overall atmosphere of the image suggests that the men are on a hunting trip or adventure.

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-06-10

The image is a black-and-white photograph of two men standing beside a jeep, with a dog sitting on the back of the vehicle. The men are dressed in casual attire, with one wearing a hat and holding a long object, possibly a rifle or a stick. The other man is also wearing a hat and appears to be holding a rifle.

The jeep is parked in a grassy area, surrounded by trees and bushes. The dog, which appears to be a hunting breed, is sitting on the back of the jeep, looking alert and focused. The overall atmosphere of the image suggests that the men are on a hunting trip, and the dog is an integral part of their expedition.

In the background, there are several trees and bushes, which provide a natural setting for the scene. The sky is not visible, but the lighting suggests that it may be early morning or late afternoon. The image has a nostalgic feel to it, evoking a sense of adventure and camaraderie between the men and their canine companion.

Overall, the image captures a moment in time when two men and their dog are enjoying the great outdoors, engaged in a shared activity that brings them together. The photograph is a testament to the bond between humans and animals, and the joy that can be found in exploring the natural world.

Amazon Nova

Created by amazon.nova-lite-v1:0 on 2025-06-05

The image depicts a black-and-white photograph of two men, one seated and one standing, in a rural setting. The seated man is holding a rifle and appears to be posing for a photograph. He is wearing a hat and a long-sleeved shirt. The standing man is also holding a rifle and is dressed similarly, with a hat and a long-sleeved shirt. They are both wearing shoes. The seated man is sitting on a vehicle, which appears to be a pickup truck or a similar type of vehicle. The vehicle is parked in a grassy area with trees in the background. The image has a watermark in the bottom right corner.

Created by amazon.nova-pro-v1:0 on 2025-06-05

The black-and-white image features two men in a field with a dog and a vehicle. The man on the left is seated on the vehicle with his legs crossed, holding a rifle, and smiling. He is wearing a hat and boots. In front of him are several birds lying on the vehicle. The man on the right is standing beside the vehicle, holding a rifle, and wearing a hat and shoes.

Google Gemini

Created by gemini-2.0-flash on 2025-05-17

Certainly! Here's a description of the image based on what I can see:

General Impression:

The image appears to be a black and white negative of a photograph taken outdoors in a wooded area, possibly after a successful hunting expedition.

Detailed Elements:

- People: There are two men in the photo. One is sitting on the front of a jeep, holding a rifle upright. The other is standing next to the jeep, also holding a rifle.

- Jeep: A classic, likely military-style Jeep is present, providing a focal point.

- Game: Several ducks (or similar waterfowl) are laid out across the front of the Jeep. This suggests a hunting theme.

- Dog: There is a dog seated on the front of the jeep.

- Setting: The environment includes trees, likely wetland vegetation and grass, suggesting a marsh or swamp-like area.

- Clothing: The men are wearing outdoor clothing, possibly hunting attire.

Interpretation:

The photograph seems to capture a hunting scene with the hunters posing proudly with their game and dog beside their jeep. It conveys a sense of success.

Created by gemini-2.0-flash-lite on 2025-05-17

Certainly! Here's a description of the image:

Overall Impression

The image is a negative image, rendering it in a grayscale color scheme. It seems to be a photograph taken in a natural outdoor setting, possibly a marsh or forest area. It depicts two men standing near a vintage jeep, along with a dog and some harvested game animals.

Key Elements and Details

- People: Two men are visible. One is seated on the front of the jeep, holding a rifle and smiling. The other stands beside the jeep, also holding a rifle and wearing a hat.

- Vehicle: A classic-looking jeep is the central element. It has a canvas roof and appears to be a military-style vehicle.

- Dog: A dog, possibly a retriever breed, is sitting on the front of the jeep.

- Game: Several dead birds are placed on the jeep's hood. This suggests the men have been hunting.

- Setting: The background has trees, tall grass, and a swampy, natural environment.

- Composition: The photo is well-framed, with the jeep and figures centrally positioned. The background adds depth to the scene.

- Other elements: The image has paper edges showing, a numbered punch hole from a binder or filing system. At the bottom of the image, the number "42708" is handwritten.

Overall, the image portrays a scene related to hunting, set in a natural environment.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-02

The black-and-white photograph depicts three men and two dogs in a wooded area. The men are standing near a vintage military-style jeep, which has a canvas cover over the back.

- The man on the left is sitting on the hood of the jeep, holding a rifle. He is dressed in a light-colored jacket and pants, and he is wearing a cap.

- The man in the middle is standing beside the jeep, holding a rifle in a relaxed position. He is wearing a dark jacket and pants, along with a hat.

- The man on the right is also standing beside the jeep, holding a rifle. He is dressed in a dark jacket and pants, and he is wearing a hat.

- Two dogs are sitting on the hood of the jeep. One dog appears to be a larger breed, while the other is smaller and spotted.

The background shows a wooded area with trees and underbrush, suggesting that the men and dogs are possibly on a hunting expedition or engaged in some outdoor activity. The photograph has a vintage feel, possibly from the mid-20th century, given the style of the clothing and the vehicle.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-04

This is a black-and-white photograph depicting two individuals and a dog in a rural setting, likely during the early to mid-20th century based on the style of clothing, vehicle, and overall aesthetic.

Foreground and Vehicle: The main subject is a vintage four-wheeled vehicle, possibly a military or utility Jeep, which appears to be parked or in motion on a grassy area. The Jeep is equipped with a canvas top and has a spare tire attached to the back. It has a flatbed on the front, which is used as a makeshift seat or platform for the individuals and the dog. The vehicle has a rustic appearance, consistent with the historical period it suggests.

Individuals and Dog: On the platform of the Jeep, there are two individuals seated. The person on the left appears to be in a relaxed pose, possibly a driver or a passenger, and is wearing a lighter-colored outfit with a hat. The person on the right is standing and appears to be holding a shotgun, suggesting a hunting activity. Both individuals are wearing jackets and hats, which could indicate a cool climate or outdoor work. The dog, sitting between the two individuals on the platform, adds to the rural and outdoor setting.

Background: The background consists of trees, shrubs, and what appears to be a swampy or wetland area, as indicated by the visible vegetation and the appearance of the ground. The trees are sparsely spaced, and there is a sense of wilderness in the environment.

Overall Atmosphere: The photograph evokes a sense of outdoor adventure, rural life, and possibly hunting or a labor-intensive activity in a natural setting. The monochromatic color palette and the style of the vehicle and clothing contribute to a nostalgic feel, suggesting the photo was taken during a time when such vehicles and attire were common. The image number "42708" on the bottom right corner likely indicates it is from a collection or archive.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-04

This black-and-white photograph depicts two men and several dogs in an outdoor setting. One man is seated in the driver's seat of a vintage open-sided vehicle, while the other man stands next to the vehicle, gripping the steering wheel. The vehicle appears to be a modified truck or jeep, with a large number of coons (raccoons) hanging from the front grille. The background shows a natural environment with trees and vegetation. The men are dressed in casual attire suitable for outdoor activities. The image has a historical feel, suggesting it might be from the mid-20th century. There is a number "42708" at the bottom right corner of the image.