Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 34-42 |
| Gender | Male, 98.8% |
| Calm | 99.1% |
| Sad | 0.5% |
| Angry | 0.1% |
| Confused | 0.1% |
| Disgusted | 0.1% |
| Surprised | 0.1% |
| Happy | 0% |
| Fear | 0% |

Feature analysis

Amazon

AWS Rekognition

| Feature | Confidence |
| --- | --- |
| Person | 99.2% |

Categories

Imagga

created on 2022-01-15

| Category | Confidence |
| --- | --- |
| streetview architecture | 80.2% |
| paintings art | 17.5% |
| people portraits | 1.8% |

Captions

Microsoft

created by unknown on 2022-01-15

| Caption | Confidence |
| --- | --- |
| a group of people standing in a room | 95.8% |
| a group of people standing in front of a window | 91% |
| a group of people in a room | 90.9% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-14

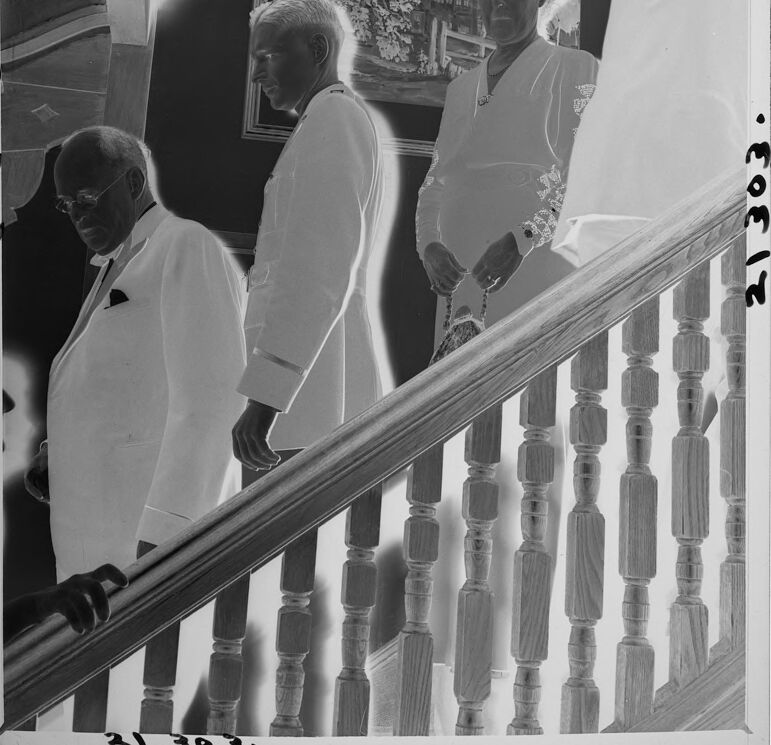

photograph of the wedding of person and noble person.

Salesforce

Created by general-english-image-caption-blip on 2025-05-23

a photograph of a man in a suit and tie standing on a staircase

OpenAI GPT

Created by gpt-4o-2024-11-20 on 2025-06-15

The image is a black-and-white negative capturing individuals on a staircase. The wooden balusters and handrail of the staircase are prominently visible in the foreground. The background includes a framed artwork depicting a scenic building surrounded by foliage. The individuals are dressed in formal attire, with one holding what appears to be a small handbag. The negative format lends the image a high-contrast appearance, reversing light and dark tones.

Created by gpt-4o-2024-08-06 on 2025-06-15

This image is a photographic negative showing an interior setting where a group of people are standing on a wooden staircase. The individuals are dressed in formal attire, with men wearing suits and a woman in a dress. The staircase has turned balusters and a handrail, characteristic of classic architecture. On the wall beside the staircase is a framed painting depicting a building surrounded by greenery. The overall composition suggests a moment captured during a social or formal gathering. The numbers 21 303 are seen written on the edges, possibly indicating a catalog or reference number for the photograph.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-20

The image appears to depict several individuals in religious attire, likely priests or clergy members, standing on a staircase or balcony. They are wearing white robes and appear to be observing or participating in some kind of religious ceremony or event. The background includes a framed artwork or photograph on the wall, suggesting this is taking place in a religious or institutional setting.

Created by us.anthropic.claude-3-opus-20240229-v1:0 on 2025-06-20

The black and white image shows three elderly gentlemen standing on a staircase and looking at a framed painting or photograph on the wall. They are all dressed formally in light-colored suits or jackets. The staircase has wooden banisters and spindles. The framed artwork they are examining appears to depict a house or building exterior.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-20

This is a black and white photograph showing several people dressed in white formal attire descending what appears to be a wooden staircase. The staircase has decorative turned balusters, and there's a framed artwork visible on the wall in the background showing what looks like a house or building among trees. The subjects are wearing white dinner jackets or similar formal wear, and the image appears to be from an earlier era, possibly mid-20th century based on the style and photographic quality. The composition creates an interesting visual rhythm as the figures descend the stairs in sequence.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-26

This image is a black-and-white photograph of four people standing on a staircase. The individuals are dressed in formal attire, with the men wearing white suits and the woman wearing a white dress. They are positioned on a staircase with wooden banisters, and a framed picture hangs on the wall behind them.

The photograph appears to be a historical or archival image, as it has been digitized and features handwritten notes along the edges. These notes include numbers and letters, which may indicate the image's cataloging information or other relevant details. Overall, the image presents a formal and dignified scene, capturing a moment in time from the past.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-26

The image depicts a black-and-white photograph of four individuals descending a staircase, with the photographer's position located at the top right corner of the image.

The staircase features a wooden railing and balusters, with the individuals dressed in formal attire. The man on the left wears a white suit jacket and tie, while the man in the middle sports a white suit jacket. The woman on the right is dressed in a white dress, and the man on the top right is attired in a white shirt.

In the background, a painting adorns the wall, accompanied by a column and a doorway. The overall atmosphere of the image suggests a formal event or gathering, possibly a wedding or a social function. The photographer's presence at the top right corner of the image adds a sense of intimacy and immediacy to the scene, capturing the moment as it unfolds.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-05-26

The black-and-white photo shows four people walking up a staircase. The staircase has a wooden handrail and a wooden floor. Three of them are wearing white coats, while the other one is wearing a white blouse. The two men are wearing eyeglasses. One of them is holding a handbag. There is a picture frame mounted on the wall behind them. The photo has a watermark with some text and numbers on the bottom left.

Created by amazon.nova-lite-v1:0 on 2025-05-26

The image is a black-and-white photograph of a group of people standing on a staircase. The group consists of three men and one woman. The man on the left is wearing a white suit, a bow tie, and glasses. The man in the middle is also wearing a white suit. The woman is wearing a white dress with a design on the sleeve and is holding a handbag. The man on the right is wearing a white coat and glasses. Behind them is a wall with a picture frame.

Google Gemini

Created by gemini-2.0-flash on 2025-05-18

The black and white inverted image shows four people descending a set of wooden stairs. A hand can be seen grasping the banister on the lower left of the image. An older gentleman is dressed in a suit and glasses, and appears to be the first to descend. Behind him is a man in a military uniform. A woman in a light colored outfit, holding a small bag is next. Following behind her is another person, who is partially visible. A framed picture hangs on the wall behind the group. The image is framed with handwritten numbers along the edges of the image.

Created by gemini-2.0-flash-lite on 2025-05-18

The image is a black and white negative of a photograph taken indoors. It features a group of four people, presumably adults, descending a staircase. The composition shows the people at different points along the staircase, creating a sense of movement.

The first person on the left is an older man wearing glasses and a suit, his head is slightly bowed. Next to him, and just behind him, is a man in a military-style jacket. Then a woman is centered holding a small patterned bag. The last person, further up the stairs, is also a man in a long jacket.

The staircase itself is a prominent element, with its wooden balustrade and turned spindles. A framed picture hangs on the wall. The image appears to have been taken in a formal setting.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-06-03

The image is a black-and-white photograph that appears to be a composite of multiple exposures, creating a ghostly, ethereal effect. It features three men dressed in formal attire, possibly white suits or robes, ascending a staircase. The staircase has wooden balusters and handrails, and the setting appears to be indoors, possibly in a formal or official building.

The men are captured at different points along the staircase, giving the impression of movement and progression. The background includes a framed painting or photograph on the wall, adding to the formal atmosphere of the scene. The multiple exposures create a sense of overlapping and transparency, with the figures of the men appearing semi-transparent and slightly blurred. This effect enhances the artistic and surreal quality of the image.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-05

The image is a black-and-white photograph showing a group of people standing indoors, likely in a formal setting. The individuals are dressed in formal attire, with men wearing white coats and what appear to be bow ties, and a woman in a light-colored dress holding a small handbag. They are positioned near a wooden staircase with a balustrade. In the background, there is a framed painting depicting a landscape scene, possibly a town or village. The overall atmosphere suggests a formal or ceremonial event, with the individuals possibly participating in or observing the occasion. The number "21303" is printed repeatedly in the corner of the image, which might indicate a catalog or inventory number.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-05

This black-and-white photograph captures a formal scene where three men in white suits are descending a staircase. The men appear to be in a well-decorated room, with a painting hanging on the wall in the background. The staircase has a wooden railing with vertical balusters. The image has a vintage quality, and there is a visible timestamp "21303" on the left side of the photograph. The overall atmosphere suggests a formal or official event.