Machine Generated Data

Tags

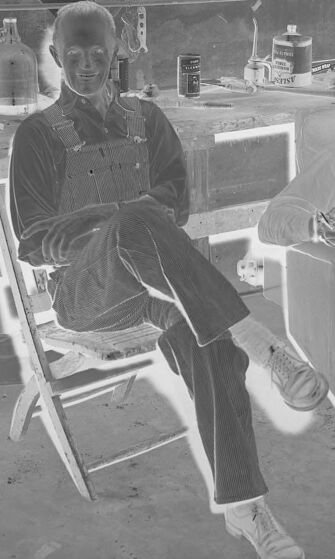

Amazon

created on 2019-03-25

| Tag | Confidence (%) |
|---|---|
| Furniture | 100 |
| Person | 99.3 |
| Human | 99.3 |
| Person | 99.2 |
| Chair | 98.3 |
| Chair | 96.9 |
| Shoe | 88.8 |
| Apparel | 88.8 |
| Footwear | 88.8 |
| Clothing | 88.8 |
| Shoe | 88.4 |
| Shoe | 85.9 |
| Table | 84.3 |
| Clinic | 81.9 |
| Indoors | 68.9 |
| Room | 68.9 |
| Helmet | 66.8 |
| People | 63.9 |
| Dining Table | 60 |
| Photography | 58.6 |
| Photo | 58.6 |
| Workshop | 57.4 |
| Shorts | 56.8 |
| Drawing | 55.6 |
| Art | 55.6 |

Clarifai

created on 2019-03-25

Imagga

created on 2019-03-25

| Tag | Confidence (%) |
|---|---|
| brass | 87 |
| trombone | 82.6 |
| wind instrument | 70.9 |
| musical instrument | 47.6 |
| man | 20.8 |
| sax | 19.2 |
| male | 19.1 |
| chair | 18.4 |
| people | 17.8 |
| business | 15.8 |
| group | 15.3 |
| work | 14.9 |
| black | 13.8 |
| person | 13.5 |
| technology | 12.6 |
| room | 12.4 |
| stage | 11.7 |
| businessman | 11.5 |
| table | 11.2 |
| engineer | 10.3 |
| sitting | 10.3 |
| men | 10.3 |
| adult | 10 |
| job | 9.7 |
| interior | 9.7 |
| concert | 9.7 |
| music | 9.6 |
| chart | 9.5 |
| building | 9.1 |
| modern | 9.1 |
| cornet | 9 |
| body | 8.8 |
| equipment | 8.7 |
| boy | 8.7 |
| construction | 8.5 |
| studio | 8.3 |
| silhouette | 8.3 |
| human | 8.2 |
| blackboard | 8.1 |
| classroom | 8.1 |
| teacher | 8.1 |
| working | 7.9 |
| women | 7.9 |
| design | 7.9 |
| teaching | 7.8 |
| play | 7.7 |
| musical | 7.7 |
| sky | 7.6 |
| engineering | 7.6 |
| pencil | 7.6 |
| professional | 7.4 |
| company | 7.4 |
| light | 7.3 |
| computer | 7.2 |
| worker | 7.2 |
| drawing | 7.1 |
| device | 7 |

Google

created on 2019-03-25

| Tag | Confidence (%) |
|---|---|
| Photograph | 96.7 |
| Snapshot | 88.2 |
| Room | 71.4 |
| Photography | 62.4 |
| Black-and-white | 56.4 |

Microsoft

created on 2019-03-25

| Tag | Confidence (%) |
|---|---|
| person | 94.4 |
| sport | 89.1 |
| black and white | 60.9 |
| boy | 44.7 |
| child | 37.9 |
| monochrome | 20.6 |

Color Analysis

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 27-44 |
| Gender | Male, 98.9% |
| Surprised | 1.4% |
| Angry | 2.3% |
| Sad | 5.6% |
| Calm | 77.5% |
| Happy | 1% |
| Confused | 2.4% |
| Disgusted | 9.8% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 26-43 |
| Gender | Male, 98.2% |
| Happy | 28.7% |
| Sad | 5.3% |
| Angry | 3.2% |
| Disgusted | 1.5% |
| Surprised | 3.8% |
| Calm | 51.6% |
| Confused | 5.9% |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| interior objects | 52.8% |
| paintings art | 27.8% |
| streetview architecture | 9.9% |
| text visuals | 8.4% |

Captions

Microsoft

created on 2019-03-25

| Caption | Confidence |
|---|---|
| a group of people sitting in a chair | 80.4% |
| a group of people sitting in chairs | 80.3% |
| a group of people sitting on a chair | 77.7% |

Text analysis

Amazon

:

: WO MOS

WO

MOS