Machine Generated Data

Face analysis

Amazon

AWS Rekognition

| Attribute | Value |
| --- | --- |
| Age | 47-53 |
| Gender | Male, 93.7% |
| Happy | 96.8% |
| Calm | 2.2% |
| Confused | 0.4% |
| Sad | 0.3% |
| Disgusted | 0.1% |
| Angry | 0.1% |
| Surprised | 0.1% |
| Fear | 0.1% |

Feature analysis

Amazon

AWS Rekognition

| Label | Confidence |
| --- | --- |
| Person | 99.3% |

Categories

Imagga

Created on 2022-01-15

| Category | Confidence |
| --- | --- |
| people portraits | 92% |
| events parties | 7.2% |

Captions

Microsoft

Created by unknown on 2022-01-15

| Caption | Confidence |
| --- | --- |
| a group of people sitting in front of a window | 87.6% |
| a group of people sitting at a table in front of a window | 87.2% |
| a group of people sitting at a table | 87.1% |

Clarifai

Created by general-english-image-caption-clip on 2025-07-12

photograph of a group of doctors in a medical laboratory.

Salesforce

Created by general-english-image-caption-blip on 2025-05-19

a photograph of a group of people in lab coats and lab coats

OpenAI GPT

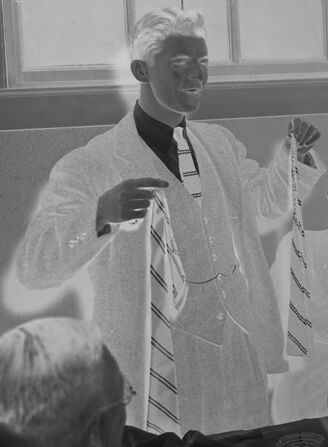

Created by gpt-4o-2024-11-20 on 2025-06-08

This image appears to be a negative photograph of a formal gathering or meeting, possibly a banquet or social event. The room has a long table covered with a dark tablecloth, holding glasses, dishes, and decorative items. Several men dressed in suits are seated around the table, with one man standing and holding up what looks like neckties. The environment includes windows that let natural light in and a mirror on the wall reflecting part of the room. The atmosphere suggests a formal or celebratory occasion.

Created by gpt-4o-2024-08-06 on 2025-06-08

The image depicts a group of men in formal attire seated around a table, which is set with glasses and a cloth. The setting appears to be a formal gathering or meeting. The man standing is holding a piece of clothing, possibly a tie, and appears to be speaking or presenting to the seated group. The room has windows with rectangular panes and a decorative mirror on the wall that reflects part of the room. The atmosphere suggests a sophisticated and perhaps ceremonial or business-related occasion.

Anthropic Claude

Created by anthropic.claude-3-haiku-20240307-v1:0 on 2025-06-13

The image appears to depict a group of men in a formal setting, likely a gathering or event of some kind. The men are dressed in white coats, suggesting they may be medical professionals or scientists. They are seated around a table, some holding glasses, indicating this may be a social or celebratory occasion. The room has large windows and appears to be well-lit. The overall scene conveys a sense of camaraderie and professionalism among the individuals shown.

Created by us.anthropic.claude-3-5-sonnet-20241022-v2:0 on 2025-06-13

This is a black and white photograph that appears to be from an earlier era, showing several people seated at what looks like a bar or counter. They are all wearing white jackets or coats with dark ties, suggesting they might be medical professionals or perhaps members of a service industry. One person is standing while others are seated. There are glasses on the counter and windows visible in the background. The lighting in the photo creates an interesting contrast effect, with the white jackets standing out prominently against the darker background. The number "9534" appears to be written on the photograph, possibly indicating it's part of an archived collection.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2025-05-24

The image is a black-and-white photograph of a group of men in white lab coats seated at a table. The men are all wearing white lab coats and appear to be engaged in some sort of activity or discussion. One man is holding up a tie, while another is gesturing with his hand. The table is set with glasses and plates, suggesting that they may be having a meal or snack.

In the background, there are windows and a mirror on the wall, which adds depth and context to the scene. The overall atmosphere of the image is one of camaraderie and collaboration, as the men seem to be working together or sharing ideas.

The image has a vintage feel to it, with the black-and-white tones and the style of the clothing and furniture suggesting that it may have been taken in the mid-20th century. Overall, the image captures a moment of interaction and collaboration among a group of men in a professional or academic setting.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2025-05-24

The image is a black-and-white photograph of a group of men in white suits and ties, gathered around a table with glasses and pitchers on it. One man stands at the front of the room, holding up a tie.

- The men are all wearing white suits and ties, with some having white hair.

- They are seated around a table with glasses and pitchers on it.

- One man stands at the front of the room, holding up a tie.

- The background of the image shows a room with windows and a mirror on the wall.

- The overall atmosphere of the image suggests that the men are gathered for a formal event or meeting.

The image appears to be a vintage photograph, possibly from the early 20th century, given the style of clothing and the quality of the image. It is likely that the men in the image are members of a social club or organization, given their formal attire and the setting of the photograph.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-05-24

The image is a black-and-white photograph of a group of people sitting around a table. The man in the center, who appears to be the main focus, is standing and smiling. He is wearing a white coat and a tie. Behind him, there are four men sitting at the table, all wearing white coats. One of them is wearing glasses. The table has wine glasses and other items on it.

Created by amazon.nova-lite-v1:0 on 2025-05-24

The image is a black-and-white photograph of a group of men gathered in a room, likely for a formal event or meeting. The men are dressed in white coats and ties, and they appear to be engaged in conversation. The man in the center of the image is holding a glass and appears to be speaking, while the other men are listening attentively. The room has a simple, unadorned interior, with a window in the background and a mirror on the wall.

Google Gemini

Created by gemini-2.0-flash-lite on 2025-04-29

Here is a description of the image:

The image is a black and white negative of a group of men gathered in a room. The main focus is a man standing in front of a table, holding up a tie and gesturing with his other hand as if he's presenting or explaining something. He is well-dressed in a suit jacket, a collared shirt, and a patterned tie.

Several other men are seated around the table, all also dressed in suits. Some are looking at the man with the tie, while others appear to be gazing out towards the viewer or elsewhere in the room. The table is covered with a dark tablecloth, and there are glasses and what appears to be a pitcher on it.

The room has large windows in the background, and a mirror on the wall reflects the image of a man. The overall atmosphere is one of a business meeting or social gathering from the mid-20th century.

Created by gemini-2.0-flash on 2025-04-29

Here is a description of the image:

The image appears to be a black and white photograph or scan, possibly of a group of men seated around a table. They are dressed in suits and ties, suggesting a formal or business gathering.

In the foreground, one man is holding up a tie, seemingly displaying or discussing it with the others. Glasses and perhaps other items are on the table.

In the background, there are windows allowing light into the room, and a mirror is visible on the wall. The number "9534" is written across the top and bottom of the image, which may be an archival reference number.

The photo has the look of being from the mid-20th century based on the clothing and style. Due to the negative appearance, some details may be hard to discern accurately.

Mistral Pixtral

Created by us.mistral.pixtral-large-2502-v1:0 on 2025-05-25

The image depicts a group of men seated at a table in what appears to be a formal or semi-formal setting. They are dressed in white lab coats, suggesting that they might be scientists, doctors, or professionals in a related field. The men are smiling and appear to be in a jovial mood, with one man standing and holding a string of beads or a necklace. The setting includes a large window on the left side of the image, a framed mirror on the wall, and several other men seated around the table. The table is covered with a dark tablecloth and has glasses and other items on it. The overall atmosphere seems to be one of camaraderie and celebration. The image is in black and white, indicating it might be from an earlier era.

Qwen

Created by Qwen/Qwen2.5-VL-7B-Instruct on 2025-07-04

The image is a black-and-white photograph of a group of men in formal attire, likely in a social or formal setting. They are seated around a table that has glasses and possibly some food or other items on it. The men are dressed in white coats, which could suggest they are part of a professional or ceremonial event, possibly in a hospital, medical facility, or academic setting. The room has a classic design with multiple windows and a mirror on the wall. The atmosphere appears to be relaxed and social, with the men smiling and engaging with each other. The photograph has a vintage feel, likely taken in the early to mid-20th century.

Created by Qwen/Qwen2.5-VL-72B-Instruct on 2025-07-04

This black-and-white photograph depicts a group of men, likely medical professionals, gathered in a room that appears to be a lounge or dining area. They are dressed in white coats, suggesting they are doctors or researchers. The man on the left is standing and holding a striped tie, while the others are seated around a table with glasses and other items on it. The room has several windows and a mirror on the wall. The image has a vintage look, indicating it might be from an earlier era. There are numbers and text on the photograph, possibly for cataloging or identification purposes.