Machine Generated Data

Tags

Amazon

created on 2019-11-16

| Tag | Confidence (%) |
|---|---|
| Person | 99.8 |
| Human | 99.8 |
| Person | 99.7 |
| Person | 99.6 |
| Meal | 98.3 |
| Food | 98.3 |
| Dating | 88.9 |
| Sitting | 86.2 |
| Tablecloth | 82.8 |
| Furniture | 82.5 |
| Table | 82.5 |
| Beverage | 81.9 |
| Drink | 81.9 |
| Plant | 78.5 |
| Dish | 77.9 |
| Glass | 74.7 |
| People | 73.9 |
| Apparel | 73.5 |
| Clothing | 73.5 |
| Dining Table | 67.8 |
| Alcohol | 67.6 |
| Leisure Activities | 67.4 |
| Picnic | 66.6 |
| Vacation | 66.6 |
| Home Decor | 64.2 |
| Vegetation | 62.2 |
| Tabletop | 61.7 |
| Face | 61.3 |
| Tree | 60.9 |
| Photography | 60.8 |
| Portrait | 60.8 |
| Photo | 60.8 |
| Child | 58.8 |
| Blonde | 58.8 |
| Woman | 58.8 |
| Girl | 58.8 |
| Female | 58.8 |
| Teen | 58.8 |
| Kid | 58.8 |
| Supper | 58.3 |
| Dinner | 58.3 |

Clarifai

created on 2019-11-16

| Tag | Confidence (%) |
|---|---|
| people | 99.9 |
| group | 98.6 |
| group together | 98 |
| adult | 98 |
| man | 97.9 |
| woman | 97.4 |
| sit | 95.9 |
| several | 95.2 |
| four | 94.6 |
| two | 93.6 |
| facial expression | 92.5 |
| furniture | 91.9 |
| administration | 91.1 |
| leader | 90.3 |
| recreation | 90.1 |
| three | 89.8 |
| wear | 89.5 |
| child | 87.4 |
| many | 87.2 |
| five | 86.3 |

Imagga

created on 2019-11-16

| Tag | Confidence (%) |
|---|---|
| mother | 34.2 |
| kin | 32.8 |
| male | 32.2 |
| people | 29.6 |
| man | 27.8 |
| child | 26.5 |
| family | 24 |
| together | 23.7 |
| couple | 23.5 |
| happy | 21.9 |
| parent | 21.9 |
| home | 19.9 |
| park | 19.8 |
| outdoors | 18.9 |
| happiness | 18.8 |
| lifestyle | 18.8 |
| person | 18.4 |
| smiling | 18.1 |
| portrait | 17.5 |
| father | 16.7 |
| love | 16.6 |
| women | 15.8 |
| senior | 15 |
| sitting | 14.6 |
| adult | 14.4 |
| garden | 14.2 |
| mature | 13.9 |
| daughter | 13.6 |
| old | 13.2 |
| friends | 13.1 |
| outdoor | 13 |
| dad | 13 |
| men | 12.9 |
| groom | 12.4 |
| togetherness | 12.3 |
| boy | 12.2 |
| smile | 12.1 |
| attractive | 11.9 |
| world | 11.7 |
| husband | 11.6 |
| cheerful | 11.4 |
| group | 11.3 |
| handsome | 10.7 |
| retired | 10.7 |
| married | 10.5 |
| tree | 10.5 |
| elderly | 10.5 |
| pretty | 10.5 |
| casual | 10.2 |
| teen | 10.1 |
| cute | 10 |
| aged | 10 |
| childhood | 9.8 |
| kid | 9.7 |
| fun | 9.7 |
| grandma | 9.6 |
| retirement | 9.6 |
| gift | 9.5 |
| day | 9.4 |
| two | 9.3 |
| meal | 9.3 |
| girls | 9.1 |
| children | 9.1 |
| table | 8.7 |
| holiday | 8.6 |
| outside | 8.6 |
| face | 8.5 |
| kids | 8.5 |
| bench | 8.4 |
| room | 8.3 |
| teenager | 8.2 |
| son | 8.1 |
| romance | 8 |
| grandmother | 7.8 |
| innocent | 7.7 |
| toddler | 7.6 |
| wife | 7.6 |
| enjoying | 7.6 |
| eating | 7.6 |
| holding | 7.4 |
| grandfather | 7.4 |
| adorable | 7.4 |
| looking | 7.2 |
| clothing | 7 |

Google

created on 2019-11-16

| Tag | Confidence (%) |
|---|---|
| Photograph | 97.7 |
| People | 95.4 |
| Black-and-white | 91.5 |
| Snapshot | 88.2 |
| Monochrome photography | 87.7 |
| Monochrome | 83.8 |
| Photography | 81.8 |
| Stock photography | 73.1 |
| Fun | 70.4 |
| Sitting | 68.9 |
| Tree | 68.7 |
| Gentleman | 67.8 |
| Conversation | 67 |
| Adaptation | 67 |
| Event | 62.7 |
| Family | 61.8 |
| Style | 55 |

Color Analysis

Face analysis


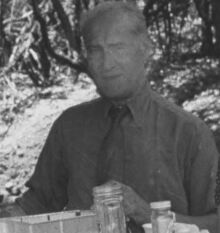

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 36-52 |
| Gender | Male, 98.9% |
| Happy | 95% |
| Sad | 0.7% |
| Disgusted | 1.4% |
| Surprised | 0.5% |
| Fear | 0.3% |
| Angry | 0.9% |
| Confused | 0.5% |
| Calm | 0.6% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 22-34 |
| Gender | Female, 98.2% |
| Angry | 0.2% |
| Surprised | 0.2% |
| Disgusted | 0.1% |
| Confused | 0.1% |
| Fear | 0.1% |
| Calm | 0.2% |
| Happy | 99.2% |
| Sad | 0% |

AWS Rekognition

| Attribute | Value |
|---|---|
| Age | 39-57 |
| Gender | Male, 96.8% |
| Happy | 0.4% |
| Sad | 3.3% |
| Disgusted | 0.2% |
| Surprised | 0.2% |
| Fear | 0.1% |
| Angry | 1.4% |
| Confused | 0.2% |
| Calm | 94.2% |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 54 |
| Gender | Male |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 33 |
| Gender | Female |

Microsoft Cognitive Services

| Attribute | Value |
|---|---|
| Age | 44 |
| Gender | Male |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very likely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very likely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Google Vision

| Attribute | Likelihood |
|---|---|
| Surprise | Very unlikely |
| Anger | Very unlikely |
| Sorrow | Very unlikely |
| Joy | Very unlikely |
| Headwear | Very unlikely |
| Blurred | Very unlikely |

Feature analysis

Categories

Imagga

| Category | Confidence |
|---|---|
| paintings art | 55.8% |
| food drinks | 33.4% |
| nature landscape | 4.4% |
| people portraits | 4.1% |
| interior objects | 1.1% |

Captions

Microsoft

created on 2019-11-16

| Caption | Confidence |
|---|---|
| a group of people sitting at a table | 98.3% |
| a group of people sitting around a table | 98.2% |
| a group of people sitting at a table posing for a photo | 97.9% |