Machine Generated Data

Face analysis

AWS Rekognition

| Attribute | Estimate |
|---|---|
| Age | 47-65 |
| Gender | Male, 98.8% |
| Angry | 46.2% |
| Disgusted | 0.5% |
| Surprised | 0.9% |
| Fear | 0.4% |
| Happy | 1.6% |
| Confused | 5.9% |
| Calm | 35.5% |
| Sad | 9% |

Feature analysis

Amazon

| Feature | Confidence |
|---|---|
| Person | 95.4% |

Categories

Imagga

| Category | Confidence |
|---|---|
| events parties | 89% |
| people portraits | 10% |

Captions

Microsoft

Created on 2020-04-23

| Caption | Confidence |
|---|---|
| a man wearing a suit and tie | 94.3% |
| a man in a suit and tie | 94.2% |
| a man standing in front of a building | 75.2% |

OpenAI GPT

Created by gpt-4o-2024-05-13 on 2024-12-30

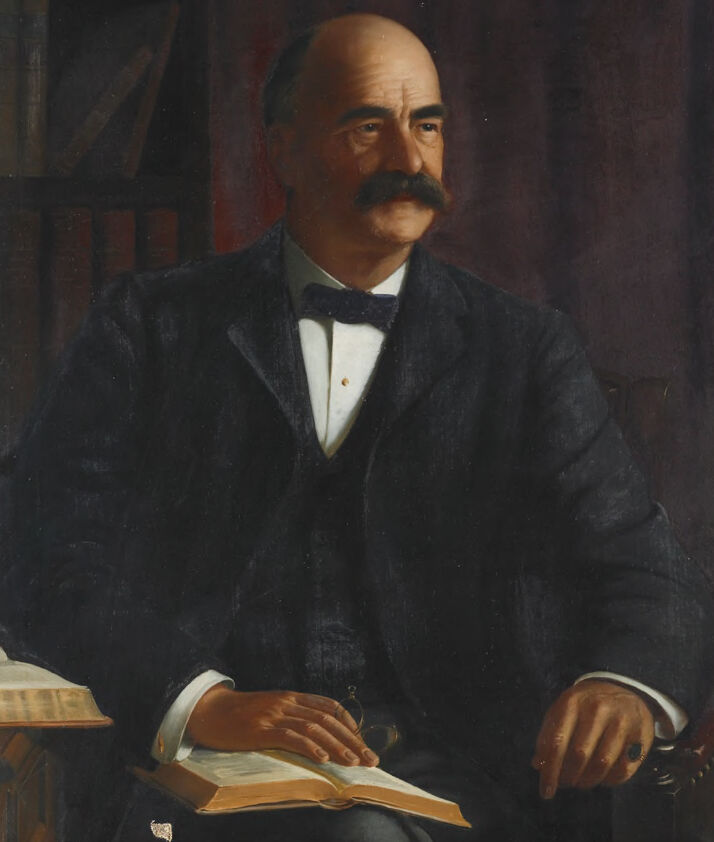

The image depicts a man wearing a dark suit, white shirt, and bow tie, seated in a dignified pose. He is holding a small book or document in his left hand, while another open book is resting on his lap. The background includes a bookshelf with several books, suggesting a scholarly or intellectual setting. The overall mood of the image conveys a sense of sophistication and contemplation.

Created by gpt-4 on 2024-01-25

The image depicts an individual dressed in a black suit with a white shirt and black bowtie, seated with one hand resting on an open book and the other holding glasses. The person appears to be situated in a room with bookshelves filled with books in the background, creating an atmosphere of study or academic work. The warm tones of the setting suggest a serene and contemplative environment.

Anthropic Claude

Created by claude-3-haiku-48k-20240307 on 2024-03-29

The image shows a portrait of a man with a mustache, wearing a dark suit and bow tie. He is sitting at a desk, with one hand resting on an open book. The background is dark, creating a somber and serious atmosphere. The man's expression is composed and thoughtful, suggesting he may be a scholar or academic.

Meta Llama

Created by us.meta.llama3-2-90b-instruct-v1:0 on 2024-12-24

The image depicts a portrait of a man in a suit, sitting in a chair with a book on his lap. The man is balding and has a mustache. He is wearing a dark-colored suit with a white shirt and a black bow tie. His right hand rests on top of the book, while his left hand is placed on the armrest of the chair. In the background, there appears to be a bookshelf filled with books. The overall atmosphere of the image suggests that it is a formal portrait, possibly taken in a library or study. The man's attire and the presence of books in the background suggest that he may be an intellectual or academic figure.

Created by us.meta.llama3-2-11b-instruct-v1:0 on 2024-11-23

The image depicts a painting of a man in a suit, sitting in a chair and holding an open book. The man is balding with a mustache and is wearing a dark-colored suit jacket over a white shirt and black bow tie. He has his left hand resting on the book, which appears to be a large, leather-bound volume. His right hand is not visible in the frame. The background of the painting is dark, with a red curtain visible behind the man. To the left of the man, there is a small table or desk with another book or object on it. The overall atmosphere of the painting suggests that the man is a scholar or intellectual, engaged in reading and contemplation. The painting is signed by the artist in the bottom-right corner, but the signature is illegible. The painting itself appears to be an oil on canvas work, with visible brushstrokes and texture. The overall style of the painting is realistic, with attention to detail and a focus on capturing the subject's likeness and expression.

Amazon Nova

Created by amazon.nova-pro-v1:0 on 2025-01-04

The painting depicts a man sitting in a chair with a book in front of him. He is wearing a black suit with a white inner shirt, a black bow tie, and a ring on his finger. He has a mustache and is looking to the left. Behind him is a wooden shelf with several books on it. The painting has a signature on the bottom right corner.

Created by amazon.nova-lite-v1:0 on 2025-01-04

The image is a portrait of a man, possibly an older gentleman, who is seated on a chair. He is dressed in a formal suit and bow tie. He is holding a book in his lap and looking at it. The man has a mustache and a bald head. Behind him is a shelf with several books on it. The image has a slightly blurry effect. The painting is signed by the artist, W.L. Sargent, in the lower right corner.